AI Governance

32 articles tagged "AI Governance"

AI governance bridges the gap between AI innovation and enterprise requirements for security, compliance, and accountability. These articles cover governance frameworks, implementation strategies, and real-world approaches to managing AI systems at scale.

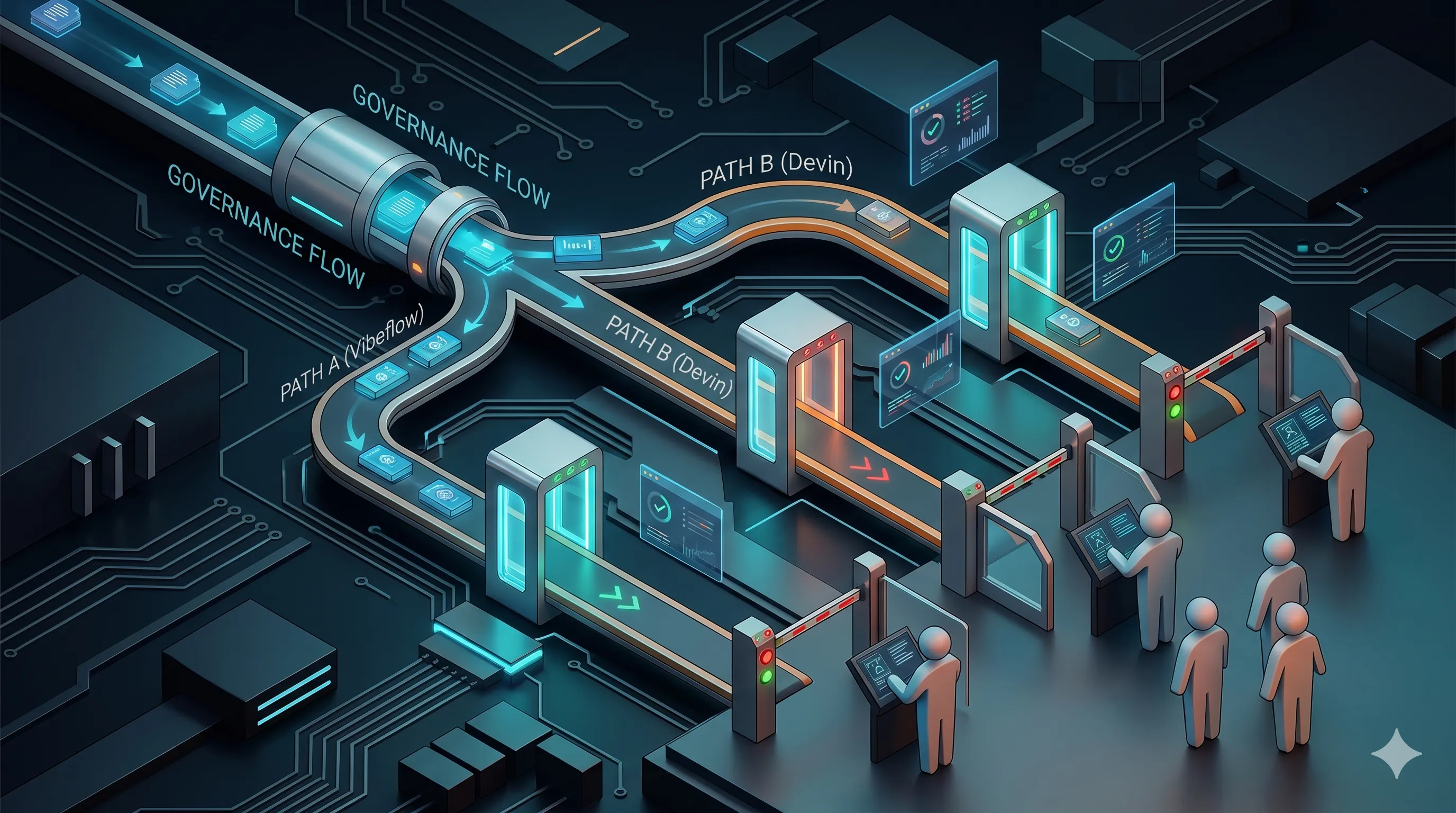

Compliance, Governance, and Review Gates: VibeFlow vs Devin vs Linear

When the auditor asks who built it and how, what does each AI development platform actually capture? Gate-by-gate and SOC 2 + NIST AI RMF mapping for VibeFlow, Devin, and Linear.

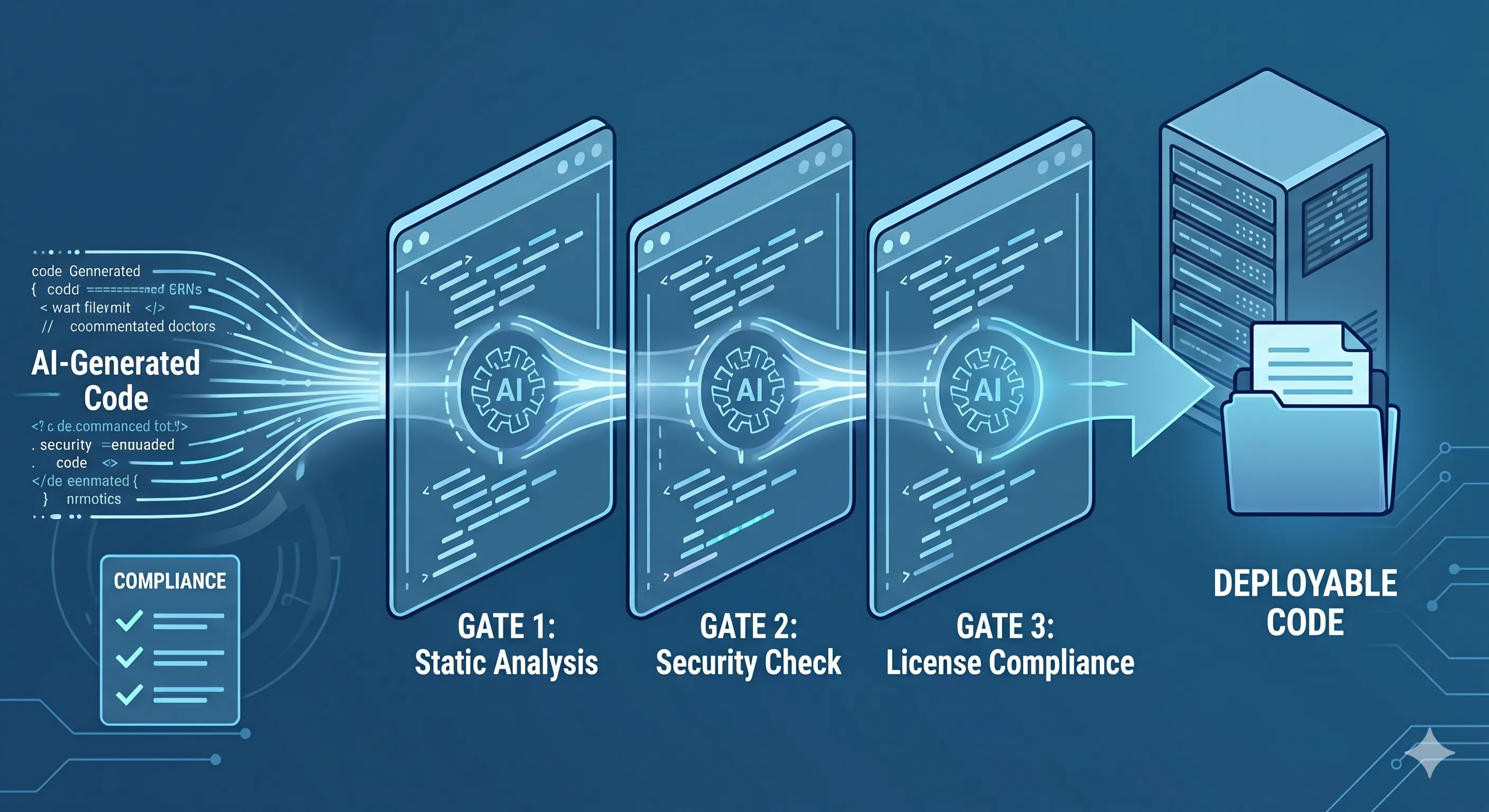

Quality Gates for AI-Generated Code: Automated Review and Compliance

AI-generated code slips through human code review at alarming rates. A four-gate pipeline — lint, security, coverage, and compliance — closes the loop before merge.

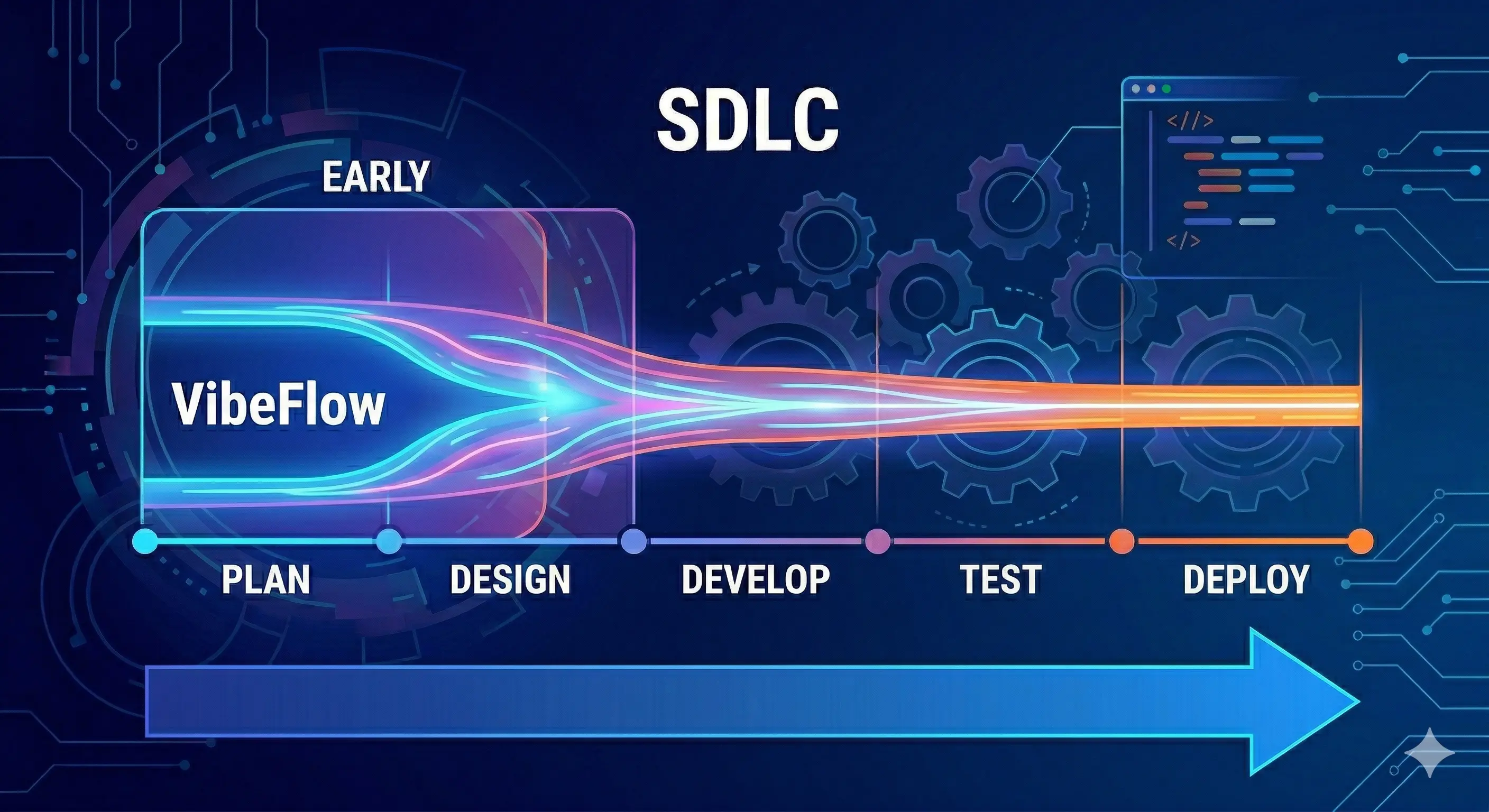

Vibecoding Shift-Left SDLC: Making VibeFlow Real for Teams

Shift-left means catching problems earlier — in design, not production; in code review, not incident response. Vibecoding makes shift-left possible at a new level of granularity. VibeFlow is the governance layer that enforces it.

Weekly AI Command: The Tech Launchpad (Week Ending April 4, 2026)

The first week of April 2026 has marked a definitive shift in the equilibrium of the AI industry. The tension between open-source accessibility and proprietary dominance has reached a boiling point. We are seeing a divergence: high-performance multimodal models are becoming available for local ex...

AI Coding at Scale: Governance Challenges Solo Tools Can't Solve

AI coding tools boost individual velocity but create organizational friction at scale. Here are the 5 challenges and the platform approach that solves them.

AI Governance Maturity Model: From Ad Hoc to Automated in 5 Levels

Most organizations are at Level 1 and don't know it. Here's a 5-level AI governance maturity model with a self-assessment checklist.

Why Enterprise Teams Outgrow Cursor and Devin

Solo AI coding tools boost individual velocity. But at scale, the governance gaps they create become bigger than the productivity gains.

CISO Guide to AI Agent Security: Threat Models for Code Agents

AI coding agents are autonomous actors in your codebase. Here are the 5 threat categories CISOs must address and the defense-in-depth controls that actually work.

AI Governance Frameworks Compared: NIST AI RMF vs EU AI Act vs ISO 42001

Compare three major AI governance frameworks side-by-side. Understand scope, enforcement, and how NIST AI RMF, EU AI Act, and ISO 42001 work together.

Building an AI Audit Trail: From Model Selection to Production

A practical guide to implementing AI audit trails. Learn the 5 layers of traceability every enterprise needs for AI-generated code.

Enterprise AI Risk Management: Beyond Checkbox Compliance

Move from reactive AI compliance to proactive risk management. A CISO's guide to the 4 AI risk categories and a 90-day governance playbook.

Weekly AI Command: The Recap (March 15-20, 2026)

The middle of March 2026 has brought the industry to a definitive crossroads. We are moving past the era of "move fast and break things" into a period defined by high-stakes friction between federal oversight, state-level legislation, and the Pentagon's demand for unrestricted access to frontier AI.

Weekly AI Command: The Tech Launchpad (March 15-20, 2026)

This was the week the AI industry stopped debating model intelligence and started fighting over who controls the desktop. Between a transformative open-source architecture release, Meta's aggressive move into local AI agents, and OpenAI's internal reckoning with product sprawl, March 15-20 made o...

What is OpenClaw? An Executive Overview & Governance Guide

OpenClaw is the fastest-growing open-source AI agent runtime, surpassing 250K GitHub stars. This executive guide covers its architecture, shadow AI risks, and how enterprises can govern local autonomous agents.

Weekly AI Command: The Recap (March 15, 2026)

The pace of AI development is no longer measured in months or quarters. It is measured in days. This week alone, we witnessed the release of two frontier-grade models, a geopolitical standoff involving the world’s most advanced LLMs, and a hardware pivot that signals a shift in the global compute...

Weekly AI Command: The Tech Launchpad (March 15, 2026)

The era of AI experimentation has officially closed. We have entered the era of execution.

The Hidden Risks of Vibecoding: Why Your Enterprise Operations Need Verifiable AI Governance

Vibecoding is the latest shift in software development. It feels like magic: prompting an LLM, watching code appear, and seeing a feature go live in minutes. It’s the ultimate "move fast" strategy. But for the modern enterprise, moving fast without a map is just a faster way to hit a wall.

From Vibes to Verifiable: The New Standard for AI Production Readiness with VibeFlow

Most enterprise AI initiatives today aren't failing because the models are unintelligent. They are failing because the execution is built on a foundation of "vibes."

The DNA of Modern AI: Text Encoding and Vector Databases Explained

Every AI application starts with a translation problem.

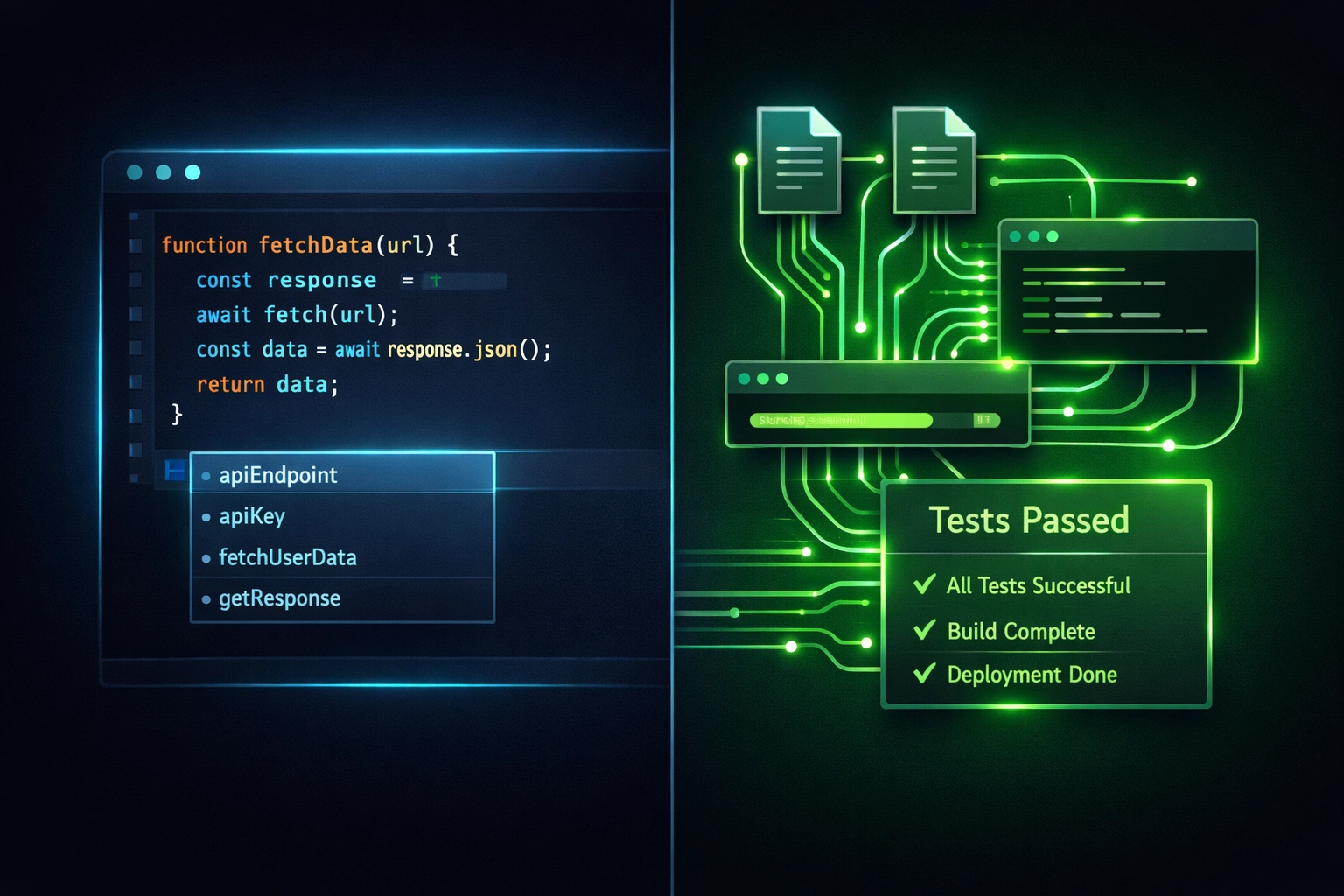

Coding Agents and Shadow AI in Your SDLC: What to Measure

Your developers are shipping faster than ever. That's the good news.

From Cursor to Copilot: The Enterprise Guide to Governing Agentic Coding Tools

Your developers are shipping code written by agents. Not suggested by AI: written, tested, and committed by autonomous systems that navigate your codebase, execute terminal commands, and fix their own bugs.

VibeFlow Framework: Turn Vibe Checks into Verifiable Enterprise AI

We’ve all seen the demos. A developer sits down, types a few sentences into a chat interface, and, like magic, a functional dashboard appears. The industry has dubbed this "Vibe Coding." It’s exhilarating, fast, and feels like the future.

DIY is Dead: Why You Need an AI Governance Platform

DIY AI governance breaks at scale. Learn why enterprises need a dedicated platform for centralized visibility, automated policy enforcement, and audit-ready compliance.

The Production Readiness Checklist for Enterprise AI

The goal is not to slow pilots down, just to stop pretending production is a copy/paste step.

AI Governance Platform Vs DIY Policies: Which Is Better For Your Enterprise?

Your enterprise is deploying AI. Fast. The question isn't whether you need governance: it's how you're going to enforce it.

Check Your AI IQ: Part 3 - The Agentic Frontier

Agentic AI is the most powerful and dangerous layer of the modern AI stack. Learn how autonomous agents work, why governance is critical, and how enterprises can control them.

AI Pilots Don't Fail on Intelligence. They Fail on Execution.

95% of enterprise generative AI projects fail to reach production. The technology works. What fails is the governance, integration, and operational infrastructure that turns a demo into a production system.

Check Your AI IQ: Part 2 - The Predictive AI Powerhouse

Predictive AI demands clean, structured, historical data. Without data sovereignty, enterprises get noise, false confidence, and expensive mistakes. Learn where predictive AI delivers real value.

Context: The Secret Life of LLMs

Every conversation with an LLM starts from scratch. Understanding context windows, stateless architecture, memory systems, and context graphs is the key to getting real enterprise value from AI.

Check Your AI IQ: Part 1 - Decoding the Modern AI Stack

Machine learning, generative AI, predictive AI, and agentic AI form the modern enterprise AI stack. Understanding each pillar is the first step to governing them effectively.

Why Enterprises Need AI Governance Now More Than Ever

As AI adoption accelerates, organizations face increasing risks without proper governance frameworks. Learn why AI governance is essential for success.

Introducing AXIOM: Taming the Enterprise AI Chaos

AI adoption is accelerating faster than ever, but so is the chaos it creates. Today, we announce AXIOM - the platform built to bring order to enterprise AI.
