Compliance, Governance, and Review Gates: VibeFlow vs Devin vs Linear

When the auditor asks who built it and how, what does each AI development platform actually capture? A gate-by-gate comparison and a SOC 2 + NIST AI RMF mapping for VibeFlow, Devin, and Linear.

The single hardest question an AI development platform has to answer is the auditor’s question: who built this, on what authority, and where is the evidence? Marketing pages around AI in software dev rarely engage with this directly, because the answer is structural. What evidence the platform produces is a function of what shape of automation it implements — and the three platforms in this comparison implement three very different shapes.

This article — Series 1, part 2 — picks up the criteria framework from VibeFlow vs Devin vs Linear: An AI-Native Software Development Platform Comparison and goes deep on criterion 3: compliance, governance, and review gates. Article 1C covers integrations and branch management.

What an AI Development Platform Owes a Future Auditor

Before grading the three platforms, fix the bar. An audit of AI-built software at a regulated enterprise will ask, for every change, for at least the following evidence:

- Agent identity and model version — which agent (or which human) produced the diff, on which model, at what version

- The prompt or design intent — the structured input that drove the change

- Independent review evidence — distinct security and QA artifacts, not just “tests passed”

- Human approval trail — who signed off, when, and on the basis of what artifacts

- Control mappings — which framework controls (e.g., SOC 2 Common Criteria, NIST AI RMF Manage subcategories) the change touches and how each is satisfied

The full case for why this is the right list — and how to operationalise it — is in Building an AI Audit Trail. The gate-pipeline that produces these artifacts in practice is in Quality Gates for AI-Generated Code. Read either if any of the five items above feels abstract.
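To make the five items less abstract, here is a minimal sketch of a per-change evidence record and a gap check. The class name, field names, and the `"security"` / `"qa"` keys are illustrative assumptions, not the schema of any of the three platforms.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ChangeEvidence:
    """Hypothetical per-change evidence record; all field names are illustrative."""
    agent_id: Optional[str] = None        # which agent (or human) produced the diff
    model_version: Optional[str] = None   # model and version the agent ran on
    design_intent: Optional[str] = None   # prompt or structured input behind the change
    review_artifacts: dict[str, str] = field(default_factory=dict)  # e.g. {"security": ..., "qa": ...}
    approvals: list[str] = field(default_factory=list)              # who signed off
    control_tags: list[str] = field(default_factory=list)           # e.g. ["SOC2:CC8.1"]


def missing_evidence(record: ChangeEvidence) -> list[str]:
    """Return which of the five evidence elements the record lacks."""
    gaps = []
    # Simplification: treats every author as an agent with a model version.
    if not (record.agent_id and record.model_version):
        gaps.append("agent identity and model version")
    if not record.design_intent:
        gaps.append("prompt or design intent")
    # Independent review means distinct security and QA artifacts, not one test run.
    if not {"security", "qa"}.issubset(record.review_artifacts):
        gaps.append("independent review evidence")
    if not record.approvals:
        gaps.append("human approval trail")
    if not record.control_tags:
        gaps.append("control mappings")
    return gaps
```

A record with a QA report but no security artifact and no control tags would come back with two gaps, which is the kind of answer an auditor's sampling exercise tends to surface late.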

---

title: VibeFlow

---

flowchart LR

V1[Agent identity] --> V2[Prompt + model] --> V3[QA artifact] --> V4[Sec artifact] --> V5[Approval] --> V6[Audit record]---

title: Devin

---

flowchart LR

D1[Agent identity] --> D2[Prompt] --> D3[? external CI] --> D4[? external SAST] --> D5[Human PR approval] --> D6[? buyer-assembled record]---

title: Linear

---

flowchart LR

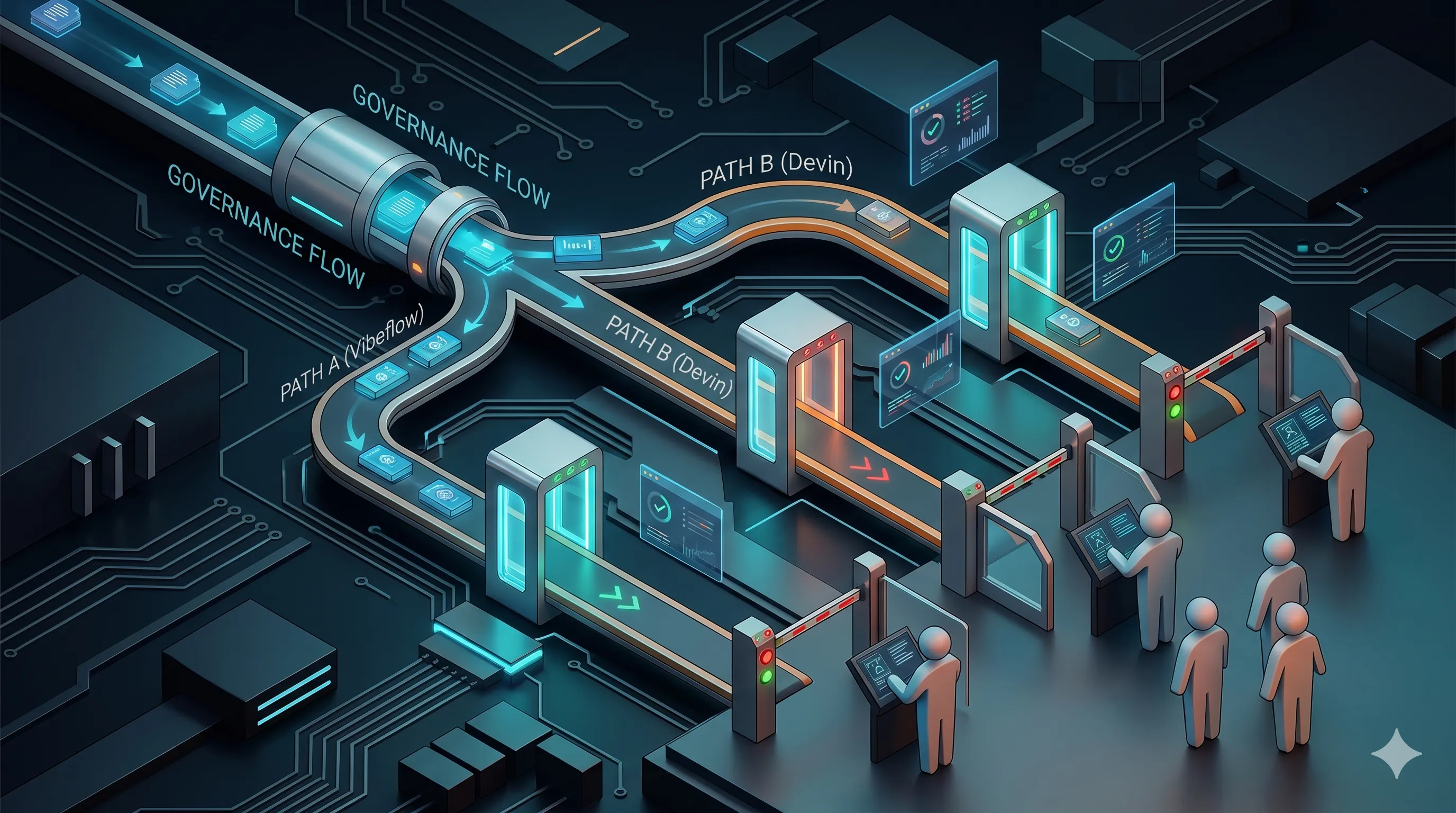

L1[Issue identity] --> L2[Summary] --> L3[? external CI] --> L4[? external SAST] --> L5[Human PR approval] --> L6[? buyer-assembled record]The diagram is the article in one picture. VibeFlow captures all five of those evidence elements as a side-effect of normal flow. Devin and Linear capture some, leave the rest to whatever the buyer assembles around them.

VibeFlow — Gates as First-Class Pipeline Stages

VibeFlow’s workflow encodes the five evidence elements directly. Every work item moves through planning → implementing → done → security_review → qa_verified. Each transition is captured: which agent claimed the item, which model it ran on, the structured execution log, the diff, the test artifact, the security finding artifact, and the human reviewer who approved each stage. Each security review and QA verification is itself a separate agent or human action with its own log.
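The stage flow described above can be sketched as a small state machine that refuses to advance without an artifact and logs every transition. This is an illustration under assumptions: the stage names come from the text, but `WorkItem`, `advance`, and the log fields are hypothetical and do not reflect VibeFlow's actual API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Stage order as listed in the article.
STAGES = ["planning", "implementing", "done", "security_review", "qa_verified"]


@dataclass
class WorkItem:
    """Hypothetical work item that records evidence on every stage transition."""
    title: str
    stage: str = STAGES[0]
    transitions: list[dict] = field(default_factory=list)

    def advance(self, actor: str, artifact: str) -> None:
        """Move to the next stage, recording who moved it and with what artifact."""
        index = STAGES.index(self.stage)
        if index == len(STAGES) - 1:
            raise ValueError(f"{self.title!r} is already at the final stage")
        if not artifact:
            raise ValueError("a transition without an artifact leaves no evidence")
        next_stage = STAGES[index + 1]
        self.transitions.append({
            "from": self.stage,
            "to": next_stage,
            "actor": actor,
            "artifact": artifact,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.stage = next_stage
```

The design point is that the audit record is a by-product of calling `advance`, so nobody has to reconstruct it after the fact.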

The two named stages, security_review and qa_verified, are the explicit instances of Gate 2 (security scan) and Gate 3 (test coverage) from the Quality Gates post. Gate 1 (style/correctness) is enforced by the linter the underlying repo already has; Gate 4 (compliance verification) is covered by the control tags and audit record attached to each todo. Every gate produces a structured artifact attached to the work item, not a comment in a chat log and not a screenshot.

Compliance tags are attached to the work item itself. A todo touching authentication code carries SOC 2 CC6 tags; a todo touching logging carries CC7 / NIST AI RMF Measure tags. The control-mapping table later in this article shows the concrete mapping VibeFlow maintains.
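One way to picture path-based tagging is a rule table matched against the files a todo touches. The sketch below is an assumption for illustration: the path patterns and tag strings are hypothetical, and only the two pairings (authentication with CC6, logging with CC7 and NIST AI RMF Measure) come from the text.

```python
from fnmatch import fnmatch

# Hypothetical path patterns; the tag pairings follow the two examples in the text.
TAG_RULES = [
    ("src/auth/*", ["SOC2:CC6"]),
    ("src/logging/*", ["SOC2:CC7", "NIST-AI-RMF:Measure"]),
]


def tags_for_change(changed_paths):
    """Collect control tags for the touched paths, in first-seen order, without duplicates."""
    tags = []
    for path in changed_paths:
        for pattern, rule_tags in TAG_RULES:
            if fnmatch(path, pattern):
                for tag in rule_tags:
                    if tag not in tags:
                        tags.append(tag)
    return tags
```

A change that touches only documentation would carry no tags under these rules, while one that touches both directories would carry all three.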

Devin — Autonomous Agent + Human PR Review

Devin’s compliance posture is consistent with its single-autonomous-agent shape. The agent itself records the prompt (the user’s task), the model it ran on, the actions it took in its hosted environment, and the resulting PR. That is real evidence on the authoring side. What it does not attempt is multi-stage review independence — there is no separate “security agent” reviewing Devin’s output, no separate “QA agent” extending its tests. Those reviews are expected to happen in whatever pipeline the buyer’s PR process already runs.

For an enterprise that already has a mature SAST + DAST + SCA + coverage stack on PRs, that may be entirely fine: Devin slots in as the author, and the existing pipeline supplies the gates. For an enterprise whose existing pipeline amounts to “tests pass = ship,” dropping Devin in does not magically add the missing review independence. Cognition’s Devin docs describe what the agent captures; the gate side is intentionally out of scope.

The chain-of-custody question on a Devin PR is therefore: “what does our PR pipeline check?” If the answer is robust, the audit story works. If the answer is thin, adding an autonomous coding agent on top makes the volume problem worse without adding review capacity.
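The question "what does our PR pipeline check?" can be answered mechanically by mapping the checks a pipeline requires onto the four gates. The sketch below assumes a hypothetical list of check names and a hypothetical check-to-gate mapping; real pipelines will name and group their checks differently.

```python
# Gate names follow the article; the check names and their mapping are hypothetical.
GATES = ["style", "security", "coverage", "compliance"]

CHECK_TO_GATE = {
    "lint": "style",
    "typecheck": "style",
    "sast": "security",
    "secret-scan": "security",
    "dependency-audit": "security",
    "unit-tests-with-coverage": "coverage",
    "control-mapping": "compliance",
}


def uncovered_gates(required_checks):
    """Return the gates that no required PR check maps to, in gate order."""
    covered = {CHECK_TO_GATE[c] for c in required_checks if c in CHECK_TO_GATE}
    return [gate for gate in GATES if gate not in covered]
```

A pipeline that requires only `lint` and a plain `unit-tests` job would leave security, coverage, and compliance uncovered, which is the thin-pipeline case the paragraph above warns about.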

Linear — AI Around the Issue, Not the Code

Linear’s AI features sit on top of the issue tracker. Their AI summaries and triage help PMs and engineers move work faster, but the AI does not author or review the underlying code. That makes Linear’s audit story straightforward: it captures the project-management trail (who filed the issue, who triaged it, and which AI-assisted summaries fed the discussion). The code-side evidence is supplied entirely by source-control + CI/CD + whatever review pipeline the team has built around the repo.

This is not a weakness so much as a different scope. A team using Linear-with-AI for triage and a separately-governed code pipeline for build/review is in a coherent posture — Linear is honest about what it does and doesn’t claim.

Gate-by-Gate Comparison

The cells below describe what is first-class in each product (out of the box, captured automatically), what is bolt-on (possible but requires the buyer to assemble), and what is out of scope (the product does not own this concern at all).

| Gate | VibeFlow | Devin | Linear |
|---|---|---|---|
| Gate 1: Style / correctness (lint, format, type) | Bolt-on (uses repo linter) | Bolt-on (uses repo linter) | Out of scope |
| Gate 2: Security scan (SAST + secrets + deps) | First-class: security_review stage with structured artifact | Bolt-on (CI on the resulting PR) | Out of scope |
| Gate 3: Test coverage / mutation | First-class: qa_verified stage; can extend tests | Bolt-on (CI on the resulting PR) | Out of scope |
| Gate 4: Compliance verification | First-class: control tagging + audit record per todo | Bolt-on (buyer assembles around the PR) | Out of scope |

A gate marked first-class produces an artifact in the platform’s own audit record. A bolt-on gate exists only to the extent the buyer’s surrounding pipeline produces it. Out of scope means the product does not address the gate at all (and is not pretending to).

SOC 2 + NIST AI RMF Mapping

Two control frameworks dominate enterprise audit conversations on AI-built code. Below is a concrete mapping per platform — for the items the platform addresses, the column lists the platform’s own surface; for the rest, the cell reads “manual / external.”

| Control / subcategory | VibeFlow | Devin | Linear |
|---|---|---|---|
| SOC 2 CC6.1 (logical access on code paths) | Per-todo agent identity + scope | Manual / external | Manual / external |
| SOC 2 CC7.2 (system monitoring) | Execution log + heartbeat per session | Agent activity log | Issue activity log |
| SOC 2 CC8.1 (change management) | Status flow + commit attribution | PR + commit metadata | Issue ↔ PR linkage |
| SOC 2 CC9.2 (vendor / model risk) | Model version captured per work item | Model version captured | Manual / external |
| NIST AI RMF Govern (1.6: roles) | Persona-typed agent roles | Single agent role | N/A (PM scope) |
| NIST AI RMF Manage (4.1: incident response) | Stuck-detector + prompt escalation | Manual / external | Manual / external |
| NIST AI RMF Measure (2.7: post-deployment monitoring) | Post-merge gate (qa_verified) | Manual / external | Manual / external |

The pattern is the same as the gate table: VibeFlow’s cells are filled because the platform’s structure produces those artifacts; Devin and Linear’s cells are filled to the extent the buyer’s surrounding pipeline supplies them. Neither is dishonest — but the buyer’s effort is asymmetric, and a procurement decision should reflect that.
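The asymmetry in buyer effort can be made concrete by counting the "manual / external" cells per platform. The sketch below transcribes the control-mapping table above into a dictionary; the encoding (`True`, `False`, `None`) and the function name are assumptions chosen for illustration.

```python
# Cells transcribed from the control-mapping table above.
# True  = the platform's own surface produces the evidence
# False = "manual / external"
# None  = outside the product's scope (N/A)
CONTROL_COVERAGE = {
    "SOC 2 CC6.1": {"VibeFlow": True, "Devin": False, "Linear": False},
    "SOC 2 CC7.2": {"VibeFlow": True, "Devin": True, "Linear": True},
    "SOC 2 CC8.1": {"VibeFlow": True, "Devin": True, "Linear": True},
    "SOC 2 CC9.2": {"VibeFlow": True, "Devin": True, "Linear": False},
    "NIST AI RMF Govern 1.6": {"VibeFlow": True, "Devin": True, "Linear": None},
    "NIST AI RMF Manage 4.1": {"VibeFlow": True, "Devin": False, "Linear": False},
    "NIST AI RMF Measure 2.7": {"VibeFlow": True, "Devin": False, "Linear": False},
}


def buyer_assembled(platform):
    """List the controls whose evidence the buyer must supply for this platform."""
    return [
        control
        for control, row in CONTROL_COVERAGE.items()
        if row[platform] is False
    ]
```

The resulting list is a rough starting point for scoping assembly work during procurement, not a measure of how hard each control is to satisfy.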

The Buy-vs-Assemble Trade-off

A useful framing for picking between these is to ask: how much of the surrounding evidence pipeline does the buyer already have?

If the buyer has a mature internal pipeline (SAST, DAST, coverage, mutation, audit aggregation, control mapping), Devin or Linear plus that pipeline is a coherent stack. The AI agent or PM augmentation slots into existing infrastructure, and the existing infrastructure supplies the evidence.

If the buyer does not have that pipeline, or wants to deprecate parts of it because they would be redundant with platform-level gates, VibeFlow’s bet of “the platform IS the pipeline” is the option that fits. The trade-off is centralisation: more is owned by one product. The win is that the audit story is one product’s story, not seven.

For more on the threat-modelling and CISO-side framing of these decisions, see The CISO’s Guide to AI Agent Security and Enterprise AI Risk Management — Beyond Checkbox Compliance. Compliance leads can read the framework-aligned framing on /compliance/soc-2, /compliance/nist-ai-rmf, and /compliance/iso-27001, and CISOs at /for/cisos.

Compliance Posture Is the Choice

Pick the platform whose compliance evidence model matches what your organisation actually wants to own. If the answer is “as little as possible — give us the evidence record as a side-effect,” VibeFlow’s first-class gate stages are the right shape. If the answer is “we already have a robust evidence pipeline; just plug an autonomous agent or PM augmentation into it,” Devin or Linear with the existing pipeline is coherent. The wrong answer is to pick a platform that does not match the assembly cost the buyer is willing to absorb — that is how AI initiatives stall during the audit phase and not before it.

Next in this series: integration depth and branch management, where the daily-flow trade-offs land. Or: start free with VibeFlow and see the gate-pipeline shape running on your own repo.

Written by

AXIOM Team