Quality Gates for AI-Generated Code: Automated Review and Compliance

AI-generated code slips through human code review at alarming rates. A four-gate pipeline — lint, security, coverage, and compliance — closes the loop before merge.

A pull request lands in your queue. The diff is well-formatted, the variable names read like the rest of the codebase, the function signatures look familiar, and the description is crisp. You approve it in three minutes. The author was a coding agent. The bug it just introduced will surface in production six weeks from now.

This is the failure mode that human-only code review was never designed to catch. AI-generated code is fluent by construction: it mimics your project’s style because the model pattern-matches the surrounding code, but fluency is not correctness. Stanford researchers found that developers using AI assistants “wrote significantly less secure code” than those without, and rated their own insecure output as more secure (Perry et al., 2023). GitClear’s analysis of millions of GitHub commits shows “downward pressure on code quality” as Copilot adoption climbs. The volume problem is real, but the deeper problem is that the artifact looks right.

Quality gates are how you stop trusting appearances and start trusting evidence.

Why Human Review Alone Is Insufficient

Code review evolved as a discipline when humans wrote every line. The reviewer’s job was to catch design drift, missed edge cases, and the occasional copy-paste error. The implicit assumption was that the author had thought about the change for hours, so the reviewer’s job was to add a second pair of eyes — not to recompute the work from scratch.

AI-generated code breaks every part of that assumption.

- The author did not think about the change for hours. The agent generated it in seconds. Hidden assumptions, edge cases, and threat models were not enumerated — they were sampled from a distribution.

- Style is no longer a quality signal. A clean diff used to imply a careful author. Now it implies a competent autocomplete.

- Volume scales with no friction. A team of ten engineers using coding agents can submit the daily diff volume of a team of fifty. Review fatigue is a well-documented failure mode in human review pipelines, and it worsens as throughput rises.

- Reviewers default to trust. When the diff compiles, passes existing tests, and looks idiomatic, the path of least resistance is approval. This is the same dynamic that produces the audit and chain-of-custody gaps explored in The Hidden Risks of Vibecoding.

The fix is not to demand more careful humans. It is to push every claim a reviewer would otherwise have to take on faith into a machine-checkable gate that runs before the human ever opens the diff.

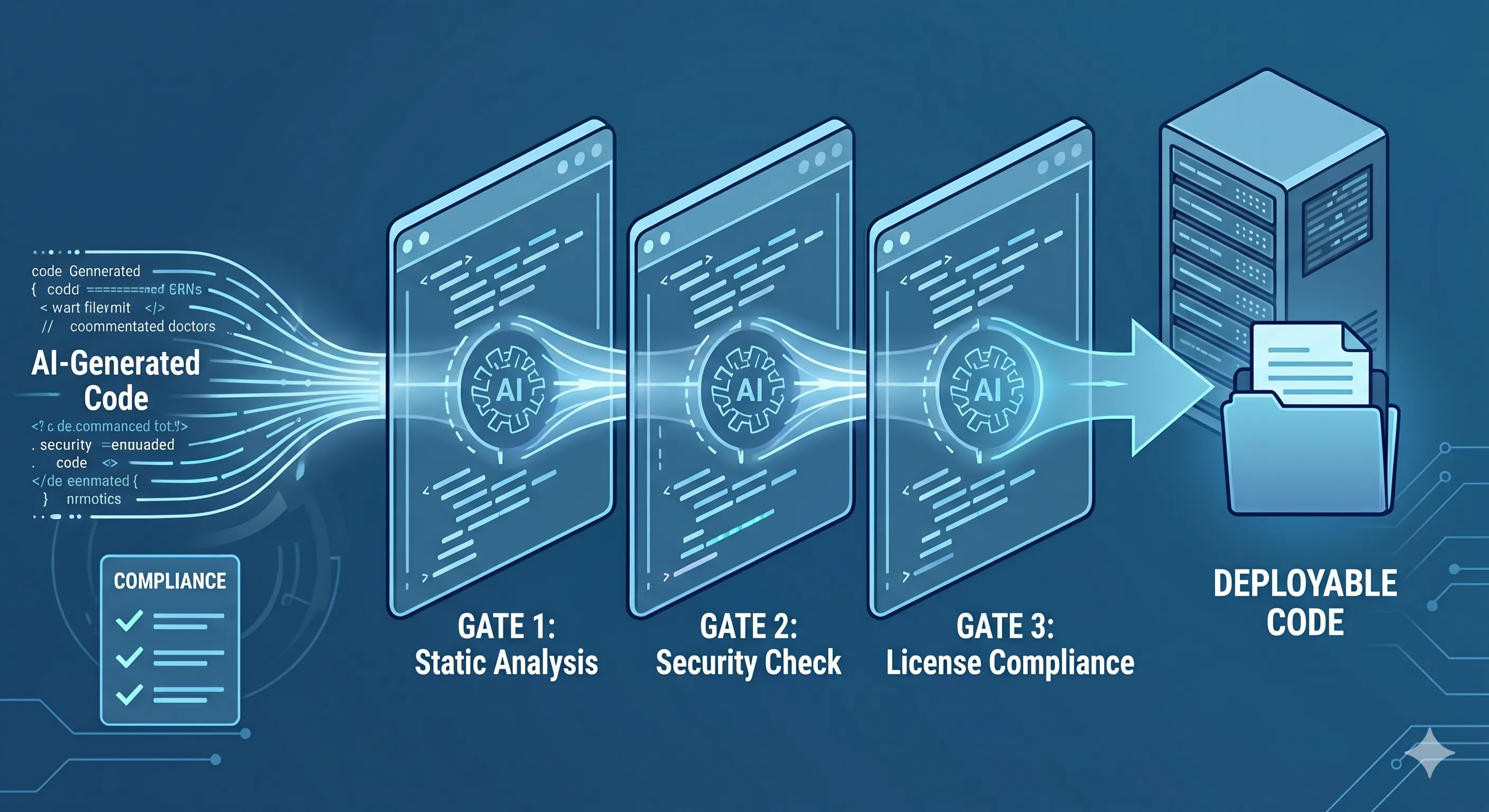

The Four Quality Gates Every AI-Generated Commit Needs

A quality-gate pipeline for AI-generated code has four mandatory checkpoints. Each one converts a category of human-review burden into deterministic CI evidence.

flowchart LR

A[Agent commit] --> G1[Gate 1<br/>Style & Correctness]

G1 -->|pass| G2[Gate 2<br/>Security Scan]

G1 -->|fail| R[Reject /<br/>Auto-fix loop]

G2 -->|pass| G3[Gate 3<br/>Test Coverage]

G2 -->|fail| R

G3 -->|pass| G4[Gate 4<br/>Compliance Verify]

G3 -->|fail| R

G4 -->|pass| H[Human review<br/>+ merge]

G4 -->|fail| R

The gates are ordered by cost. Lint and type checks run in seconds; compliance verification touches policy stores and may take minutes. Failing fast in the cheap gates keeps the pipeline responsive even as the slow gates deepen.

| Concern | Human-only review | Four-gate pipeline |
|---|---|---|
| Style drift | Reviewer reads diff line-by-line | Linter fails build |
| Vulnerable patterns | Reviewer recognizes some classes | SAST scans 100% of diff |
| Untested branches | Reviewer hopes tests exist | Coverage gate enforces threshold |
| Audit-trail completeness | Reviewer assumes it’s there | Policy gate verifies |

Gate 1: Automated Style and Correctness

The first gate catches the cheapest problems: syntax errors, type errors, formatting drift, and rule violations. These are the failures that take seconds to detect and minutes to debate in a PR thread.

Run, in order, on every diff produced by an agent:

- Formatter: Prettier, Biome, `gofmt`, `rustfmt`, `black`. Format-on-commit so style is never a reviewer concern.

- Linter with project rules: ESLint, Ruff, `golangci-lint`. Treat any new warning as a build failure. AI agents will happily introduce `eslint-disable-next-line` comments to silence rules; configure the linter to fail on those too.

- Type checker in strict mode: TypeScript’s `strict: true`, `mypy --strict`, Go’s compiler. Strict mode catches the implicit-any and untyped-dict patterns that LLMs default to when they aren’t sure of a contract.

- Build: the change must produce a clean build with no new warnings.

The discipline that makes this gate effective for AI-generated code is zero-tolerance configuration. Humans can earn warning suppressions by explaining the trade-off in review. Agents cannot. Every suppression in an AI-generated diff should require a human-authored override commit before the gate passes.

Gate 2: Security Scanning Adapted for AI-Generated Patterns

Generic SAST is necessary but not sufficient. AI agents produce a recognizable distribution of weaknesses — string-concatenated SQL when the surrounding code uses parameterized queries, hardcoded secrets that “look” like placeholders, prompt-injection-vulnerable system prompts, and overly permissive error handling. The OWASP Top 10 for LLM Applications is now the canonical reference for the AI-specific failure classes.

Stack the scanners:

- SAST: Semgrep and CodeQL for taint analysis on the changed code. Both support custom rules; write rules for your project’s authentication boundary so AI-generated handlers can’t quietly bypass it.

- Dependency scan: OSV-Scanner or equivalent on every `package.json` / `go.mod` / `requirements.txt` diff. Agents will pull in transitive dependencies to satisfy a prompt; the gate must enforce your dependency policy.

- Secret detection: Gitleaks or TruffleHog on the staged diff. Block before commit, not after push.

- Prompt and configuration scanning: for any file containing system prompts or agent configuration, scan for direct prompt injection markers and untrusted-input concatenation.

The threat-modeling lens for this gate is laid out in detail in our CISO’s Guide to AI Agent Security. The goal here is not zero risk; it is evidence of scan, attached to the commit, that satisfies your control framework.
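One way to produce "evidence of scan" is a small record that lists which scanners ran against a commit and what they found. The sketch below assumes each scanner's output has already been reduced to a count of blocking findings; `ScanResult`, `build_scan_evidence`, and the field names are hypothetical, not a format any scanner or control framework prescribes.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ScanResult:
    scanner: str   # e.g. "semgrep" or "gitleaks"
    version: str   # scanner version as reported by the tool
    findings: int  # count of findings at or above the blocking severity


def build_scan_evidence(commit_sha: str, results: list[ScanResult]) -> dict:
    """Assemble a record of which scanners ran against a commit, plus a content digest."""
    body = {
        "commit": commit_sha,
        # Sort by scanner name so the record does not depend on execution order.
        "scans": [asdict(r) for r in sorted(results, key=lambda r: r.scanner)],
        # An empty scan list never passes: no scan means no evidence.
        "passed": len(results) > 0 and all(r.findings == 0 for r in results),
    }
    # Canonical JSON (sorted keys, no extra whitespace) makes the digest reproducible.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return {**body, "digest": hashlib.sha256(canonical.encode("utf-8")).hexdigest()}
```

The digest only detects later edits if it is stored or signed somewhere the author of the change cannot rewrite, for example as a CI artifact or a commit status.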

Gate 3: Test Coverage Enforcement

A passing test suite is not the same as a tested change. AI agents are very good at writing the test that proves their own code works on the inputs they thought of. They are notoriously weak at adversarial cases, boundary conditions, and concurrency hazards.

Three sub-gates close that loop.

- Diff coverage threshold. Don’t require 80% line coverage on the whole repo; require it on the changed lines. Tools like `diff-cover` make this trivial. A PR whose changed lines fall below the threshold fails the gate.

- Mutation testing on critical modules. pitest for the JVM, Stryker for JavaScript, `mutmut` for Python. Mutation testing measures whether tests would catch a bug, not whether the line was executed. For AI-generated tests, this is the difference between a real assertion and a tautology.

- Property-based tests for value-handling code. Tools like Hypothesis and fast-check generate adversarial inputs. Require at least one property test for any function that parses, validates, or transforms external data.
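The arithmetic behind a diff-coverage gate is simple. The sketch below is not how `diff-cover` is implemented; it assumes the changed and covered line numbers have already been extracted per file, and the function names are illustrative.

```python
def diff_coverage(changed: dict[str, set[int]], covered: dict[str, set[int]]) -> float:
    """Fraction of changed lines that were executed by the test suite."""
    total = sum(len(lines) for lines in changed.values())
    if total == 0:
        return 1.0  # nothing measurable changed, so there is nothing to fail on
    hit = sum(len(lines & covered.get(path, set())) for path, lines in changed.items())
    return hit / total


def coverage_gate(
    changed: dict[str, set[int]],
    covered: dict[str, set[int]],
    threshold: float = 0.80,
) -> bool:
    """Pass only when coverage of the changed lines meets the threshold."""
    return diff_coverage(changed, covered) >= threshold
```

Because the denominator is the diff rather than the repository, a poorly tested legacy module does not block a well-tested change, and a well-tested repository cannot hide an untested one.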

The bar is not “tests exist.” The bar is “tests would have caught a regression.” Mutation testing is what makes that statement falsifiable.
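A hand-rolled illustration of what a mutation tool automates, assuming a toy function and a single mutant; real tools such as pitest, Stryker, or `mutmut` generate and run the mutants for you. All names here are hypothetical.

```python
def is_adult(age: int) -> bool:
    return age >= 18


def is_adult_mutant(age: int) -> bool:
    # Mutant: the boundary operator ">=" was changed to ">".
    return age > 18


def weak_test(fn) -> bool:
    """Executes the line but only checks inputs far from the boundary."""
    return fn(30) is True and fn(5) is False


def boundary_test(fn) -> bool:
    """Checks the values on either side of the boundary."""
    return fn(18) is True and fn(17) is False


def mutant_killed(test, original, mutant) -> bool:
    """A mutant is killed when the test passes on the original and fails on the mutant."""
    return test(original) and not test(mutant)
```

Both tests give the function full line coverage. Only `boundary_test` kills the mutant, which is the signal a coverage number alone cannot provide.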

Gate 4: Compliance Verification

The first three gates verify the code. The fourth gate verifies the record of the code — the artifact a future auditor will read. This is the gate that AI-generated commits skip most often, because the agent does not know what your control framework requires.

Compliance verification is concrete:

- Framework tagging: every change must carry tags that map it to control families. A change to authentication code is tagged against SOC 2 CC6; a change to a logging pipeline is tagged against the NIST SP 800-53 AU (Audit and Accountability) controls and the NIST AI RMF Measure function. Untagged changes to control-relevant code fail the gate.

- Audit-trail completeness: the gate verifies that the PR carries the prompt that produced the diff, the agent identity, the model version, and the human approver. Missing fields fail the gate. The argument for collecting all of this is laid out in Building an AI Audit Trail.

- Policy adherence checks: codify the rules an auditor will ask about. “PII never leaves the EU region.” “No new external network calls without an approved threat model.” “All cryptographic primitives use the approved library.” Express these as policy-as-code (OPA / Conftest / Cedar) and make the gate fail on violation.

- Regulated-region overlays: if your product ships in jurisdictions covered by the EU AI Act or sectoral US frameworks, the gate adds the relevant overlay before approving the merge.

The output of this gate is a control-framework-aligned audit record that downstream attestation tooling can consume directly — see /compliance/soc-2 and /compliance/nist-ai-rmf for the mappings Axiom maintains.
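The tagging and audit-trail checks reduce to a few lines of logic. This is a sketch in plain Python rather than in a policy language such as Rego or Cedar; the field names, tag strings, and path patterns are assumptions made for illustration, not a schema the frameworks define.

```python
from fnmatch import fnmatch

# Hypothetical audit fields, mirroring the four items the gate must find on the PR.
REQUIRED_FIELDS = ("prompt", "agent_id", "model_version", "human_approver")

# Hypothetical mapping from path patterns to the control tag a change must carry.
# fnmatch's "*" also matches "/" so each pattern covers nested directories.
CONTROL_PATHS = {
    "src/auth/*": "SOC2:CC6",
    "src/logging/*": "NIST-AI-RMF:MEASURE",
}


def verify_compliance(record: dict, changed_files: list[str]) -> list[str]:
    """Return a list of violations; an empty list means the gate passes."""
    violations = []
    for field in REQUIRED_FIELDS:
        # Treat absent, None, and whitespace-only values as missing.
        if not str(record.get(field) or "").strip():
            violations.append(f"missing audit field: {field}")
    tags = set(record.get("control_tags", []))
    for path in changed_files:
        for pattern, required_tag in CONTROL_PATHS.items():
            if fnmatch(path, pattern) and required_tag not in tags:
                violations.append(f"{path}: missing control tag {required_tag}")
    return violations
```

Returning the full list of violations, rather than stopping at the first, lets the agent or the author fix everything in one pass.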

Implementing Gates Without Slowing the Team Down

The most common objection to a four-gate pipeline is latency. If every PR waits ten minutes for compliance verification, throughput collapses and developers route around the gate. Three patterns keep the gate fast.

sequenceDiagram

participant A as Agent

participant CI as CI Runner

participant G1 as Gate 1 (lint)

participant G2 as Gate 2 (sec)

participant G3 as Gate 3 (cov)

participant G4 as Gate 4 (policy)

A->>CI: push commit

CI->>G1: run (10s)

par Parallel slow gates

CI->>G2: run (60s)

CI->>G3: run (90s)

CI->>G4: run (45s)

end

G2-->>CI: ok

G3-->>CI: ok

G4-->>CI: ok

CI-->>A: status: pass

- Parallelize the slow gates. Lint runs first because it’s a cheap correctness filter. Once it passes, the security, coverage, and compliance gates run in parallel, so total wall time is the lint gate plus the slowest parallel gate, not the sum of all four. With the timings in the diagram, that is roughly 100 seconds instead of 205.

- Risk-tier the gate intensity. A tweak to a marketing landing page does not need mutation testing. A change to authentication code does. Use a path-based ruleset that scales gate intensity with blast radius — engineering leaders can read more on the staffing implications at /for/engineering-leaders.

- Make the gate’s verdict actionable. When Gate 2 fails, don’t just say “security scan failed.” Show the specific finding, the line, and a one-line remediation. Coding agents can be looped back on a structured failure message and will fix most issues without human involvement, restoring throughput.
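The first two patterns can be combined in one small runner. This is a sketch under stated assumptions: each gate is a zero-argument callable that returns pass or fail, and the tier table, patterns, and gate names are hypothetical examples of a path-based ruleset.

```python
from concurrent.futures import ThreadPoolExecutor
from fnmatch import fnmatch
from typing import Callable

GateFn = Callable[[], bool]

# Hypothetical tiers, most specific first; the first matching pattern wins per file.
RISK_TIERS = [
    ("src/auth/*", {"security", "coverage", "mutation", "compliance"}),
    ("src/*", {"security", "coverage", "compliance"}),
    ("*", {"security"}),
]


def gates_for(changed_files: list[str]) -> set[str]:
    """Union of the gates required by the first matching tier of each changed file."""
    required: set[str] = set()
    for path in changed_files:
        for pattern, gates in RISK_TIERS:
            if fnmatch(path, pattern):
                required |= gates
                break
    return required


def run_pipeline(lint: GateFn, slow_gates: dict[str, GateFn], changed_files: list[str]) -> dict[str, bool]:
    """Run lint first; if it passes, run the selected slow gates concurrently."""
    if not lint():
        return {"lint": False}  # fail fast: no slow gate is started
    wanted = gates_for(changed_files)
    selected = {name: fn for name, fn in slow_gates.items() if name in wanted}
    results = {"lint": True}
    with ThreadPoolExecutor(max_workers=max(1, len(selected))) as pool:
        futures = {name: pool.submit(fn) for name, fn in selected.items()}
        for name, future in futures.items():
            results[name] = future.result()
    return results
```

In most CI systems the same shape is expressed as job dependencies rather than threads; the point of the sketch is the ordering and the path-based selection, not the executor.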

The throughput failure mode is not “the gate is too strict.” It is “the gate’s failure messages are not actionable.” Treat that as a first-class engineering problem.
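An actionable verdict is mostly a matter of structure. The sketch below assumes findings from any gate can be normalized into one shape; the `Finding` fields and the JSON layout are hypothetical, not a standard format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Finding:
    gate: str         # which gate produced the finding, e.g. "security"
    rule_id: str      # identifier of the rule that fired
    path: str         # file containing the problem
    line: int         # line number in the post-change file
    message: str      # what is wrong
    remediation: str  # one-line suggested fix


def render_failure(findings: list[Finding]) -> str:
    """Serialize findings as JSON that an agent or a human can act on directly."""
    payload = {
        "status": "fail",
        # Sort by location so repeated runs produce a stable, diffable message.
        "findings": [asdict(f) for f in sorted(findings, key=lambda f: (f.path, f.line))],
    }
    return json.dumps(payload, indent=2)
```

The same payload can be posted as a PR comment for the reviewer and fed back to the coding agent as the input to its fix attempt.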

How VibeFlow Implements the Gate Pipeline

VibeFlow ships these four gates as the default workflow for any change produced by a coding agent. Every work item moves through implementing → done → security_review → qa_verified — the security and QA stages are the explicit, named instances of Gates 2 and 4. The audit record is captured automatically: which agent produced the diff, which model, which prompt, which human approved, which controls were touched. The control-framework mappings drop out of that record at attestation time without a separate evidence-collection effort.

You can read more about the platform on the VibeFlow product page, and see how the Axiom Studio team uses gates internally on /for/engineering-leaders and /for/cisos.

The Bar for Shipping AI-Generated Code

Human-only review was the right discipline for human-only code. AI-generated code is faster, more fluent, and more confident-sounding than the artifact code review evolved to evaluate — and that is exactly why it slips through. A four-gate pipeline is not a tax on agent productivity; it is the precondition for letting agents merge at all. The teams that adopt it ship faster and defend their audit posture, because every claim a reviewer used to take on faith is now backed by machine evidence.

Start with Gate 1 this sprint. Add Gate 2 next. Build the audit-trail record incrementally. By the time the regulators or the auditors arrive, the evidence is already there.

Ready to instrument the pipeline? Try VibeFlow — or talk to us about wiring quality gates into your existing CI from the product page.

Written by

AXIOM Team