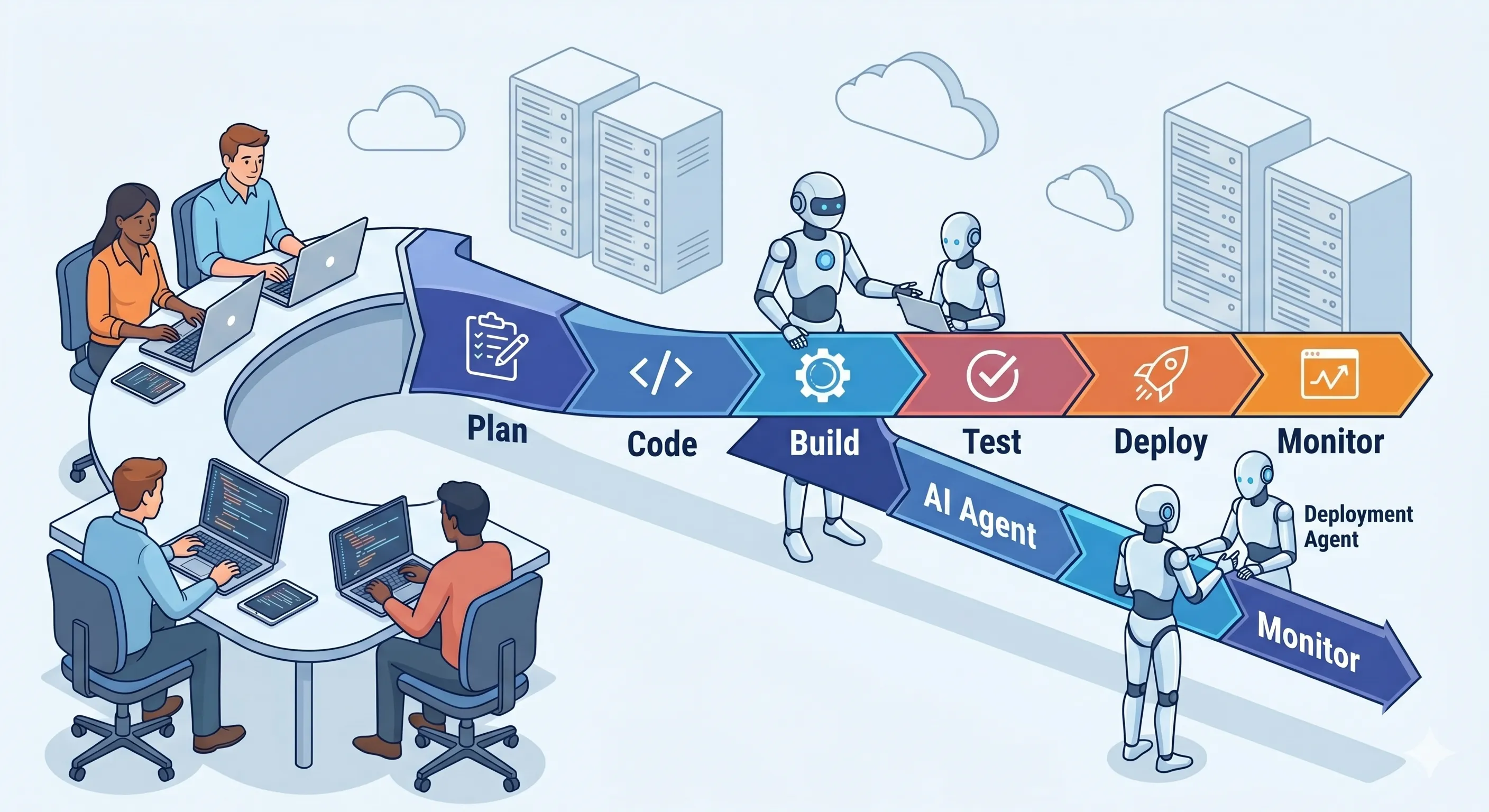

Integrating AI Agents into Your Existing DevOps Pipeline

You don't need to replace GitHub Actions, Jenkins, or your Jira workflow to adopt coding agents. Here's where agents plug in — and where the governance layer has to live.

The fastest way to slow down an AI agent rollout is to pitch it as a replacement for your existing DevOps pipeline. Engineers who have spent years tuning GitHub Actions runners, Jenkins shared libraries, or GitLab CI templates do not want to rebuild that work to onboard a coding agent — and they shouldn’t have to. Agents are most effective when they extend the pipeline you already trust, plugging into stages that already exist and emitting the same kinds of artifacts your existing tools already consume.

This is a practical guide to where agents fit, the three highest-leverage integration patterns, and the one piece you cannot skip: the governance layer that sits between your agents and your pipeline.

The Integration Landscape

Your pipeline already has natural integration points. Agents are not a new shape of automation; they are a new participant at points where automation already exists.

flowchart LR
A[Issue tracker<br/>Jira / Linear] --> B[Local commit<br/>+ pre-commit hooks]
B --> C[Push / PR<br/>GitHub / GitLab]
C --> D[CI run<br/>Actions / GitLab CI / Jenkins]
D --> E[Code review<br/>+ approvals]
E --> F[Deploy gates<br/>+ progressive rollout]
F --> G[Production<br/>+ observability]
A2[Implementation Agent] -.-> B
A3[Review Agent] -.-> E
A4[Test-Gen Agent] -.-> D
A5[Release Agent] -.-> F

| Stage | Agent integration | Existing tool you keep |
|---|---|---|
| Issue → branch | Implementation agent picks up labeled tickets | Jira, Linear, GitHub Issues |
| Pre-commit | Review/lint agent on staged diff | husky, pre-commit, lefthook |
| PR opened | Code-review agent posts findings | GitHub PR API, GitLab MR API |
| CI run | Test-gen agent expands coverage | GitHub Actions, GitLab CI, Jenkins, CircleCI |
| Deploy gate | Risk-scoring agent annotates release | Argo Rollouts, Spinnaker, Octopus |
| Post-deploy | Incident-summary agent on alert fan-in | PagerDuty, Datadog, Sentry |

The pattern is the same in every row: the humans and the primary tool keep their jobs. The agent runs in parallel, posts a typed artifact (a comment, a check, a label, a JSON file), and the existing pipeline consumes it. This is the “extend, don’t replace” stance from the GitHub Actions docs on third-party tools — and it’s the only way to onboard agents without forcing a platform rewrite.

Pattern 1 — Agent-Assisted PR Review

The highest-leverage integration is the one most teams reach for first: an AI agent reviews every PR alongside human reviewers and posts structured findings.

The plumbing is concrete. A GitHub Action triggers on pull_request events and invokes the review agent over your model gateway; the agent reads the diff, posts inline comments via the GitHub Review API, and emits a check-run with a pass/fail status. GitLab and Bitbucket equivalents exist via their own APIs.
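To make the plumbing concrete, here is a minimal sketch of the step between "the agent has findings" and "GitHub shows inline comments". The `Finding` shape and both function names are assumptions for illustration, not part of any agent's real output format. The payload fields (`commit_id`, `event`, `body`, `comments` with `path`, `line`, `side`) follow GitHub's REST endpoint for creating a pull request review, and the conclusion strings are values the Checks API accepts. No network call is made here; the dict is what your Action would send.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One agent finding pinned to a line in the diff (hypothetical shape)."""
    path: str
    line: int
    rule: str
    message: str
    severity: str  # "info", "warning", or "error"


def build_review_payload(findings: list[Finding], commit_sha: str) -> dict:
    """Shape findings into a request body for a pull request review."""
    comments = [
        {
            "path": f.path,
            "line": f.line,
            "side": "RIGHT",  # comment on the new version of the file
            "body": f"[{f.rule}] {f.message}",
        }
        for f in findings
    ]
    return {
        "commit_id": commit_sha,
        "event": "COMMENT",  # plain comments; the agent never approves or blocks here
        "body": f"Agent review: {len(comments)} inline finding(s).",
        "comments": comments,
    }


def check_conclusion(findings: list[Finding]) -> str:
    """Map findings to a check-run conclusion: fail only on error-level findings."""
    has_error = any(f.severity == "error" for f in findings)
    return "failure" if has_error else "success"
```

Keeping the review event at `COMMENT` and reporting pass/fail through a separate check-run means branch protection, not the agent, decides whether that verdict blocks a merge.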

The discipline that makes this pattern useful rather than annoying:

- Comment on lines, not on PRs. A 300-word general critique is noise; an inline comment on line 47 with a one-line remediation is signal.

- Treat the agent’s check as advisory by default. Until the false-positive rate is below ~10% on your codebase, do not block merges on the agent’s verdict. Track agreement rates between agent and human and ratchet the gate up.

- Suppress repeat findings. If the agent flagged a pattern on PR #4001 and the human accepted “won’t fix” with a reason, the agent should not flag the same pattern on PR #4002. Persist a rationale store keyed by file path and rule.
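The last two rules can be sketched in a few lines. This is an in-memory illustration under stated assumptions: `RationaleStore`, `filter_findings`, and `gate_mode` are hypothetical names, findings are plain dicts with `path` and `rule` keys, and "false positive" is approximated as "a human dismissed the finding". A real store would be persisted somewhere durable.

```python
from dataclasses import dataclass, field


@dataclass
class RationaleStore:
    """Remembers findings a human dismissed, keyed by (file path, rule)."""
    _reasons: dict[tuple[str, str], str] = field(default_factory=dict)

    def accept_wont_fix(self, path: str, rule: str, reason: str) -> None:
        # Record the human's reason so later PRs do not re-raise the finding.
        self._reasons[(path, rule)] = reason

    def is_suppressed(self, path: str, rule: str) -> bool:
        return (path, rule) in self._reasons


def filter_findings(findings: list[dict], store: RationaleStore) -> list[dict]:
    """Drop findings whose (path, rule) pair was already dismissed."""
    return [f for f in findings if not store.is_suppressed(f["path"], f["rule"])]


def gate_mode(dismissed: int, total: int, max_fp_rate: float = 0.10) -> str:
    """Return 'blocking' only once the observed dismissal rate is under the limit."""
    if total == 0:
        return "advisory"  # no evidence yet, so stay advisory
    fp_rate = dismissed / total
    return "blocking" if fp_rate < max_fp_rate else "advisory"
```

For example, `gate_mode(5, 100)` returns `"blocking"` while `gate_mode(10, 100)` stays `"advisory"`, which matches the "below ~10%" threshold above.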

For why fluent diffs are dangerous and which finding classes matter most, see Quality Gates for AI-Generated Code.

Pattern 2 — Agent-Powered Implementation

Agents that pick up tickets and produce PRs require deeper integration but deliver the largest gain in velocity.

Wire it from the issue tracker side: a label like agent-ready on a Jira issue triggers a workflow that gives an implementation agent the issue body, the relevant context, and a target branch. The agent produces commits, pushes a draft PR, and assigns a human as primary reviewer. The agent’s status reads back to the ticket via the same Jira webhooks your existing automation already uses.

The two non-obvious failure modes:

- The ticket is the prompt. If the issue description is sparse, the agent’s output will be sparse — and human reviewers will spend their review budget extracting requirements they should have read in the description. Invest in ticket templates as much as in the agent.

- Don’t merge from agents directly. Even when the agent is right, a human in the loop on merge maintains the audit chain that compliance frameworks require. The agent gets to push; the human gets to merge.
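Both failure modes can be guarded in the intake step, before the agent ever runs. The sketch below is one possible shape, not a prescribed one: the required section headings, the 200-character minimum, the `agent/<issue-key>` branch naming, and the `AgentTask` fields are all assumptions chosen for illustration. Only the `agent-ready` label comes from the workflow described above.

```python
from dataclasses import dataclass
from typing import Optional

AGENT_LABEL = "agent-ready"
REQUIRED_SECTIONS = ("Acceptance criteria", "Context")  # hypothetical template headings
MIN_BODY_CHARS = 200  # hypothetical threshold for "not sparse"


@dataclass(frozen=True)
class AgentTask:
    issue_key: str
    prompt: str                     # the ticket body is the prompt
    target_branch: str
    draft: bool = True              # the agent opens draft PRs only
    agent_may_merge: bool = False   # merging stays with a human


def missing_sections(body: str) -> list[str]:
    """List template sections that the ticket body does not mention."""
    lowered = body.lower()
    return [s for s in REQUIRED_SECTIONS if s.lower() not in lowered]


def plan_task(issue_key: str, labels: list[str], body: str) -> Optional[AgentTask]:
    """Return a task for the agent, or None when the ticket should stay with a human."""
    if AGENT_LABEL not in labels:
        return None
    if len(body) < MIN_BODY_CHARS or missing_sections(body):
        return None  # sparse ticket: fix the description before spending tokens
    return AgentTask(issue_key, body, f"agent/{issue_key.lower()}")
```

Rejecting sparse tickets at intake is cheaper than discovering the gap during review, and hard-coding `agent_may_merge=False` keeps the push/merge split explicit in the task itself.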

This pattern composes naturally with the workflow shape we walked through in Agent Workflows in Enterprise Software Development — the implementation agent is one role in a larger graph that includes a separate review agent and security agent.

Pattern 3 — Agent-Driven Test Generation

Test generation is the integration with the lowest blast radius and one of the most measurable ROIs. The agent runs as a CI step that examines the diff, generates additional tests for uncovered branches, and either commits them back or posts them as a suggestion.

The CI shape: a job named expand-coverage runs after your existing test job; it computes diff coverage with a tool like diff-cover, feeds the uncovered ranges to the agent, and the agent emits test files. Run those tests; if they pass and add coverage, commit them. If they fail or duplicate existing coverage, discard.

Two invariants make this pattern safe:

- Generated tests must increase mutation score, not just line count. Tools like Stryker and pitest tell you whether the generated tests would actually catch a regression. Require a minimum mutation-score delta or reject the agent’s output.

- Generated tests are second-class until reviewed. Mark them with a directory or tag (tests/_generated/) so a human can audit them before they become load-bearing.
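The accept-or-discard decision and both invariants fit in one small function. This is a sketch under assumptions: `GenResult`, `decide`, and `generated_path` are hypothetical names, the coverage and mutation numbers are expected to come from whatever tools you already run (diff-cover, Stryker, pitest), and the default minimum delta of one percentage point is a placeholder to tune.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath

GENERATED_DIR = PurePosixPath("tests/_generated")


@dataclass(frozen=True)
class GenResult:
    tests_passed: bool
    coverage_before: float   # diff coverage, percent
    coverage_after: float
    mutation_before: float   # mutation score, percent
    mutation_after: float


def decide(result: GenResult, min_mutation_delta: float = 1.0) -> str:
    """Return 'commit' or a 'discard: ...' reason for a batch of generated tests."""
    if not result.tests_passed:
        return "discard: generated tests fail"
    if result.coverage_after <= result.coverage_before:
        return "discard: no added coverage"
    if result.mutation_after - result.mutation_before < min_mutation_delta:
        return "discard: mutation score did not improve enough"
    return "commit"


def generated_path(source_file: str) -> str:
    """Place generated tests under the quarantine directory until reviewed."""
    name = PurePosixPath(source_file).stem
    return str(GENERATED_DIR / f"test_{name}.py")
```

The checks run cheapest first: a failing test run short-circuits before coverage and mutation results are even consulted.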

The Governance Layer Is Not Optional

The three patterns above are tactical wins. They become an operational risk the moment you connect agents to multiple repos, multiple models, and multiple teams without a layer that does three things: track every call, enforce policy on every call, and bound cost on every call.

flowchart LR
P[Pipeline stages] --> G[Governance Gateway]
G -->|authn / authz| A[Agents]
G -->|policy as code| Pol[OPA / Cedar]
G -->|audit log| Ev[Evidence store]
G -->|per-tenant quota| Q[Quota service]
A -->|model calls| M[LLM Gateway]
A -->|tool calls| T[MCP Gateway]

The governance layer sits in the path of every agent call. It is where you:

- attach a verifiable identity to every agent action so the audit trail survives a SOC 2 or NIST AI RMF review;

- enforce policy-as-code (OPA, Cedar) on what agents can do — what files they can write, which environments they can deploy to, which models they can use;

- meter token spend per repo and per tenant so a misconfigured agent loop can’t drain a budget overnight.

This is the LLM Gateway and MCP Gateway shape: stateless infrastructure between your pipeline and your model/tool calls. Without it, every team builds its own ad-hoc telemetry, every audit becomes a forensics exercise, and a single compromised credential becomes a much larger problem than it had to be.
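A toy version of that layer shows how the three duties combine on a single call. Everything here is illustrative: the `Gateway` and `AgentCall` classes are hypothetical, the in-memory rule set stands in for a real policy engine such as OPA or Cedar, and the list-based audit log stands in for a durable evidence store.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentCall:
    agent_id: str     # identity attached to the action
    tenant: str
    action: str       # e.g. "write_file", "deploy", "call_model"
    resource: str     # file path, environment, or model name
    est_tokens: int


@dataclass
class Gateway:
    allowed: dict[str, set[tuple[str, str]]]  # agent_id -> {(action, resource prefix)}
    quota: dict[str, int]                     # tenant -> remaining tokens
    audit_log: list[dict] = field(default_factory=list)

    def _policy_allows(self, call: AgentCall) -> bool:
        # Stand-in for a policy-as-code query (assumption: prefix matching).
        rules = self.allowed.get(call.agent_id, set())
        return any(call.action == a and call.resource.startswith(p) for a, p in rules)

    def authorize(self, call: AgentCall) -> bool:
        """Enforce policy, then quota, and log the decision either way."""
        if not self._policy_allows(call):
            decision = "deny:policy"
        elif self.quota.get(call.tenant, 0) < call.est_tokens:
            decision = "deny:quota"
        else:
            self.quota[call.tenant] -= call.est_tokens
            decision = "allow"
        self.audit_log.append({
            "agent": call.agent_id, "tenant": call.tenant,
            "action": call.action, "resource": call.resource,
            "decision": decision,
        })
        return decision == "allow"
```

Denied calls are logged as well as allowed ones, since an audit usually needs to show what the agent was prevented from doing, not only what it did.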

Common Pitfalls

- Over-automation. The first impulse is to put an agent in every stage. The second impulse — once the false positives, retries, and runaway loops surface — is to delete most of them. Start with one pattern, prove it, expand.

- Insufficient review gates. “The agent is conservative; it won’t merge anything bad” is a position your auditor will not accept. Keep humans on merge until you have a year of data to argue otherwise.

- Cost surprises. Token costs scale with diff size, context size, and retry rate. The pattern that looked cheap in pilot is the one that bankrupts the budget at production scale. Meter spend at the gateway from day one.

- Context leakage. An agent answering a code-review question on a private repo using a public model API has just sent that code to a third party. Choose models, gateways, and data-residency settings deliberately — this is the threat model from The CISO’s Guide to AI Agent Security, made operational.
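The cost pitfall is easiest to see with arithmetic. The sketch below uses a deliberately simplified model: one blended price per million tokens (real providers usually price input and output differently), and retries modeled as a flat multiplier. The function names, token counts, and the price are hypothetical numbers for illustration, not measurements.

```python
def tokens_per_pr(diff_tokens: int, context_tokens: int,
                  output_tokens: int, retry_rate: float) -> float:
    """Expected tokens for one PR; retry_rate=0.25 means 25% extra attempts."""
    base = diff_tokens + context_tokens + output_tokens
    return base * (1 + retry_rate)


def monthly_cost(prs_per_month: int, tokens: float,
                 usd_per_million_tokens: float) -> float:
    """Monthly spend in USD under a single blended token price (assumption)."""
    return prs_per_month * tokens * usd_per_million_tokens / 1_000_000


# Pilot: 40 small PRs a month with modest context.
pilot = monthly_cost(40, tokens_per_pr(2_000, 6_000, 1_000, 0.25), 5.0)

# Production: 20,000 PRs a month, each with 10x the diff and context.
production = monthly_cost(20_000, tokens_per_pr(20_000, 60_000, 10_000, 0.25), 5.0)

print(f"pilot: ${pilot:.2f}/month, production: ${production:.2f}/month")
```

With these made-up inputs the pilot costs $2.25 a month and production costs $11,250.00, a 5,000x jump that comes from volume and context size multiplying together.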

Axiom’s Integration Approach

VibeFlow is the agent runtime that picks up tickets and produces PRs through your existing Git provider. Underneath, the LLM Gateway handles model routing, auth, and cost controls; the MCP Gateway brokers tool access. The combination drops in alongside GitHub Actions / GitLab CI / Jenkins — the agent calls travel through the gateways instead of around them. Platform teams operating this in production should read /for/platform-teams; engineering managers driving the rollout will find the operational model at /for/engineering-managers.

The integration choice is not “agents or our pipeline.” It’s “agents plus our pipeline, with one governance layer between them.” Once that layer is in place, every additional agent costs hours, not weeks.

Ready to extend your pipeline? Start with VibeFlow, pair it with the LLM Gateway and MCP Gateway, and start free.

Written by

AXIOM Team