Weekly AI Command: The Tech Launchpad (Week Ending April 4, 2026)

The first week of April 2026 has marked a definitive shift in the equilibrium of the AI industry. The tension between open-source accessibility and proprietary dominance has reached a boiling point. We are seeing a divergence: high-performance multimodal models are becoming available for local execution, while major providers are simultaneously tightening their ecosystems to protect their moats.

For the enterprise, the message is clear: sovereignty is no longer a luxury but a requirement for survival. This week, Google broke open the multimodal market, Microsoft signaled a major internal pivot, and AMD announced desktop silicon that puts more compute back behind the firewall.

Google Gemma 4: The Open-Source Multimodal Breakthrough

Google has officially released Gemma 4, and it is a massive departure from previous iterations. Built from the same research and technology as the flagship Gemini 3 models, Gemma 4 is released under the Apache 2.0 license, providing the enterprise with a level of flexibility that was previously reserved for text-only models.

Gemma 4 is natively multimodal. All models process text, images, and video, while the smaller edge variants (E2B and E4B) also handle native audio input for speech recognition and understanding. The family goes beyond content understanding: it is designed for agentic workflows, with native support for function calling, structured JSON output, and multi-step planning.
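In practice, native function calling means the model emits a structured call (a tool name plus JSON arguments) that the host application validates before executing anything. The sketch below shows that host-side step under loud assumptions: the JSON shape `{"name": ..., "arguments": {...}}`, the `get_weather` tool, and the `TOOLS` registry are hypothetical illustrations, not Gemma 4's actual output format or any official API.

```python
import json
from typing import Any, Callable

# Hypothetical tool the application chooses to expose to the model.
def get_weather(city: str, unit: str = "celsius") -> dict[str, Any]:
    # Stubbed result; a real tool would query a weather service.
    return {"city": city, "temp": 21, "unit": unit}

# Hypothetical registry: tool name -> (callable, required argument names).
TOOLS: dict[str, tuple[Callable[..., Any], set[str]]] = {
    "get_weather": (get_weather, {"city"}),
}

def dispatch(model_output: str) -> dict[str, Any]:
    """Validate a model-emitted tool call, then execute it.

    Assumes the model was prompted to emit one JSON object shaped like
    {"name": "<tool>", "arguments": {...}}.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return {"error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"error": "expected a JSON object"}
    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": f"unknown tool: {name!r}"}
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}
    func, required = TOOLS[name]
    missing = required - set(args)
    if missing:
        return {"error": f"missing arguments: {sorted(missing)}"}
    try:
        return {"result": func(**args)}
    except TypeError as exc:  # e.g. an argument the tool does not accept
        return {"error": str(exc)}

print(dispatch('{"name": "get_weather", "arguments": {"city": "Oslo"}}'))
```

The design choice is that the model only proposes a call; unknown tools, malformed JSON, and missing arguments are rejected by the application before any code runs.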

The release covers four sizes: E2B (effective 2B parameters), E4B (effective 4B parameters), a 26B Mixture-of-Experts model (Gemma’s first MoE architecture, with only ~4B active parameters at inference), and the 31B Dense model. The 31B model is the standout for professional use, currently ranking as the #3 open model on the Arena AI text leaderboard. It offers reasoning that rivals last year’s leading closed-source models, yet it is small enough to deploy on-premises, so organizations can maintain strict AI compliance while using advanced vision and text analysis. The 31B and 26B models accept image and video input but lack native audio processing, which is exclusive to the edge models.
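The gap between 26B total and ~4B active parameters comes from how Mixture-of-Experts layers work: a small router scores every expert for each token, and only the top-k experts actually run. The pure-Python sketch below shows top-k gating for a single token; the expert count, k, dimensions, and toy linear experts are hypothetical and do not reflect Gemma 4's actual configuration.

```python
import math
import random

def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x: list[float], router: list[list[float]],
                experts: list[list[list[float]]], k: int = 2) -> tuple[list[float], list[int]]:
    """Route one token vector x through the top-k of len(experts) experts.

    router[e] is a weight vector that scores expert e; experts[e] is a toy
    square weight matrix. Only the k selected experts are evaluated.
    """
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    top = sorted(range(len(logits)), key=lambda e: logits[e], reverse=True)[:k]
    gates = softmax([logits[e] for e in top])  # renormalize over chosen experts
    out = [0.0] * len(x)
    for g, e in zip(gates, top):
        y = [sum(wij * xj for wij, xj in zip(row, x)) for row in experts[e]]
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, top

random.seed(0)
d, n_experts = 4, 8
router = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n_experts)]
experts = [[[random.gauss(0, 1) for _ in range(d)] for _ in range(d)] for _ in range(n_experts)]
x = [1.0, 0.5, -0.5, 2.0]
out, used = moe_forward(x, router, experts, k=2)
print(f"experts used: {used} of {n_experts}")  # only 2 expert matrices were evaluated
```

Every expert still has to be stored, but per-token compute scales with k rather than with the total expert count, which is why an MoE model can be large on disk yet comparatively cheap to run.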

Microsoft’s MAI Pivot: The Shift Toward Proprietary Foundational Models

Microsoft is no longer content being the world’s most famous OpenAI implementation partner. This week, we saw the public preview of the MAI (Microsoft Artificial Intelligence) Models, developed in-house by the MAI Superintelligence team led by Mustafa Suleyman:

- MAI-Transcribe-1: A first-generation speech recognition model delivering enterprise-grade accuracy across 25 languages at approximately 50% lower GPU cost than leading alternatives.

- MAI-Voice-1: A high-fidelity speech generation model capable of producing 60 seconds of expressive audio in under one second on a single GPU, with emotional nuance and speaker identity preservation.

- MAI-Image-2: A generative image model that debuted as a top 3 model family on the Arena.ai leaderboard, now being rolled out in Bing and PowerPoint.

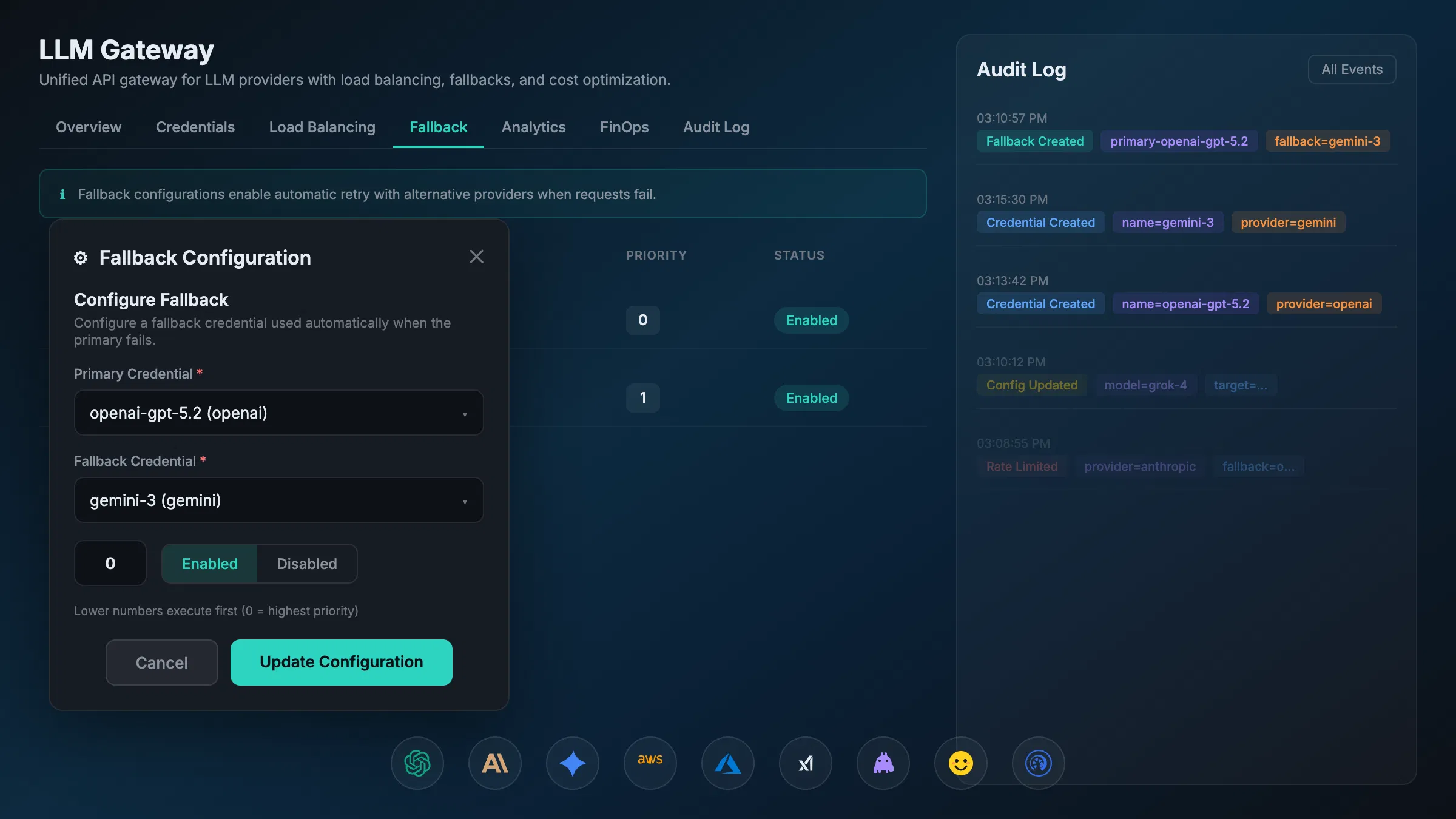

These models are already powering Microsoft’s own products, including Copilot, Bing, and Azure Speech. This shift signals a strategic move toward vertical integration. Microsoft is building its own foundational stack to reduce its long-term dependency on external providers, including OpenAI. For businesses, this adds a layer of complexity to model selection. As providers retreat into their own ecosystems, the need for a unified LLM Gateway becomes paramount.

Enterprises cannot afford to be locked into a single provider’s roadmap. The MAI release proves that even the largest players are diversifying their internal stacks, and businesses must do the same to ensure operational resilience.
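A minimal sketch of the gateway idea, assuming every provider can be wrapped behind one common function signature: the application calls the gateway, and the gateway tries providers in priority order, falling back when one fails and recording each attempt. The `Gateway` class, `ProviderError`, and the two adapters are hypothetical stand-ins, not any vendor's SDK or a specific gateway product.

```python
from dataclasses import dataclass, field
from typing import Callable

class ProviderError(Exception):
    """Raised by a provider adapter when a request cannot be served."""

@dataclass
class Gateway:
    # Ordered (name, completion function) pairs; earlier entries are preferred.
    providers: list[tuple[str, Callable[[str], str]]]
    # Audit trail of every attempt, successful or not.
    log: list[str] = field(default_factory=list)

    def complete(self, prompt: str) -> str:
        for name, call in self.providers:
            try:
                reply = call(prompt)
            except ProviderError as exc:
                self.log.append(f"{name}: failed ({exc})")
                continue  # fall back to the next provider
            self.log.append(f"{name}: ok")
            return reply
        raise ProviderError("all providers failed")

# Hypothetical adapters: one simulates an outage, one succeeds.
def primary(prompt: str) -> str:
    raise ProviderError("rate limited")

def secondary(prompt: str) -> str:
    return f"echo: {prompt}"

gw = Gateway(providers=[("primary", primary), ("secondary", secondary)])
print(gw.complete("hello"))  # prints: echo: hello
print(gw.log)
```

Because the application depends only on `Gateway.complete`, swapping or reordering providers becomes a configuration change rather than a code change.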

The Anthropic Claude Code Leak and OpenClaw Billing Change

The security community was rocked this week by an accidental source code exposure involving Claude Code, Anthropic’s popular AI coding agent. On March 31, a debugging source map file was accidentally bundled into version 2.1.88 of the Claude Code npm package. The file pointed to a zip archive on Anthropic’s own cloud storage containing the full source code for Claude Code’s agentic harness — nearly 2,000 TypeScript files and over 500,000 lines of code. Within hours, the codebase was mirrored and dissected across GitHub, amassing tens of thousands of forks.
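A release check that fails when source maps, or references to them, are present is one inexpensive guard against this class of packaging error. The Python sketch below scans a build directory before publishing; the function name, directory layout, and the policy of rejecting every `.map` file are assumptions for illustration (some teams ship source maps deliberately), and this is not Anthropic's tooling. In an npm workflow, `npm pack --dry-run` lists the files that would be published, and a `files` allowlist in `package.json` (or an `.npmignore`) controls what is included.

```python
import re
from pathlib import Path

# Matches the trailing comment bundlers append to point at a source map.
SOURCEMAP_REF = re.compile(r"^\s*//[#@]\s*sourceMappingURL=", re.MULTILINE)

def find_sourcemap_leaks(root: str) -> list[str]:
    """Return paths under root that are source maps or reference one."""
    base = Path(root)
    problems: list[str] = []
    for path in sorted(base.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(base).as_posix()
        if path.suffix == ".map":
            problems.append(f"{rel}: source map file")
        elif path.suffix in {".js", ".mjs", ".cjs"}:
            text = path.read_text(encoding="utf-8", errors="replace")
            if SOURCEMAP_REF.search(text):
                problems.append(f"{rel}: sourceMappingURL comment")
    return problems
```

Wired into CI as a pre-publish step, a non-empty result would block the release until someone confirms the files are meant to ship.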

It is important to note what was and was not leaked. The exposure involved the software harness that wraps the underlying Claude model — the orchestration logic for tools, permissions, memory management, and multi-agent workflows. It did not include the underlying model weights or training data. No customer data or credentials were exposed. Anthropic confirmed the incident was a “release packaging issue caused by human error, not a security breach.”

In a separate development, Anthropic announced major changes to its subscription billing. Starting April 4, 2026, Claude Pro and Max subscriptions will no longer cover usage through third-party tools like OpenClaw, the popular open-source AI agent platform. Users can still use OpenClaw with their Claude login, but usage will now require “extra usage” pay-as-you-go billing or a separate API key. Anthropic cited the strain such third-party usage was placing on compute resources. OpenClaw creator Peter Steinberger, who recently joined OpenAI, said he tried to delay the move. Anthropic is offering subscribers a one-time credit and discounted usage bundles to ease the transition.

This billing change is a warning sign for enterprise leaders. When a provider restricts how third-party tools access its models, it creates immediate friction for existing workflows. This is why we emphasize AI security and managed gateways: if you rely on a single model’s proprietary interface, you are at the mercy of that provider’s business decisions.

AMD Ryzen 9 9950X3D2: Expanding Desktop Compute Headroom

Compute location is the new frontier of corporate sovereignty. AMD has stepped up with the announcement of the Ryzen 9 9950X3D2 Dual Edition, set to launch on April 22, 2026. This is the world’s first consumer desktop CPU with dual 3D V-Cache — both chiplets feature AMD’s 2nd Gen 3D V-Cache technology, delivering a massive 192MB of L3 cache (208MB total cache) across 16 cores and 32 threads.
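The headline cache figures add up as follows, assuming the standard Zen 5 layout of 32MB of on-die L3 per CCD, a 64MB 2nd Gen 3D V-Cache die stacked on each CCD, and 1MB of L2 per core. These per-die figures are background assumptions, not numbers stated in the announcement above.

```python
# Assumed Zen 5 per-die figures in MB; not taken from the announcement itself.
CCDS = 2
CORES_PER_CCD = 8
L3_PER_CCD = 32       # on-die L3 per chiplet
VCACHE_PER_CCD = 64   # stacked 3D V-Cache per chiplet
L2_PER_CORE = 1

l3_total = CCDS * (L3_PER_CCD + VCACHE_PER_CCD)   # both chiplets stacked: 2 * 96
l2_total = CCDS * CORES_PER_CCD * L2_PER_CORE     # 16 cores * 1MB
single_stack_l3 = L3_PER_CCD + (L3_PER_CCD + VCACHE_PER_CCD)  # one stacked CCD, as on the 9950X3D

print(l3_total, l3_total + l2_total, single_stack_l3)  # 192 208 128
```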

The processor runs at a base clock of 4.3 GHz with a boost clock of 5.6 GHz and a TDP of 200W. AMD is positioning the chip for creators and developers who demand performance without compromise, bridging the gap between gaming and HEDT (high-end desktop) workloads with 5-13% performance gains over the existing 9950X3D in complex workflows.

For teams exploring local AI workloads, the broader trend this chip represents is significant. As on-device and on-premises inference becomes increasingly viable with quantized open models, high-cache, high-core-count desktop processors will play a growing role in developer workflows. Running models locally keeps sensitive intellectual property off third-party servers, removing a major source of data privacy risk, and it shields teams from the cost spikes that come with high-volume API calls. For teams looking into AI FinOps, moving to high-performance local hardware is an increasingly effective way to cap long-term operational expenses.
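Whether a quantized open model fits on local hardware is mostly arithmetic: weight memory is roughly parameter count times bits per weight divided by eight. The sketch below applies that rule to the Gemma 4 sizes mentioned earlier; it counts weights only (KV cache, activations, and runtime overhead add more), and the 16/8/4-bit settings are illustrative choices rather than published deployment figures.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 10**9 bytes).

    Billions of parameters * bits per weight / 8 bits per byte = GB.
    Ignores KV cache, activations, and runtime overhead.
    """
    return params_billion * bits_per_weight / 8

# Use total (not active) parameters: MoE experts are typically all kept in memory.
MODELS = {"Gemma 4 31B dense": 31, "Gemma 4 26B MoE": 26}

for name, params in MODELS.items():
    row = ", ".join(f"{bits}-bit ~{weight_memory_gb(params, bits):.1f} GB" for bits in (16, 8, 4))
    print(f"{name}: {row}")
```

At 4 bits the 31B model needs roughly 15.5 GB for its weights, which is what brings it within reach of a single well-equipped workstation.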

DeepMind: Automated Algorithm Discovery with AlphaEvolve

Perhaps the most technically provocative news of the week comes from Google DeepMind. Researchers applied AlphaEvolve, their LLM-powered evolutionary coding agent, to the domain of multi-agent reinforcement learning in imperfect-information games like poker. The system was tasked with discovering new algorithm variants for two established paradigms — Counterfactual Regret Minimization (CFR) and Policy Space Response Oracles (PSRO) — and in both cases, it discovered new variants that perform competitively against or better than existing hand-designed state-of-the-art baselines.
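CFR is built on regret matching: at each decision point, play each action in proportion to its accumulated positive regret, and the time-averaged strategy converges toward equilibrium. The self-contained sketch below runs regret matching in self-play on rock-paper-scissors, a one-shot game with no hidden information, so it illustrates only the per-decision update that CFR applies at every information set. It is not full CFR, and it is not any of the variants AlphaEvolve discovered.

```python
# Row player's payoff; actions are 0 = rock, 1 = paper, 2 = scissors.
PAYOFF = [
    [0, -1, 1],
    [1, 0, -1],
    [-1, 1, 0],
]
N = 3

def strategy_from_regrets(regrets: list[float]) -> list[float]:
    """Regret matching: mix actions in proportion to positive regret."""
    positive = [max(r, 0.0) for r in regrets]
    total = sum(positive)
    if total > 0:
        return [p / total for p in positive]
    return [1.0 / N] * N  # no positive regret: play uniformly

def action_values(opp_strategy: list[float]) -> list[float]:
    """Expected payoff of each pure action against the opponent's mix."""
    return [sum(PAYOFF[a][b] * opp_strategy[b] for b in range(N)) for a in range(N)]

def self_play(iterations: int) -> list[list[float]]:
    # Asymmetric start so the dynamics are non-trivial: player 0 favors rock.
    regrets = [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
    strategy_sums = [[0.0] * N, [0.0] * N]
    for _ in range(iterations):
        strats = [strategy_from_regrets(r) for r in regrets]
        for p in (0, 1):
            values = action_values(strats[1 - p])
            expected = sum(s * v for s, v in zip(strats[p], values))
            for a in range(N):
                regrets[p][a] += values[a] - expected  # accumulate instantaneous regret
                strategy_sums[p][a] += strats[p][a]
    # The average strategy, not the last iterate, is what converges.
    return [[s / iterations for s in sums] for sums in strategy_sums]

avg = self_play(100_000)
print([round(v, 3) for v in avg[0]])  # each entry close to 1/3
```

The current strategies keep cycling, but the averages settle near the uniform equilibrium; CFR applies the same update at every information set of a larger game, weighted by counterfactual reach probabilities.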

This is not an LLM rewriting its own internal logic. Rather, the LLM serves as a search operator within an evolutionary framework, exploring the space of possible algorithms by iteratively generating and refining code based on evaluation feedback. Where its variants beat the hand-designed baselines, the system found more efficient mathematical pathways than its human-designed predecessors, a powerful demonstration of AI-assisted algorithm discovery.
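The generate, evaluate, and select loop described above can be sketched as a plain evolutionary search in which a proposal function takes the role the LLM plays in AlphaEvolve. In this illustrative sketch the "programs" are just coefficient triples for a polynomial, `propose_variant` is a random mutation standing in for an LLM call, and `score` is a hypothetical evaluator; none of these names or details come from DeepMind's system.

```python
import random

Candidate = tuple[float, float, float]

# Hypothetical target the search should rediscover: y = 3x^2 - 2x + 1.
DATA = [(x, 3 * x * x - 2 * x + 1) for x in range(-5, 6)]

def score(c: Candidate) -> float:
    """Evaluator feedback: negative squared error on DATA (higher is better)."""
    a, b, k = c
    return -sum((a * x * x + b * x + k - y) ** 2 for x, y in DATA)

def propose_variant(parent: Candidate, rng: random.Random) -> Candidate:
    """Stand-in for the LLM call: randomly perturb one coefficient."""
    child = list(parent)
    i = rng.randrange(len(child))
    child[i] += rng.gauss(0.0, 0.5)
    return (child[0], child[1], child[2])

def evolve(generations: int = 2000, population_size: int = 20, seed: int = 0) -> Candidate:
    rng = random.Random(seed)
    population: list[Candidate] = [(0.0, 0.0, 0.0)] * population_size
    for _ in range(generations):
        # Tournament selection: the fitter of two random members becomes the parent.
        parent = max(rng.sample(population, 2), key=score)
        child = propose_variant(parent, rng)
        # Keep the child only if it beats the current worst member.
        worst = min(range(population_size), key=lambda i: score(population[i]))
        if score(child) > score(population[worst]):
            population[worst] = child
    return max(population, key=score)

best = evolve()
print([round(v, 2) for v in best], round(score(best), 3))
```

The evaluator is the only source of truth in this loop, which is why the quality and auditability of the scoring function matter as much as the proposal mechanism.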

This brings the concept of AI observability to the forefront. As AI systems are increasingly used to discover or optimize algorithms that govern other AI systems, the human ability to audit those decisions becomes strained. Enterprises must have the infrastructure in place to monitor and validate AI-generated improvements to ensure they remain aligned with business objectives and ethical standards.

The AXIOM Perspective: Chaos and Control

We have seen this pattern before in the early days of cloud computing and the rise of mobile operating systems. A period of rapid, chaotic innovation is typically followed by a consolidation of power.

This week’s releases (Gemma 4, MAI, and the hardware to run them) represent a massive increase in the power available to the enterprise. However, power without control leads to chaos. The Claude Code leak and the sudden shift in subscription billing remind us that platform providers will always prioritize their own stability over your flexibility.

At AXIOM Studio, we believe the solution is an orchestration layer that sits between the enterprise and the model. Whether you are using our MCP Gateway to manage model context or the A2A Gateway to handle agent-to-agent communication, the goal is the same: absolute visibility and control.

Key Takeaways for the Week:

- Open Source is catching up: Gemma 4 makes high-quality multimodal agents accessible to anyone, now under a fully permissive Apache 2.0 license with four model sizes from edge to enterprise.

- Diversification is mandatory: Microsoft’s move to in-house MAI models means the LLM landscape is becoming more fragmented, not less.

- Platform risk is real: Anthropic’s Claude Code packaging error exposed its full agent harness, and the OpenClaw billing change shows providers will protect their own capacity over ecosystem flexibility.

- Hardware is the enabler: AMD’s dual 3D V-Cache architecture pushes desktop compute further into workstation territory, supporting the move toward local-first development workflows.

Execution in 2026 is about speed, but governance is what makes that speed sustainable. Don’t let shadow AI dictate your company’s future. Build on a foundation of managed, governed, and visible AI.

Ready to take control of your AI stack? Explore how our AI Gateway can stabilize your enterprise workflows in a rapidly shifting market.

Frequently Asked Questions

What is AI governance? AI governance refers to the frameworks, policies, and practices that organizations implement to ensure AI systems are developed and used responsibly, ethically, and in compliance with regulations.

Why is this important for enterprises? Enterprises face unique challenges with AI adoption, including regulatory compliance, data security, shadow AI proliferation, and the need to demonstrate ROI. Proper AI governance addresses all of these concerns.

How does this relate to AI regulations? With regulations like the EU AI Act coming into effect, organizations need comprehensive AI governance to ensure compliance, maintain audit trails, and demonstrate responsible AI usage.

What are the security implications? AI systems can introduce security risks including data leakage, unauthorized access, and potential misuse. Proper governance ensures security controls are in place across all AI deployments.

How can I learn more about implementing this? Request early access to AXIOM to see how our platform can help your organization implement enterprise-grade AI governance with complete visibility, control, and compliance.

Written by

AXIOM Team