Weekly AI Command: The Tech Launchpad (March 15, 2026)

The era of AI experimentation has officially closed. We have entered the era of execution.

This past week, the industry moved past the novelty of chat interfaces and pivoted toward autonomous, high-frequency execution. The announcements between March 8 and March 15 represent a fundamental shift: AI is no longer just “thinking”; it is doing. For the enterprise, the timeline for deployment has shrunk from months to days. However, as the velocity of these launches increases, the gap between “working in a lab” and “production-ready” widens.

At AXIOM Studio, we track these shifts not to admire the tech, but to govern it. Here is the breakdown of the tech that defined the week.

NVIDIA Nemotron 3 Super: The Agentic Backbone

NVIDIA didn’t just release a model; they released a specialized engine for the agentic era. Nemotron 3 Super uses a hybrid architecture that combines Mamba and Transformer layers within a Mixture of Experts (MoE) framework.

This isn’t just technical trivia. The cost of self-attention grows quadratically with sequence length, while Mamba layers scale linearly, so the hybrid eases the scaling problem that plagues pure-Transformer LLMs. Paired with a 1-million-token context window, this lets the model maintain “state” across massive enterprise datasets without the latency spikes usually associated with large-scale processing.
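
To make the scaling argument concrete, here is a back-of-the-envelope sketch in Python. It only counts operations per layer: token pairs for full self-attention versus state updates for a linear-time layer. The function names are ours, and the counts ignore constants, hidden dimensions, and the fact that a hybrid model still keeps some attention layers, so treat it as an illustration of growth rates rather than a benchmark of any real model.

```python
def attention_pairs(n_tokens: int) -> int:
    """Token-to-token scores computed by one full self-attention layer (n^2)."""
    return n_tokens * n_tokens


def linear_steps(n_tokens: int) -> int:
    """State updates performed by one linear-time recurrent layer (n)."""
    return n_tokens


if __name__ == "__main__":
    # Compare growth at three context lengths, up to the 1M-token window.
    for n in (1_000, 100_000, 1_000_000):
        ratio = attention_pairs(n) // linear_steps(n)
        print(f"{n:>9,} tokens: {attention_pairs(n):.0e} pairs "
              f"vs {linear_steps(n):.0e} steps ({ratio:,}x)")
```

The gap between the two counts grows with the context length itself, which is why long contexts are where a linear-time layer matters most.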

For the enterprise, Nemotron 3 Super is built for agentic work. It excels at long-horizon reasoning, meaning tasks that require an AI to plan 50 steps ahead without losing the original objective. When your agents need to navigate complex ERP systems or multi-layered supply chain data, this is the architecture that prevents the “forgetting” problem.

OpenAI GPT-5.4: The Computer-Operable Frontier

Hard on the heels of the 5.3 release, OpenAI launched GPT-5.4 “Thinking.” While previous iterations focused on conversational fluidity, 5.4 is a specialized reasoning model. The headline feature is its native computer-operable functions.

We are moving away from agents that interact via API and toward agents that interact via UI. GPT-5.4 can view a screen, understand spatial elements, and execute clicks and keystrokes with precision. This model treats a computer interface the same way a human does: as a visual workspace.

OpenAI also pushed the context window to 1 million tokens in the API, matching the current industry standard. This allows for massive document ingestion, but the real power lies in the “Thinking” mode. The model performs internal chain-of-thought verification before providing an output, significantly reducing the hallucinations that previously made autonomous computer use too risky for production.

Databricks Genie Code: Agentic Data Engineering

Databricks is attacking the bottleneck of data engineering with Genie Code. This isn’t a simple text-to-SQL tool. Genie Code is a full-stack agentic data engineer. It can autonomously write, test, and deploy data pipelines.

In many organizations, the path from “raw data” to “AI-ready data” takes weeks. Genie Code reduces this to minutes. It understands schema relationships, identifies data quality issues, and suggests optimizations for Spark jobs. This launch signals the end of manual ETL (Extract, Transform, Load) processes for standard data tasks.

However, delegating code execution to an agent requires an A2A (Agent-to-Agent) Gateway to ensure that these autonomous scripts don’t bypass security protocols or create runaway cloud costs.
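
As a rough illustration of the kind of check such a gateway might run, the sketch below approves an agent-submitted job only if its target is allowlisted and its estimated cost fits within a daily budget. Everything here (`JobRequest`, `BudgetGuard`, the field names, the dollar figures) is hypothetical and is not part of any Databricks or A2A API.

```python
from dataclasses import dataclass


@dataclass
class JobRequest:
    agent_id: str
    target: str                # hypothetical environment name, e.g. "staging"
    estimated_cost_usd: float


class BudgetGuard:
    """Approves agent jobs only within a spend cap and a target allowlist."""

    def __init__(self, daily_limit_usd: float, allowed_targets: set[str]):
        self.daily_limit_usd = daily_limit_usd
        self.allowed_targets = allowed_targets
        self.spent_usd = 0.0

    def authorize(self, job: JobRequest) -> tuple[bool, str]:
        if job.target not in self.allowed_targets:
            return False, f"target '{job.target}' is not allowlisted"
        if self.spent_usd + job.estimated_cost_usd > self.daily_limit_usd:
            return False, "daily budget would be exceeded"
        self.spent_usd += job.estimated_cost_usd  # reserve the spend on approval
        return True, "approved"


guard = BudgetGuard(daily_limit_usd=100.0, allowed_targets={"staging"})
print(guard.authorize(JobRequest("etl-agent-1", "staging", 60.0)))
print(guard.authorize(JobRequest("etl-agent-1", "staging", 60.0)))
print(guard.authorize(JobRequest("etl-agent-1", "production", 1.0)))
```

The second request is rejected on budget and the third on target, which are the two failure modes named above: runaway cost and bypassed security boundaries.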

Microsoft AgentRx: Systematic Debugging for the Autonomous Fleet

As enterprises deploy hundreds of agents, the primary challenge shifts from development to maintenance. Microsoft’s AgentRx is a systematic debugging framework designed specifically for agentic workflows.

When an agent fails, it’s rarely a simple syntax error. It’s usually a logic loop or a tool-calling failure. AgentRx provides a “black box” flight recorder for agents, allowing developers to replay the agent’s thought process, identify the point of failure, and apply a patch across the entire fleet.
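
The flight-recorder idea can be sketched in a few lines. The example below is our own minimal illustration, not AgentRx’s API: the `Step` and `FlightRecorder` names, their fields, and the loop heuristic are all assumptions. It records each step, finds the first failed tool call, and flags a simple logic loop where the agent repeats the same step.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Step:
    index: int
    thought: str   # the agent's stated reasoning for this step
    tool: str      # hypothetical tool name, e.g. "erp.search"
    ok: bool       # whether the tool call succeeded


@dataclass
class FlightRecorder:
    steps: list[Step] = field(default_factory=list)

    def record(self, thought: str, tool: str, ok: bool) -> None:
        self.steps.append(Step(len(self.steps), thought, tool, ok))

    def first_failure(self) -> Optional[Step]:
        """Return the earliest failed step, or None if every step succeeded."""
        return next((s for s in self.steps if not s.ok), None)

    def has_loop(self, window: int = 3) -> bool:
        """True if the last `window` steps repeat the same thought and tool."""
        tail = self.steps[-window:]
        return len(tail) == window and len({(s.thought, s.tool) for s in tail}) == 1


recorder = FlightRecorder()
recorder.record("look up the order", "erp.search", True)
recorder.record("update the order", "erp.update", False)
failure = recorder.first_failure()
print(failure.index, failure.tool)
```

A real debugging framework would capture far more (inputs, outputs, model versions), but even this much is enough to replay a run and point at the step where it went wrong.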

This is a critical component for agentic AI development. You cannot run a production environment if you cannot audit why an agent made a specific decision. AgentRx provides that visibility.

The Memory Layer: Persistent Identity for AI

Two major launches this week addressed the “amnesia” problem in AI: Memori Labs and Zilliz Memsearch.

Traditional LLMs start every session with a blank slate. Even with long context windows, “long-term memory” has been simulated through RAG (Retrieval-Augmented Generation). These new persistent memory launches allow agents to maintain a continuous identity and history across months of operation.

- Memori Labs focuses on “Relationship Memory,” allowing an agent to remember user preferences, past project nuances, and specific organizational jargon.

- Zilliz Memsearch optimizes the vector database layer to allow for near-instant retrieval of “memories” across trillions of data points.
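
To show what persistent memory means mechanically, here is a minimal sketch that stores “memories” as embedding vectors on disk and recalls the closest one by cosine similarity. It is not how Memori Labs or Zilliz Memsearch are implemented: the `MemoryStore` class, the JSON file, and the two-dimensional toy embeddings are assumptions for illustration, and a production system would use a real embedding model and a vector database.

```python
import json
import math
from pathlib import Path


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class MemoryStore:
    """Toy memory layer: survives restarts by writing to a JSON file."""

    def __init__(self, path: Path):
        self.path = path
        self.items = json.loads(path.read_text()) if path.exists() else []

    def remember(self, embedding: list[float], text: str) -> None:
        self.items.append({"embedding": embedding, "text": text})
        self.path.write_text(json.dumps(self.items))

    def recall(self, query: list[float], k: int = 1) -> list[str]:
        ranked = sorted(self.items,
                        key=lambda m: cosine(query, m["embedding"]),
                        reverse=True)
        return [m["text"] for m in ranked[:k]]
```

Creating a second `MemoryStore` on the same path in a later session reloads everything the first one remembered, which is the difference between a blank-slate session and an agent with history.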

Without persistent memory, agents are just temporary scripts. With it, they become long-term digital employees.

Google Gemini 3.1 Flash-Lite: Intelligence at the Perimeter

While others went big, Google went small and fast. Gemini 3.1 Flash-Lite is designed for edge AI. This model is optimized for sub-100ms latency on local devices.

In an enterprise context, not every task needs a massive frontier model. Flash-Lite is the “worker bee” model. It handles local data classification, real-time translation, and basic form filling without ever sending data to the cloud. This is a massive win for AI security and latency-sensitive applications like manufacturing floor monitoring or retail point-of-sale systems.

The Execution Gap: From Chaos to Control

The announcements from this week represent a staggering amount of power. But for the enterprise, power without control is chaos.

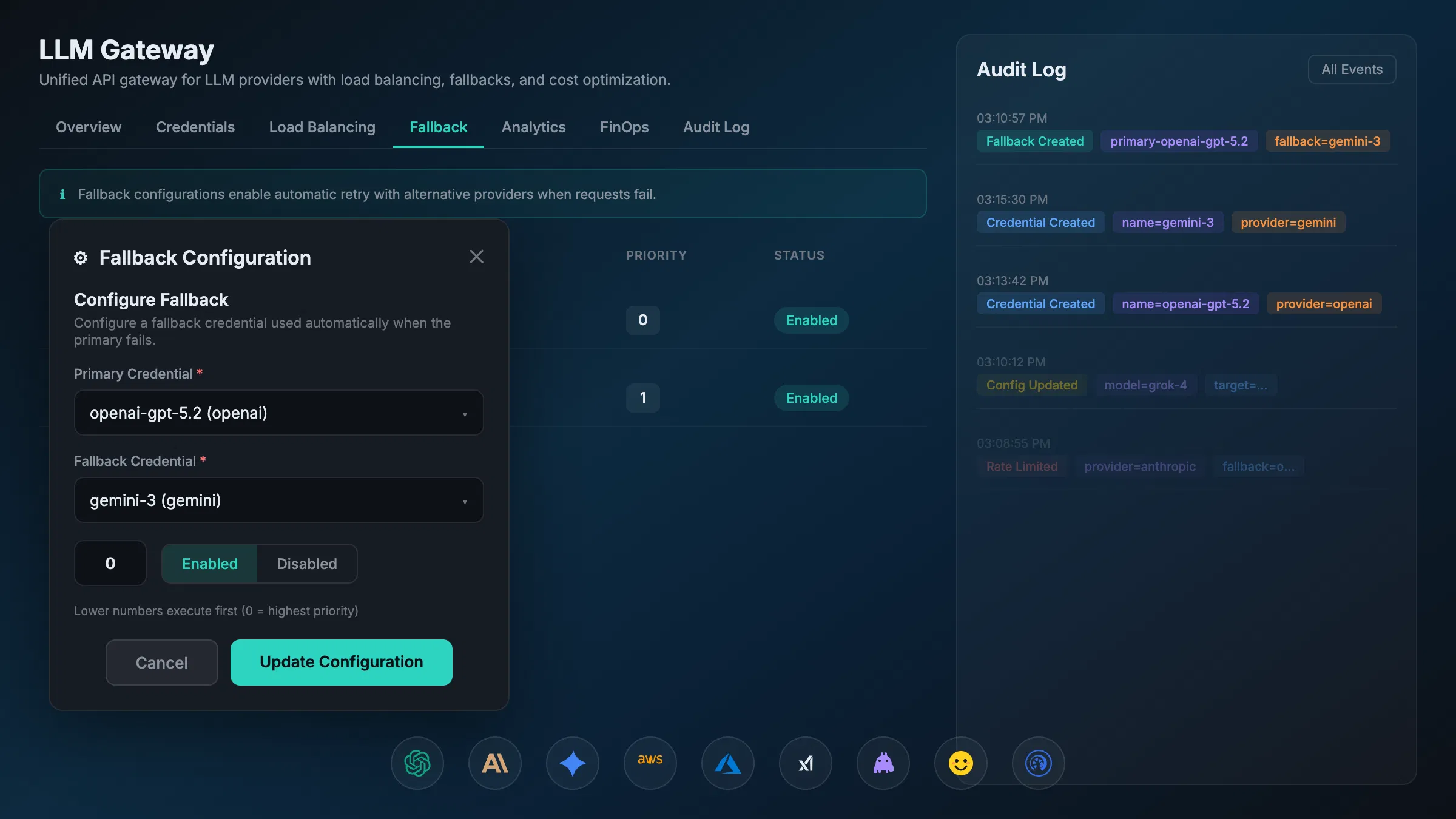

The “Days, not months” philosophy only works if your infrastructure can handle the influx of these new models. If your team starts using GPT-5.4 for computer use, Nemotron for long-context reasoning, and Flash-Lite for the edge, you are suddenly managing a fragmented, high-risk ecosystem.

This is where Shadow AI becomes a liability. When developers or business units spin up these tools independently, you lose visibility into:

- Security: Who is GPT-5.4 “seeing” when it operates a computer?

- Compliance: Does Nemotron’s 1M context window contain PII that violates the EU AI Act?

- Cost: How do you track the AI FinOps across five different providers?
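
The cost question is the easiest of the three to make concrete. The sketch below keeps one ledger of token usage across providers and rolls it up by team. The `UsageLedger` class, the provider labels, and the per-1,000-token prices are placeholders we made up for the example, not real pricing or a real billing API.

```python
from collections import defaultdict


class UsageLedger:
    """Tracks token usage per (provider, team) and converts it to spend."""

    def __init__(self, price_per_1k_tokens: dict[str, float]):
        self.prices = price_per_1k_tokens       # placeholder prices, not real ones
        self.tokens = defaultdict(int)

    def log(self, provider: str, team: str, tokens: int) -> None:
        if provider not in self.prices:
            # Usage from an unregistered provider is exactly the Shadow AI case.
            raise KeyError(f"no price registered for provider '{provider}'")
        self.tokens[(provider, team)] += tokens

    def cost_by_team(self) -> dict[str, float]:
        totals = defaultdict(float)
        for (provider, team), count in self.tokens.items():
            totals[team] += count / 1000 * self.prices[provider]
        return dict(totals)


ledger = UsageLedger({"provider_a": 0.5, "provider_b": 0.25})
ledger.log("provider_a", "finance", 2_000)
ledger.log("provider_b", "finance", 4_000)
ledger.log("provider_b", "ops", 8_000)
print(ledger.cost_by_team())
```

The useful property is not the arithmetic but the single point of record: usage that never passes through the ledger cannot be attributed to anyone.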

SVG Placeholder: A simple diagram showing a unified AXIOM Gateway sitting between multiple AI Models (OpenAI, NVIDIA, Google) and the Enterprise Apps, with ‘Security’ and ‘Governance’ layers highlighted.

At AXIOM Studio, we believe the solution is a unified governance layer. Whether it is an AI Gateway for managing model access or an MCP Gateway for standardized tool calling, the goal is the same: Sovereignty.
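
In its simplest form, a gateway for model access is a policy lookup plus an audit trail. The sketch below is a generic illustration under our own assumptions, not the AXIOM product’s API; the `Gateway` class, team names, and model labels are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Gateway:
    # Maps each team to the set of model labels it is permitted to call.
    allowed: dict[str, set[str]]
    # Every decision is logged as (team, model, permitted), including denials.
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def route(self, team: str, model: str) -> bool:
        permitted = model in self.allowed.get(team, set())
        self.audit_log.append((team, model, permitted))
        return permitted


gateway = Gateway(allowed={"data-eng": {"long-context-model"},
                           "retail": {"edge-model"}})
print(gateway.route("data-eng", "long-context-model"))
print(gateway.route("retail", "computer-use-model"))
print(gateway.audit_log)
```

Logging denials as well as approvals matters here: a rejected request is often the first visible sign that a team is trying to adopt a tool outside the governed path.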

Summary and Takeaways

This week proved that the technical hurdles to autonomous AI are falling.

- NVIDIA and OpenAI have solved the reasoning and context problems.

- Databricks and Microsoft are solving the engineering and debugging problems.

- Google is solving the latency problem.

- Zilliz and Memori are solving the memory problem.

The remaining hurdle is the Governance Problem.

As you look to integrate these launches into your roadmap this month, don’t ask if the tech works: we know it does. Ask if you have the visibility to run it in production. To move from experimentation to execution in “days, not months,” you need a platform that provides a single pane of glass for all AI activity.

The speed of AI is no longer limited by the models. It is limited by your ability to govern them.

Want to learn more about securing these new agentic workflows? Explore our guide on AI Governance Fundamentals or see how our LLM Gateway can provide immediate visibility into your model usage. Get started with AXIOM for free.

Frequently Asked Questions

What makes NVIDIA Nemotron 3 Super different from other LLMs? Nemotron 3 Super uses a hybrid Mamba-Transformer architecture within a Mixture of Experts framework, easing the quadratic scaling problem of pure-Transformer LLMs. Its 1-million-token context window and agentic focus make it purpose-built for long-horizon enterprise reasoning tasks like ERP navigation and supply chain analysis.

How does GPT-5.4 computer use change enterprise AI deployment? GPT-5.4 moves beyond API-based interactions to native UI operation: the model can view screens, understand spatial elements, and execute actions like a human user. This enables automation of complex desktop workflows but requires strict governance to control what the model can access.

What is shadow AI and why does it matter with these new model launches? Shadow AI occurs when teams adopt AI tools without centralized IT oversight. With five major model launches in a single week, the risk of ungoverned adoption skyrockets. An AI Gateway provides a single control plane to manage security, compliance, and cost across all providers.

How should enterprises manage a multi-model AI strategy? Rather than locking into a single provider, enterprises should deploy a unified governance layer — such as an AI Gateway or MCP Gateway — that enables instant model switching, centralized logging, and consistent security policies across OpenAI, NVIDIA, Google, and other providers.

How can I start governing these new AI capabilities in my organization? Get started with AXIOM for free to deploy a unified AI governance platform that provides visibility, multi-model management, and compliance controls across your entire AI stack — from frontier models to edge deployments.

Written by

AXIOM Team