From AI Software Developer to AI Software Delivery: SDLC Discipline as the Real Differentiator

The right procurement question isn't whose AI engineer is best. It's whose delivery process is most defensible. Five disciplines + an RFP question bank for buyers.

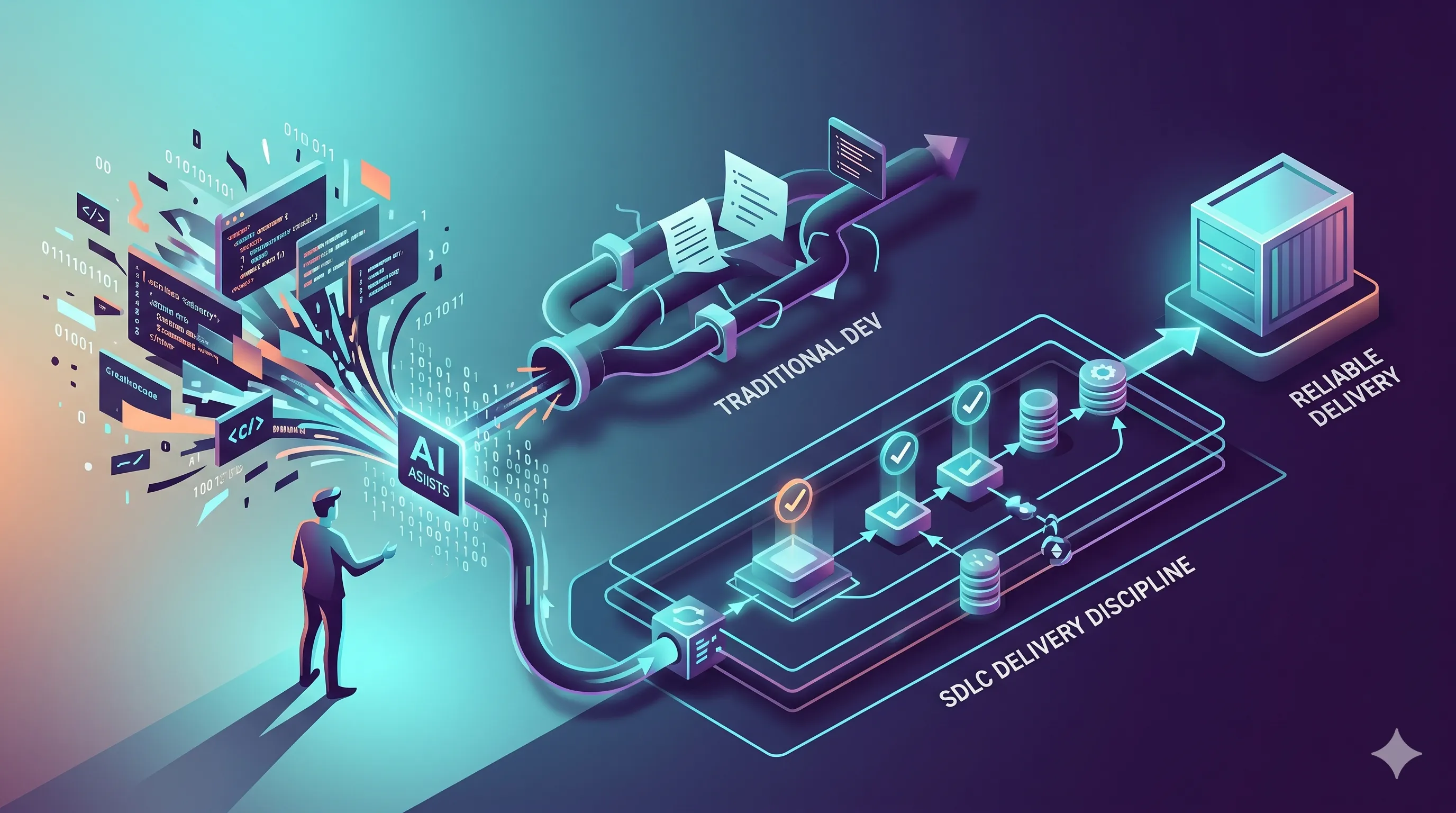

Article 2A made the case that the single-AI-engineer frame silently relocates eight of nine SDLC stages to the buyer’s organisation. Article 2B introduced the agent-team alternative — specialised roles with typed handoffs.

This third article — closing the trilogy — argues that even the agent-team frame isn’t the whole story. The real predictor of enterprise outcomes is not how good the developer agent is, or even how clean the team-of-agents looks. It is how disciplined the delivery process is around all of it. The right unit of comparison is the entire delivery process, not the developer agent.

That sounds abstract. The rest of this article makes it concrete.

The Five Disciplines of AI Software Delivery

Take any AI software-development product, hold it up against five disciplines, and the answer to “is this production-ready for our enterprise?” drops out. The disciplines aren’t novel — they are the same ones a mature software organisation already runs against itself. The novelty is applying them to AI delivery as the procurement criterion.

| # | Discipline | What it means in practice | How it fails when missing |
|---|---|---|---|
| 1 | Design before code | Architecture review is a first-class step, not implicit in coding | Diff-shaped thinking; debt accumulation across cross-cutting changes |
| 2 | Specialist review | Separate eyes for QA, for security, for compliance; not the same agent | Same-mind review; shared blind spots; “tests pass” as the only gate |
| 3 | Evidence on every change | Agent identity, prompt, model version, human approver, control mappings captured automatically | Audit becomes forensics; compliance evidence reconstructed after the fact |
| 4 | Observability + rollback | Once shipped, you can tell what the agent did and undo it cleanly | Production incidents take 3× longer to triage; rollback is manual |
| 5 | Continuous policy enforcement | Guardrails as code (OPA, Cedar), not memos or training | Policy drifts from practice; new joiners (or new agents) violate intent |

The five disciplines compose. Discipline 1 (design) makes Discipline 2 (review) effective because reviewers have an artifact to review. Discipline 2 produces the inputs Discipline 3 (evidence) records. Discipline 4 (observability) is what makes the others recoverable when they fail. Discipline 5 (policy) is what keeps the other four from drifting over time.
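The composition argument can be sketched as an ordered chain of pre-merge gates. This is a minimal Python sketch under stated assumptions: the `Change` fields, the gate names, and the `qa`/`security` reviewer roles are hypothetical, not any vendor's schema. It models Disciplines 1, 2, 3, and 5 as gates that each depend on what the previous one produced; Discipline 4 applies after the change ships, so it is left out.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Change:
    """Hypothetical work item; field names are illustrative, not a product schema."""
    author_agent: str
    design_doc: Optional[str] = None                                     # Discipline 1 artifact
    reviews: Dict[str, Tuple[str, str]] = field(default_factory=dict)   # role -> (reviewer, verdict)
    evidence: Dict[str, str] = field(default_factory=dict)              # Discipline 3 record
    policy_violations: List[str] = field(default_factory=list)          # Discipline 5 findings


REQUIRED_REVIEW_ROLES = ("qa", "security")


def design_gate(change: Change) -> Optional[str]:
    # Discipline 1: reviewers need an artifact to review against.
    if not change.design_doc:
        return "no design artifact to review against"
    return None


def review_gate(change: Change) -> Optional[str]:
    # Discipline 2: every specialist role approves, and no reviewer is the author.
    for role in REQUIRED_REVIEW_ROLES:
        reviewer, verdict = change.reviews.get(role, ("", ""))
        if verdict != "approve":
            return f"missing approval from {role} reviewer"
        if reviewer == change.author_agent:
            return f"{role} review was done by the authoring agent"
    return None


def evidence_gate(change: Change) -> Optional[str]:
    # Discipline 3: record what the earlier gates produced; this gate never blocks.
    change.evidence["design_doc"] = change.design_doc or ""
    for role, (reviewer, _verdict) in change.reviews.items():
        change.evidence[f"{role}_reviewer"] = reviewer
    return None


def policy_gate(change: Change) -> Optional[str]:
    # Discipline 5: any recorded policy violation blocks the merge.
    if change.policy_violations:
        return "; ".join(change.policy_violations)
    return None


GATES: List[Callable[[Change], Optional[str]]] = [design_gate, review_gate, evidence_gate, policy_gate]


def run_gates(change: Change) -> Tuple[bool, str]:
    """Run the gates in order and stop at the first failure."""
    for gate in GATES:
        failure = gate(change)
        if failure is not None:
            return False, f"{gate.__name__}: {failure}"
    return True, "all gates passed"
```

Stopping at the first failing gate mirrors the dependency order described above: there is no point asking specialists to approve a change with no design artifact, and no point recording evidence for reviews that never happened.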

```mermaid
quadrantChart
    title AI delivery products on the five disciplines
    x-axis Low coverage --> High coverage
    y-axis Bolt-on --> First-class
    quadrant-1 Mature platform
    quadrant-2 Demo-driven product
    quadrant-3 Single-feature tool
    quadrant-4 Buyer-assembles
    "VibeFlow": [0.85, 0.85]
    "Devin": [0.55, 0.45]
    "Linear+CI": [0.55, 0.30]
    "Cursor+CI": [0.40, 0.25]
    "Bare LLM": [0.10, 0.10]
```

The quadrant view is illustrative, not a leaderboard. The point is the spectrum: products range from “buyer assembles every discipline” to “platform owns every discipline as first-class flow.” Where each product belongs depends on the buyer’s existing infrastructure, not on the demo.

Where “AI Software Developer” Companies Score Lowest

When the framing is “AI software engineer” — a single agent shipping code — Disciplines 1, 2, 3, and 5 are structurally weak by default. Not because the agent is bad. Because a single-agent product is the wrong shape to own those disciplines. The agent can be excellent at coding (Discipline 0, the implicit pre-stage) and still leave the buyer to assemble the other four.

In practice, this shows up as one pattern per discipline:

- Discipline 1 (design) is implicit, not explicit. The agent decides architecture inline; the team has no design doc to review or version.

- Discipline 2 (review) collapses to “the human reviewer”: whatever PR-review pipeline the buyer already runs.

- Discipline 3 (evidence) is the agent’s commit plus the human’s PR approval, a thin record by audit standards.

- Discipline 4 (observability) is whatever the buyer’s existing telemetry catches, with no agent-side context.

- Discipline 5 (policy) is enforced — if at all — by the surrounding pipeline’s guardrails.

These are not failings of the product. They are failings of the category the product is competing in. A single-agent product will always score thin on Disciplines 1, 2, 3, 5. That’s the structural shape, not the implementation quality.

The Procurement Question Bank

If discipline-based scoring is the right framework, the procurement RFP should ask discipline-aligned questions. Below is a reusable question bank for buyers — one row per discipline, one or two questions a buyer should ask any AI dev platform vendor before signing.

| Discipline | Question to ask the vendor |
|---|---|
| Design before code | “Show us the design document or specification artifact your platform produces before code is written. Is it versioned? Is it reviewable independently of the code?” |
| Specialist review | “When the agent that writes the code submits a PR, who or what reviews it adversarially BEFORE the human reviewer? Is that review captured as a separate artifact?” |
| Evidence on every change | “For every change merged from your platform, list the audit elements captured automatically: agent identity, model version, prompt, human approver, control mappings. Show us a sample audit record.” |
| Observability + rollback | “When a change shipped via your platform causes a production incident at 3am, what does the on-call engineer see? Can they tell which agent produced it, on what model, with what context, and roll it back as a single action?” |
| Continuous policy enforcement | “How does your platform enforce policy as code (OPA / Cedar / similar)? Where do policy violations surface, and at which gate are they enforced?” |

A vendor that answers all five with documentation, sample artifacts, and a live demo is selling a delivery platform. A vendor that answers some with “the surrounding pipeline handles that” is selling a feature. Both can be appropriate purchases — the failure is paying delivery-platform prices for feature-shaped value.
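To make the Discipline 3 question concrete, here is a minimal sketch of what a sample audit record might contain and how a buyer could check one for completeness. The field names, the `SOC2:CC8.1` control tag, and the choice to store a SHA-256 digest of the prompt rather than the prompt text itself are assumptions for illustration, not a format any vendor has published.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class AuditRecord:
    """Hypothetical schema covering the five elements named in the Discipline 3 question."""
    change_id: str
    agent_id: str
    model_version: str
    prompt_sha256: str                      # digest of the exact prompt (assumes full text is stored elsewhere)
    human_approver: Optional[str]
    control_mappings: List[str] = field(default_factory=list)


def make_record(change_id: str, agent_id: str, model_version: str, prompt: str,
                human_approver: Optional[str], controls: List[str]) -> AuditRecord:
    # Hash the prompt so the record can later be matched against the stored prompt.
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return AuditRecord(change_id, agent_id, model_version, digest, human_approver, list(controls))


def missing_elements(record: AuditRecord) -> List[str]:
    """Name every element that is empty; a complete record returns []."""
    return [name for name, value in asdict(record).items() if value in (None, "", [])]


def to_json(record: AuditRecord) -> str:
    # Stable key order makes records easy to diff and to hand to an auditor.
    return json.dumps(asdict(record), sort_keys=True)
```

A vendor's sample record that fails a check like `missing_elements` is a strong hint that the "surrounding pipeline" is expected to supply the rest.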

Two more questions are worth asking, even though they don’t fit neatly into a single discipline. First: “What happens when the agent makes a confident mistake?” Confident mistakes are among the most common failure modes of LLM-based systems and among the most expensive bugs to catch in production. The vendor’s answer should describe an explicit detection-and-rollback path, not a vague “we have safeguards.”

Second: “How do you price as our agent volume scales?” AI delivery cost scales with token volume, parallel sessions, and gateway throughput. A vendor that prices per seat without acknowledging volume scaling will surprise the buyer at month six. The honest answer references token-spend metering and per-tenant quotas; see Integrating AI Agents into Your Existing DevOps Pipeline for the operational shape.
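Volume-aware pricing implies metering tokens per tenant rather than counting seats. The sketch below is a hypothetical in-memory meter, assuming quotas are expressed in tokens per billing period and that each LLM call reports its prompt and completion token counts; a production gateway would persist this state and reset it each period.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TokenMeter:
    """Hypothetical per-tenant token meter; quotas are tokens per billing period."""
    quotas: Dict[str, int]
    used: Dict[str, int] = field(default_factory=dict)

    def remaining(self, tenant: str) -> int:
        # Unknown tenants have a zero quota, so they are denied by default.
        return self.quotas.get(tenant, 0) - self.used.get(tenant, 0)

    def record(self, tenant: str, prompt_tokens: int, completion_tokens: int) -> bool:
        """Meter one LLM call. Return False and record nothing if it would exceed the quota."""
        if prompt_tokens < 0 or completion_tokens < 0:
            raise ValueError("token counts must be non-negative")
        cost = prompt_tokens + completion_tokens
        if cost > self.remaining(tenant):
            return False
        self.used[tenant] = self.used.get(tenant, 0) + cost
        return True
```

Every parallel session draws from the same tenant budget, so doubling the number of sessions roughly doubles spend. A per-seat price hides that; a meter like this makes it visible.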

Connecting the Two Series

This trilogy and the platform-comparison trilogy (Series 1A landing, 1B compliance, 1C integrations) reinforce each other. Series 1 evaluates specific platforms against specific criteria. Series 2 addresses the framing question.

The cross-link: the Series 1 criteria framework (compliance posture, integration depth, branch management) is the operationalisation of the Series 2 disciplines. Discipline 3 (evidence) is operationalised in Series 1B’s gate-by-gate analysis. Discipline 5 (policy enforcement) is operationalised in Series 1C’s branch-management posture. The two series are the same argument from two angles — buy the delivery process, not the developer agent.

Useful prior reading on the same theme: AI Governance Maturity Model, Building an AI Audit Trail, Quality Gates for AI-Generated Code, and Agent Workflows in Enterprise Software Development.

The Axiom Argument

VibeFlow’s positioning is straightforward against this framework. The platform’s structure makes Disciplines 1–5 first-class:

- Discipline 1 — Architect persona produces a design before the developer persona writes code; the design is a versioned artifact attached to the work item.

- Discipline 2 — QA Lead and Security Lead personas review the developer’s diff with their own priors and produce separate artifacts; review independence is structural.

- Discipline 3 — Every work item carries the agent identity, model, prompt, human approver, and compliance tags as part of the audit record.

- Discipline 4 — Per-todo branch + commit attribution + execution log lets the on-call engineer trace any production change back to the specific agent and prompt that produced it.

- Discipline 5 — Policy is enforced at the gateway layer (LLM Gateway, MCP Gateway, A2A Gateway) with OPA-style rules, not at the application layer.
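Gateway-layer enforcement means every LLM call, MCP tool call, A2A call, and merge passes through one policy decision before it happens. The sketch below is a Python stand-in for the deny-rule pattern common in OPA policies: collect every reason to deny, and allow only when that list is empty. Real OPA policies are written in Rego; the request fields, allow-lists, and request kinds here are hypothetical and are not VibeFlow's rule format.

```python
from typing import Any, Dict, List, Set

# Hypothetical policy data; in OPA this would be a data document evaluated by Rego rules.
POLICY: Dict[str, Set[str]] = {
    "llm": {"model-a", "model-b"},            # allow-listed models (LLM Gateway)
    "mcp": {"jira", "github"},                # allow-listed MCP servers (MCP Gateway)
    "a2a": {"qa-lead", "security-lead"},      # agents that may be called (A2A Gateway)
}
PROTECTED_BRANCHES = {"main"}

# Which request field names the target for each gateway kind.
TARGET_FIELD = {"llm": "model", "mcp": "server", "a2a": "agent"}


def deny(request: Dict[str, Any]) -> List[str]:
    """Return every reason to deny the request; an empty list means allow."""
    kind = request.get("kind")
    reasons: List[str] = []
    if kind in TARGET_FIELD:
        target = request.get(TARGET_FIELD[kind])
        if target not in POLICY[kind]:
            reasons.append(f"{kind} target {target!r} is not allow-listed")
    elif kind == "merge":
        if request.get("branch") in PROTECTED_BRANCHES and not request.get("human_approver"):
            reasons.append("merge to a protected branch requires a human approver")
    else:
        # Deny by default: unknown request kinds never pass the gateway.
        reasons.append(f"unknown request kind {kind!r}")
    return reasons


def allowed(request: Dict[str, Any]) -> bool:
    return not deny(request)
```

Because unknown request kinds fall through to a denial, a new agent or tool cannot bypass policy just by being new, which is the drift problem Discipline 5 exists to prevent.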

Pair that with AI Studio for visual workflow design, and the procurement story is “five disciplines, all first-class, on open protocols underneath.” The platform’s bet is that the discipline-fit is the differentiator — and over a 12-to-24-month horizon, that bet is the one we expect to win on enterprise buyers’ RFPs.

The Real Differentiator

Pick the platform whose delivery-process discipline matches the audit posture, integration footprint, and operational maturity your team actually has. Don’t pick on demo cleverness. Don’t pick on whether the AI-software-engineer pitch sounds confident. The right question is whether five years from now, when this platform’s output is in your production-incident postmortems and your auditor’s spreadsheets, the answer to “where is the evidence?” is short or long.

If the answer is short — three sentences and a link to the audit record — you bought the right platform. If the answer is long, with footnotes about which subsystem owns which piece, you bought the demo.
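One way to keep the answer to “where is the evidence?” that short is to attach attribution to every commit as Git trailers and generate the answer from them. This is a minimal sketch assuming hypothetical trailer keys (`Agent-Id`, `Model`, `Prompt-Id`, `Approved-by`); the parser handles only simple single-line trailers in the final paragraph of the commit message, a subset of what `git interpret-trailers` accepts.

```python
import re
from typing import Dict

# Simple "Key: value" trailer lines; keys are compared case-insensitively, as Git does.
TRAILER_RE = re.compile(r"^([A-Za-z0-9-]+):\s*(.+)$")
REQUIRED = ("agent-id", "model", "prompt-id", "approved-by")   # hypothetical trailer keys


def parse_trailers(message: str) -> Dict[str, str]:
    # Trailers live in the last paragraph, which must not be the subject line itself.
    blocks = message.strip().split("\n\n")
    if len(blocks) < 2:
        return {}
    trailers: Dict[str, str] = {}
    for line in blocks[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match:
            trailers[match.group(1).lower()] = match.group(2).strip()
    return trailers


def where_is_the_evidence(sha: str, message: str) -> str:
    t = parse_trailers(message)
    missing = [key for key in REQUIRED if key not in t]
    if missing:
        return f"Commit {sha} is missing: {', '.join(missing)}. Reconstruct by hand."
    return (f"Commit {sha} was produced by {t['agent-id']} on {t['model']} "
            f"from prompt {t['prompt-id']}. It was approved by {t['approved-by']}. "
            f"Roll back with: git revert {sha}")
```

`git revert <sha>` undoes a single commit; if the per-todo branch was merged with a merge commit, reverting that merge needs `git revert -m 1 <merge-sha>` instead.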

Engineering leaders making this call: read the role-aligned framings at /for/engineering-leaders, /for/ctos, /for/cisos, and /for/platform-teams. Compliance-side at /compliance/soc-2 and /compliance/nist-ai-rmf.

This series is done. Six articles across two trilogies — the platform comparison and the framing trilogy — make the case in one place. The next step is yours: start free with VibeFlow, or if you want to talk through which discipline-fit makes sense for your team, the VibeFlow product page has the demo and the contact paths.

Written by

AXIOM Team