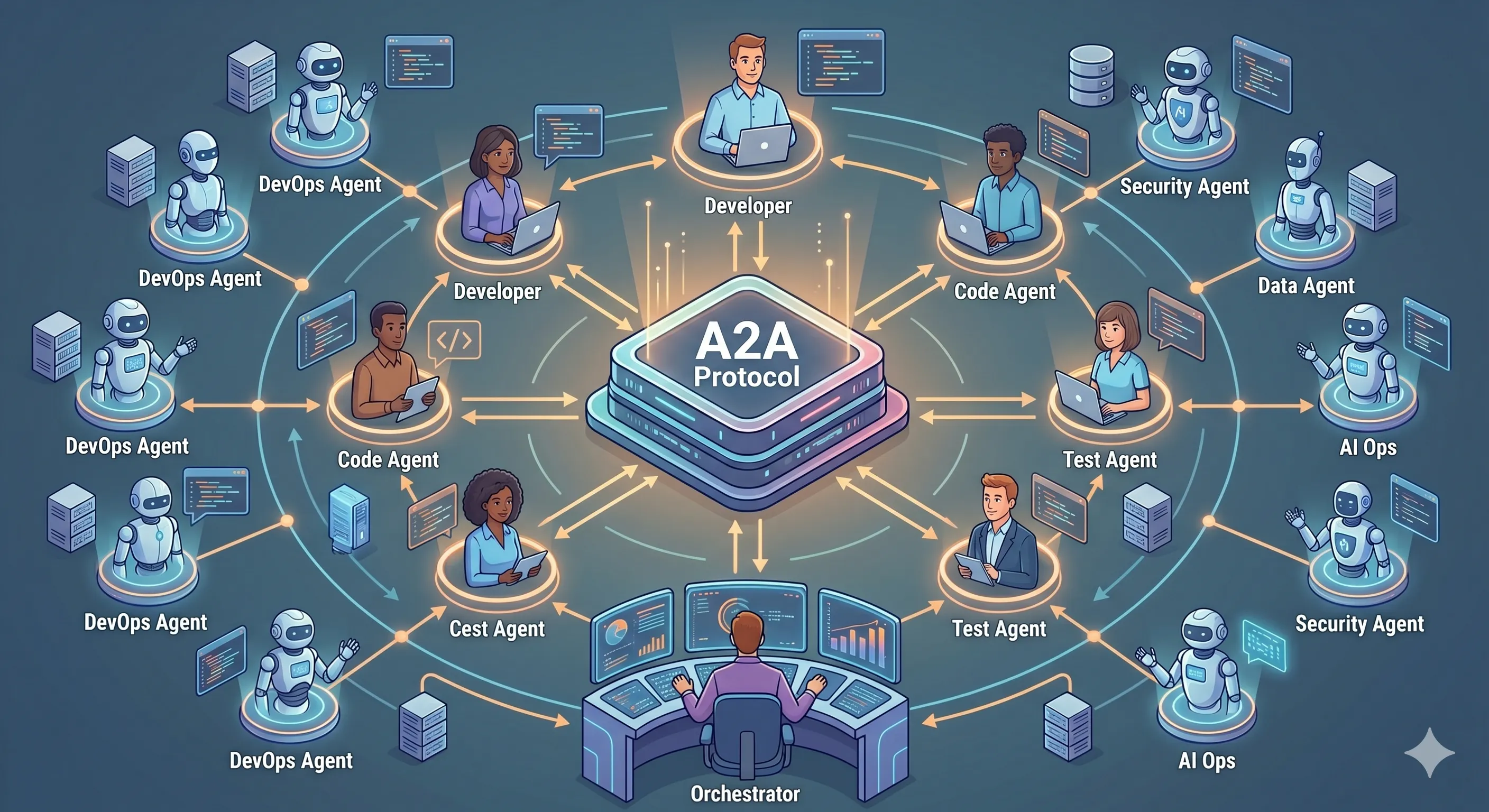

The A2A Protocol: Multi-Agent Orchestration for Software Teams

Agents have a tools protocol (MCP) and a teamwork protocol (A2A). Here's how A2A makes specialized AI agents from different vendors and teams actually cooperate on software work.

You can build a specialized agent. You can build a team of specialized agents. The minute you try to combine specialized agents from different vendors — your CI agent from one platform, your security review agent from another, your architecture agent built in-house — you discover that “agent” is not a protocol. Each one has its own input format, its own way of describing what it can do, its own opinion about what “task complete” means. Glue code multiplies. Audit boundaries blur. Nothing scales.

The Agent2Agent Protocol (A2A), open-sourced by Google in April 2025 and now governed by the Linux Foundation, is the missing convention that turns “agents from different worlds” into “agents on the same team.” The official spec lives at a2a-protocol.org and the reference implementations are on GitHub. For enterprise software development — where the agents you actually want to coordinate were almost certainly built by different teams, in different stacks, with different release cadences — A2A is the protocol that makes a multi-agent stack maintainable.

What A2A Actually Is

A2A is a JSON-RPC 2.0 protocol over HTTPS for communication between agents. Three primitives carry the whole protocol:

- Agent Card. A small JSON manifest, typically served at `/.well-known/agent.json`, that declares an agent’s identity, endpoints, supported skills, authentication requirements, and protocol version. Agent cards are the “I exist and here’s what I do” advertisement that makes discovery possible.

- Tasks. A task is the unit of work an agent accepts. Each task has a stateful lifecycle (`submitted` → `working` → `input-required` | `completed` | `failed` | `canceled`) and carries typed messages between client and server.

- Artifacts and parts. Outputs are typed artifacts composed of parts: text, structured data, files, references. The receiver knows how to interpret an artifact because the type is declared, not inferred.
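To make the Agent Card concrete, here is a minimal Python sketch of a manifest a security-review agent might publish. It also includes a helper a client could use to confirm a skill exists before delegating. The agent name, URL, and skill IDs are hypothetical. The field names (`name`, `url`, `version`, `capabilities`, `defaultInputModes`, `skills`) reflect our reading of the published spec, so verify them against the protocol version you target.

```python
import json
from typing import Optional

# Hypothetical Agent Card for an in-house security-review agent.
# Field names follow our reading of the A2A spec; check them against
# the protocol version you actually deploy.
AGENT_CARD_JSON = """
{
  "name": "security-review-agent",
  "description": "Runs SAST on a diff and returns typed findings.",
  "url": "https://agents.example.internal/security",
  "version": "1.4.0",
  "capabilities": {"streaming": true, "pushNotifications": false},
  "defaultInputModes": ["text"],
  "defaultOutputModes": ["application/json"],
  "skills": [
    {"id": "sast.scan", "name": "SAST scan",
     "description": "Static analysis of a unified diff."},
    {"id": "secrets.scan", "name": "Secret scan",
     "description": "Detects committed credentials."}
  ]
}
"""


def find_skill(card: dict, skill_id: str) -> Optional[dict]:
    """Return the skill entry with the given id, or None if the card lacks it."""
    for skill in card.get("skills", []):
        if skill.get("id") == skill_id:
            return skill
    return None


card = json.loads(AGENT_CARD_JSON)
skill = find_skill(card, "sast.scan")
if skill is not None:
    # prints "security-review-agent offers SAST scan at https://agents.example.internal/security"
    print(f"{card['name']} offers {skill['name']} at {card['url']}")
```

In practice the client would fetch this JSON over HTTPS rather than embed it. The lookup logic is the same either way: discovery is just reading a declared manifest.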

```mermaid
sequenceDiagram
    participant C as Client Agent
    participant R as Registry
    participant S as Server Agent
    C->>R: GET /.well-known/agent.json (discover)
    R-->>C: Agent Card (skills, auth, endpoints)
    C->>S: tasks/send (task with messages)
    S-->>C: task.status = working
    S-->>C: task.status = input-required
    C->>S: tasks/send (clarification)
    S-->>C: task.status = completed + artifacts
```

The point of the lifecycle is that long-running, multi-turn work is a first-class shape. The server can pause for input, stream incremental updates, or be canceled cleanly, all through the same task object the client is already polling or subscribing to.
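A client or gateway can enforce this lifecycle as a small state machine. The sketch below is a simplified model of the states named above, not a transcription of the spec. It assumes that `input-required` returns to `working` once the client answers, and that `completed`, `failed`, and `canceled` are terminal.

```python
from enum import Enum


class TaskState(str, Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELED = "canceled"


# Simplified transition table (an assumption for illustration, not normative spec text).
TRANSITIONS = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
    TaskState.WORKING: {TaskState.INPUT_REQUIRED, TaskState.COMPLETED,
                        TaskState.FAILED, TaskState.CANCELED},
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.CANCELED},
    TaskState.COMPLETED: set(),
    TaskState.FAILED: set(),
    TaskState.CANCELED: set(),
}


def advance(current: TaskState, nxt: TaskState) -> TaskState:
    """Return the new state, or raise ValueError if the transition is not allowed."""
    if nxt not in TRANSITIONS[current]:
        raise ValueError(f"illegal transition {current.value} -> {nxt.value}")
    return nxt


def is_terminal(state: TaskState) -> bool:
    """A state with no outgoing transitions is terminal."""
    return not TRANSITIONS[state]


# Replay the exchange from the diagram, assuming the server returns to
# "working" after the clarification arrives.
state = TaskState.SUBMITTED
for step in ["working", "input-required", "working", "completed"]:
    state = advance(state, TaskState(step))
print(state.value, is_terminal(state))  # completed True
```

Rejecting illegal transitions at the client or gateway surfaces a misbehaving server agent early. It never has to be discovered downstream from a half-finished artifact.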

Three interaction patterns round out the protocol. `tasks/send` is the synchronous request-response shape for short tasks. `tasks/sendSubscribe` opens a Server-Sent Events stream so the client receives `working`, `input-required`, and partial-artifact events as they happen. That is useful when an architect agent is iteratively producing a long design document. Finally, the `pushNotifications` capability lets the server agent call back to a client-supplied webhook for tasks that may run for hours, freeing the client from holding a connection open. Later spec revisions rename the first two methods `message/send` and `message/stream`; the interaction shapes are unchanged. The same task ID stitches the three shapes together, so a client can begin with a stream, switch to webhooks if the task is long, and still treat the artifact as the same logical unit of work.
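On the wire, each pattern is a JSON-RPC 2.0 request. The sketch below does two things:

- builds the request body for the first two patterns, using the method names this article uses;
- parses the `data:` lines of a Server-Sent Events stream into decoded events.

The params shape (`id`, `message`, `role`, `parts`) follows the early spec and is an assumption here. Later revisions rename the methods and some fields, so check the version you target. The task IDs and stream contents are hypothetical, and no HTTP client is included, to keep the sketch dependency-free.

```python
import json
import uuid
from typing import Iterable, Iterator


def build_request(task_id: str, text: str, stream: bool, request_id: int = 1) -> dict:
    """Build a JSON-RPC 2.0 envelope that sends one text message on a task."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tasks/sendSubscribe" if stream else "tasks/send",
        "params": {
            "id": task_id,  # the task ID that ties the interaction patterns together
            "message": {"role": "user", "parts": [{"type": "text", "text": text}]},
        },
    }


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one decoded JSON payload per SSE event; a blank line ends an event."""
    buffer: list[str] = []
    for line in lines:
        if line.startswith("data:"):
            buffer.append(line[len("data:"):].strip())
        elif line == "" and buffer:
            yield json.loads("\n".join(buffer))
            buffer = []
    if buffer:  # tolerate a stream that ends without a trailing blank line
        yield json.loads("\n".join(buffer))


task_id = str(uuid.uuid4())
# Body to POST to the server agent's url from its Agent Card.
body = json.dumps(build_request(task_id, "Draft a design doc for the billing service.", stream=True))

# A hypothetical stream excerpt, as the client would read it line by line.
sample_stream = [
    'data: {"jsonrpc": "2.0", "id": 1, "result": {"id": "t-1", "status": {"state": "working"}}}',
    "",
    'data: {"jsonrpc": "2.0", "id": 1, "result": {"id": "t-1", "status": {"state": "completed"}, "final": true}}',
    "",
]
states = [evt["result"]["status"]["state"] for evt in parse_sse(sample_stream)]
print(states)  # ['working', 'completed']
```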

A2A vs MCP — They Are Complementary, Not Competitive

Anthropic’s Model Context Protocol (MCP) and Google’s A2A solve adjacent problems, and the boundary is sharp.

| Property | MCP | A2A |
|---|---|---|
| Connects | Agent ↔ tools/data | Agent ↔ agent |
| Counterparty | Stateless tool server | Stateful peer agent |
| Unit of work | Tool call | Task (with lifecycle) |
| Discovery | Server lists tools | Agent Card |
| Transport | JSON-RPC (stdio, HTTP, SSE) | JSON-RPC over HTTPS |
| Maintained by | Anthropic + community | Linux Foundation |

The clean way to read it: MCP gives an agent capabilities; A2A gives an agent teammates. A real production system uses both. The architect agent uses MCP to read a repo; it uses A2A to delegate the implementation slice to a developer agent that may have been built by an entirely different team. We covered the MCP side in depth in MCP Architecture: The Enterprise Integration Pattern for AI Coding — A2A is the other half of the same picture.

What Multi-Agent Software Development Looks Like With A2A

Three high-leverage patterns map cleanly onto A2A.

- Coordinated code review. A reviewer agent receives a PR. It calls A2A skills on (a) a security review agent for SAST findings, (b) a test-quality agent for mutation-testing results, (c) a compliance agent for control-mapping evidence. Each result returns as a typed artifact. The reviewer composes a single review comment from typed inputs instead of stitching together free-text outputs from incompatible tools.

- Cross-team agent delegation. Your platform team owns the database-migration agent. Your product team’s feature agent needs a migration. With A2A, the feature agent discovers the migration agent’s card, calls its skill with a typed schema, and receives back the migration script as an artifact — all without either team owning the other team’s runtime.

- Automated architecture review. A senior architect agent receives proposed designs from feature agents across multiple teams. It returns recommendations as artifacts that downstream developer agents can consume directly — the loop closes without a human typing summaries between systems.
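The coordinated-review pattern is essentially a fan-in over typed artifacts. Below is a minimal sketch that assumes each downstream agent returns an artifact containing one structured data part. The `Artifact` and `Part` classes and the payload fields (`findings`, `mutation_score`, `controls`) are hypothetical stand-ins, not the spec's schema.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Part:
    kind: str      # e.g. "text" or "data" (hypothetical discriminator)
    content: Any


@dataclass
class Artifact:
    name: str
    parts: list[Part] = field(default_factory=list)

    def data(self) -> dict:
        """Return the first structured data part, or an empty dict."""
        for part in self.parts:
            if part.kind == "data":
                return part.content
        return {}


def compose_review(security: Artifact, tests: Artifact, compliance: Artifact) -> str:
    """Build one review comment from three typed artifacts instead of free text."""
    findings = security.data().get("findings", [])
    score = tests.data().get("mutation_score")
    controls = compliance.data().get("controls", [])
    lines = [f"Security: {len(findings)} finding(s)"]
    lines += [f"  - {item['severity']}: {item['rule']}" for item in findings]
    lines.append(f"Mutation score: {score:.0%}" if score is not None else "Mutation score: n/a")
    lines.append(f"Controls evidenced: {', '.join(controls) or 'none'}")
    return "\n".join(lines)


review = compose_review(
    Artifact("sast", [Part("data", {"findings": [{"severity": "high", "rule": "sql-injection"}]})]),
    Artifact("mutation", [Part("data", {"mutation_score": 0.82})]),
    Artifact("controls", [Part("data", {"controls": ["change-mgmt-01"]})]),  # hypothetical control ID
)
print(review)
```

Because each input is typed, the reviewer reads fields directly instead of parsing prose. A missing field degrades to an explicit "n/a" rather than a silently wrong comment.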

Each of these is technically possible without A2A, but in practice each pair of agents requires a custom adapter, and adapter sprawl is what kills internal multi-agent ambitions. A2A converts the n² adapter problem into an n-agent-card problem.
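The arithmetic behind that claim: with point-to-point integration, each pair of agents that must talk needs its own adapter. A fully connected set of n agents therefore needs n(n−1)/2 adapters, while with a shared protocol each agent publishes one card. A quick check follows. It assumes full connectivity; real deployments are usually sparser, so treat the pairwise figure as an upper bound.

```python
def pairwise_adapters(n: int) -> int:
    """Adapters needed if every pair of n agents gets a custom integration."""
    return n * (n - 1) // 2


def agent_cards(n: int) -> int:
    """With a shared protocol, each agent publishes exactly one card."""
    return n


for n in (3, 10, 30):
    print(n, pairwise_adapters(n), agent_cards(n))
# 3 3 3
# 10 45 10
# 30 435 30
```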

Concretely: a feature implementation session that used to require hand-stitched glue now reads as a sequence of typed A2A calls. The product agent files a task on the architect agent’s card and gets back a design-doc artifact. The architect agent files a task on the developer agent’s card and passes the design doc as an input artifact. The developer agent’s task produces a diff artifact, which the QA agent’s task consumes to produce a test-report artifact, which the security agent’s task consumes to produce an audit-record artifact. Every handoff is a typed payload with a stable task ID; every step’s output is the next step’s input; and the entire chain is reconstructable from the task graph. That same chain shape is what we walked through from the workflow-design angle in Agent Workflows in Enterprise Software Development — A2A is what makes those workflows portable across vendor boundaries.
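Because every handoff names its input and output artifacts, the chain can be rebuilt from task records alone. Here is a minimal sketch under two assumptions:

- records have a hypothetical shape (`task_id`, `agent`, `inputs`, `output`) that a gateway or log store would persist;
- the log is append-only, so record order is execution order.

It walks backward from one artifact to every task that contributed to it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    task_id: str
    agent: str
    inputs: tuple[str, ...]  # artifact IDs consumed
    output: str              # artifact ID produced


def lineage(records: list[TaskRecord], artifact_id: str) -> list[TaskRecord]:
    """Return every task that contributed to artifact_id, in log (execution) order."""
    producer = {r.output: r for r in records}
    contributing: set[str] = set()
    pending = [artifact_id]
    seen: set[str] = set()
    while pending:
        art = pending.pop()
        if art in seen or art not in producer:
            continue  # already visited, or an external input with no producing task
        seen.add(art)
        rec = producer[art]
        contributing.add(rec.task_id)
        pending.extend(rec.inputs)
    return [r for r in records if r.task_id in contributing]


log = [
    TaskRecord("t1", "architect", ("feature-request",), "design-doc"),
    TaskRecord("t2", "developer", ("design-doc",), "diff"),
    TaskRecord("t3", "qa", ("diff",), "test-report"),
    TaskRecord("t4", "security", ("test-report",), "audit-record"),
]
print([r.agent for r in lineage(log, "audit-record")])
# ['architect', 'developer', 'qa', 'security']
```

Ordering by log position rather than by traversal order keeps the result correct even when a task consumes several upstream artifacts.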

Security and Governance — Where Enterprises Actually Decide

Protocol elegance is necessary but not sufficient. The questions an enterprise architecture review will ask of any A2A deployment are operational, and A2A’s spec gives you the right primitives without prescribing the answers.

Authentication. A2A defers to standard web auth: agent cards declare the schemes they accept (OAuth 2.0, API keys, mTLS). The discipline is that every inter-agent call carries an identity and a scope, never an unbounded session token. The threat model we walked through for autonomous code generation in The CISO’s Guide to AI Agent Security applies here too.

Authorization. Skills on the agent card are the authorization unit. A client agent allowed to call migration.preview is not necessarily allowed to call migration.apply. Encode the policy in the gateway, not in the agent’s prompt — prompts are not a security boundary.
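Here is a minimal sketch of that gateway-side check. It assumes the gateway has already authenticated the caller and extracted its identity and granted scopes, for example from an OAuth 2.0 access token. It also assumes scopes are named after skill IDs. The policy table, the agent names, and the rule that calls without explicit scopes are rejected are all illustrative assumptions, not part of the A2A spec.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallContext:
    caller: str              # authenticated client-agent identity
    scopes: frozenset[str]   # scopes granted to this specific call
    skill: str               # skill ID requested on the server agent's card


# Hypothetical policy: which caller may invoke which skill.
POLICY: dict[str, set[str]] = {
    "feature-agent": {"migration.preview"},
    "release-agent": {"migration.preview", "migration.apply"},
}


def authorize(ctx: CallContext) -> bool:
    """Allow only a named caller, holding an explicit scope, on a skill its policy permits."""
    if not ctx.caller or not ctx.scopes or "*" in ctx.scopes:
        return False  # reject anonymous calls and unbounded/wildcard tokens
    if ctx.skill not in ctx.scopes:
        return False  # the token must be scoped to this skill
    return ctx.skill in POLICY.get(ctx.caller, set())


preview = CallContext("feature-agent", frozenset({"migration.preview"}), "migration.preview")
apply_ = CallContext("feature-agent", frozenset({"migration.apply"}), "migration.apply")
print(authorize(preview), authorize(apply_))  # True False
```

Both the token scope and the policy table must agree before a call passes. A leaked or over-broad token still cannot reach `migration.apply` for a caller whose policy does not list it.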

Audit trail. Every task carries a stable ID; every state transition is loggable. Capture: caller identity, callee skill, task ID, message contents, artifacts emitted, final status. This record is what becomes SOC 2 and NIST AI RMF evidence — not “we used AI agents,” but “this specific task executed by these specific agents produced this specific artifact.”
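One way to capture exactly those fields is an append-only JSON Lines record per task, as in this sketch. The field names and the `recorded_at` timestamp are our own choices, and the caller, skill, and task values are hypothetical. Sorting keys keeps every line byte-comparable across writers.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    caller: str              # authenticated client-agent identity
    callee_skill: str        # skill invoked on the server agent
    task_id: str             # stable A2A task ID
    messages: list[str]      # message contents exchanged on the task
    artifacts: list[str]     # IDs of artifacts emitted
    final_status: str        # terminal task state
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_jsonl(self) -> str:
        """Serialize as one JSON line with stable key order for an append-only store."""
        return json.dumps(asdict(self), sort_keys=True)


rec = AuditRecord(
    caller="reviewer-agent",
    callee_skill="sast.scan",
    task_id="t-42",
    messages=["Scan the attached diff for injection flaws."],
    artifacts=["sast-findings-t-42"],
    final_status="completed",
)
line = rec.to_jsonl()
print(line)
```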

Rate and cost controls. Multi-agent deployments compound token costs. The gateway is where you enforce per-agent and per-tenant quotas, log spend, and reject runaway delegation chains.
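A sketch of two such controls, assuming the gateway sees every hop:

- a per-tenant token budget;
- a cap on delegation depth.

The limits, names, and the idea of propagating a hop count with each call are illustrative assumptions. These are gateway policies, not mechanisms we are aware of A2A itself defining.

```python
from collections import defaultdict


class BudgetExceeded(Exception):
    """Raised when a call would break a tenant budget or the delegation-depth cap."""


class CostGuard:
    """Per-tenant token budget plus a maximum delegation depth."""

    def __init__(self, budgets: dict[str, int], max_depth: int = 4):
        self.budgets = budgets
        self.max_depth = max_depth
        self.spent: dict[str, int] = defaultdict(int)

    def admit(self, tenant: str, estimated_tokens: int, depth: int) -> None:
        """Record the spend, or raise if the call exceeds the budget or depth cap."""
        if depth > self.max_depth:
            raise BudgetExceeded(f"delegation depth {depth} > {self.max_depth}")
        remaining = self.budgets.get(tenant, 0) - self.spent[tenant]
        if estimated_tokens > remaining:
            raise BudgetExceeded(f"{tenant}: needs {estimated_tokens}, has {remaining}")
        self.spent[tenant] += estimated_tokens  # log spend at admission time


guard = CostGuard({"team-a": 10_000}, max_depth=3)
guard.admit("team-a", 6_000, depth=1)
try:
    guard.admit("team-a", 6_000, depth=2)  # would exceed the remaining 4,000
except BudgetExceeded as err:
    print("rejected:", err)  # rejected: team-a: needs 6000, has 4000
```

Charging an estimate at admission is the simplest safe default. A production gateway would likely reconcile against actual usage once the task reaches a terminal state.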

```mermaid
flowchart LR
    A[Client Agent] --> G[A2A Gateway]
    G --> |authn/authz| P[Policy Engine]
    G --> |audit| L[Log Store]
    G --> |rate/cost| Q[Quota Service]
    G --> S1[Server Agent 1]
    G --> S2[Server Agent 2]
    G --> S3[Server Agent 3]
```

The gateway is not a single point of failure if you treat it as infrastructure: stateless, horizontally scalable, with the same operational maturity you’d apply to any internal API gateway. Protocol-level governance on top of an open protocol is the deployable shape.

Axiom’s A2A Gateway

Axiom’s A2A Gateway is exactly the gateway shape above, configured for the enterprise software development stack. Agents register their cards; the gateway handles authentication, scope-based authorization, audit logging, and per-tenant cost controls. It pairs with our MCP Gateway (for agent-to-tool) and AI Studio (for declaring multi-agent workflows) so the full stack reads as one platform — but each component is independently useful, and the protocols underneath are open. Platform teams running this in production can read the operational model at /for/platform-teams.

What it gives you in practice: drop a new agent in the registry, declare its skills in the card, and existing client agents discover it without redeploys. The “team of agents” becomes an operational concept your platform team can actually own — like a service mesh, but for agents.

Pick the Protocol, Then Pick the Platform

A2A is in the same category of decision as picking HTTP, gRPC, or Kafka — choose the protocol once, and every later integration becomes cheaper. Pick the wrong shape and every new agent costs you a custom adapter forever. The protocol is open, the spec is mature, and the reference implementations are good enough to start from. Adopt the convention; let the gateway handle the operational concerns.

Ready to coordinate agents across teams? Explore the A2A Gateway, pair it with the MCP Gateway, and design the workflows in AI Studio. Or start free.

Written by

AXIOM Team