Weekly AI Command: The Recap (March 15, 2026)

The pace of AI development is no longer measured in months or quarters. It is measured in days. This week alone, we witnessed the release of two frontier-grade models, a geopolitical standoff involving the world’s most advanced LLMs, and a hardware pivot that signals a shift in the global compute economy.

For the enterprise, these are not just headlines. They are operational risks and opportunities that require immediate governance. At AXIOM Studio, we track these shifts so you can maintain execution without sacrificing security.

Welcome to the first edition of the Weekly AI Command.

The Frontier Push: GPT-5.4 and the Excel Integration

OpenAI moved fast this week. Following the quiet release of GPT-5.3 Instant earlier in the month, they have officially launched GPT-5.4. This is a model designed for professional execution, featuring a 1-million-token context window and native “computer-use” capabilities.

The standout update for the enterprise is the deep integration with Microsoft Excel. This is not just a plugin; it is a native reasoning layer. GPT-5.4 can now perform complex financial modeling, cross-reference massive datasets, and generate pivot tables through natural language, all within the spreadsheet itself.

While the productivity gains are obvious, the governance implications are severe. Moving sensitive corporate data into an AI-driven spreadsheet creates new vectors for shadow AI. If your team is using these features without a centralized LLM Gateway, you have lost visibility into what data is leaving your perimeter.
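
As a rough illustration of the visibility a gateway can restore, the sketch below redacts sensitive values from an outbound prompt and records what it found. The `GatewayLog` class, the field names, and the two regex patterns are assumptions made for this example; they are not part of any vendor's product, and a production deployment would rely on a vetted data-loss-prevention ruleset.

```python
import re
from dataclasses import dataclass, field

# Illustrative patterns only (assumption): real rulesets are far broader.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class GatewayLog:
    """Hypothetical in-memory audit log for outbound prompts."""

    records: list = field(default_factory=list)

    def inspect(self, user: str, model: str, prompt: str) -> str:
        """Redact matches, record which pattern types fired, return the safe prompt."""
        findings = []
        redacted = prompt
        for label, pattern in SENSITIVE_PATTERNS.items():
            # subn returns the rewritten string and the number of replacements.
            redacted, count = pattern.subn(f"[{label.upper()}]", redacted)
            if count:
                findings.append(label)
        self.records.append({"user": user, "model": model, "findings": findings})
        return redacted


log = GatewayLog()
safe = log.inspect(
    "analyst-1", "example-model", "Contact jane@example.com, SSN 123-45-6789"
)
print(safe)
print(log.records)
```

The point of the design is that the redacted prompt, not the original, is what would be forwarded to the model, while the log keeps a record of who sent what kind of data.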

The Geopolitical Rift: Anthropic vs. The Pentagon

The standoff between the U.S. government and Anthropic reached a breaking point this week. The administration has ordered federal agencies to cease the use of Claude models, following Anthropic’s refusal to lift restrictions on autonomous weapons targeting and specific surveillance use cases.

Despite this friction, Anthropic released Claude 4.6 Sonnet. It is an impressive model, yet its utility in the public sector is now effectively neutralized.

This creates a massive vacuum. Google was quick to fill it, announcing an expansion of its partnership with the Department of Defense (DoD) alongside the release of Gemini 3.1. For enterprise leaders, the takeaway is clear: model sovereignty is the new corporate priority.

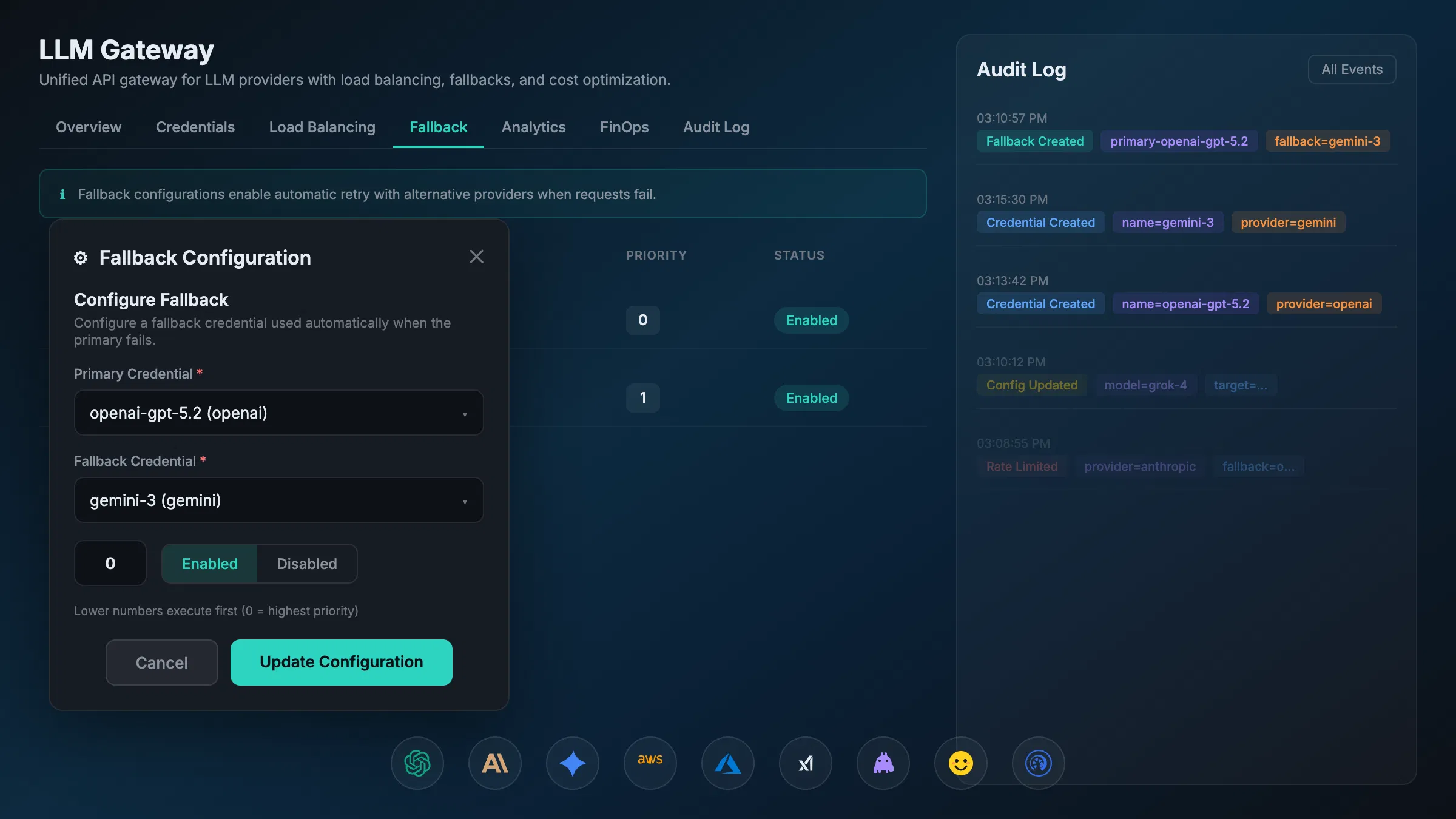

Relying on a single provider is a strategic failure. If your infrastructure is locked into a provider that falls out of favor with regulators or government entities, your operations stall. We recommend a multi-model strategy managed through a robust AI Gateway to ensure business continuity.
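
A minimal sketch of what multi-model failover can look like in code follows. The provider callables here are stand-ins invented for the example; in practice each would wrap a vendor SDK, and a real gateway would catch narrower error types and add timeouts and retries.

```python
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each provider in priority order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose for this sketch
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def blocked_provider(prompt: str) -> str:
    raise ConnectionError("provider unavailable")


def backup_provider(prompt: str) -> str:
    return f"echo: {prompt}"


used, answer = complete_with_failover(
    "Summarize Q1 revenue.",
    [("primary", blocked_provider), ("backup", backup_provider)],
)
print(used, answer)
```

Keeping the provider list as ordered data, rather than hard-coding one client, is what makes swapping or reprioritizing models a configuration change instead of a rewrite.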

Infrastructure Diversification: Meta and AMD

For years, Nvidia has held a virtual monopoly on the compute required to train and run frontier models. This week, Meta signaled the beginning of the end for that era. Meta announced a massive hardware deal with AMD, shifting a significant portion of its inference workload to AMD’s latest Instinct accelerators.

This move is about more than cost; it is about supply chain resilience. As an enterprise, your AI FinOps strategy must account for these shifts. Hardware diversification can lead to more competitive token pricing and more localized compute options.

The Security Wake-up Call: GitHub and Terraform

The “move fast and break things” mentality of vibecoding had a rough week. A sophisticated malware campaign targeted GitHub repositories, using AI-generated code snippets to inject backdoors into popular open-source libraries.

Simultaneously, a massive misconfiguration led to a “Terraform database wipe” at a major cloud provider, highlighting the dangers of autonomous agents operating without human-in-the-loop oversight.

We see this as a validation of our stance: AI cannot be left to “vibe” its way through production environments. It requires AI Observability and strict AI Compliance frameworks.
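
One way to picture human-in-the-loop oversight is an approval gate that sits between an agent and the shell. The sketch below is an assumption-laden example, not a description of any incident or product: the marker list and the `review_agent_command` helper are hypothetical, and simple substring matching is far cruder than a real policy engine.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical markers of destructive operations; tune for your own tooling.
DESTRUCTIVE_MARKERS = ("destroy", "drop ", "delete", "rm -rf")


@dataclass
class Decision:
    command: str
    allowed: bool
    reason: str


def review_agent_command(command: str, approver: Callable[[str], bool]) -> Decision:
    """Allow safe commands; route destructive ones to a human approver."""
    lowered = command.lower()
    if not any(marker in lowered for marker in DESTRUCTIVE_MARKERS):
        return Decision(command, True, "non-destructive")
    if approver(command):
        return Decision(command, True, "approved by human")
    return Decision(command, False, "blocked pending human approval")


def deny_all(command: str) -> bool:
    """Stand-in approver that rejects everything, as if nobody has signed off."""
    return False


# Every decision is kept, so the list doubles as an audit trail.
audit_trail = [
    review_agent_command("terraform plan", deny_all),
    review_agent_command("terraform destroy -auto-approve", deny_all),
]
for decision in audit_trail:
    print(decision)
```

Here the read-only plan passes through, while the destroy command is held until a person approves it.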

Why “Vibecoding” Is a Risk to Your Operations

The term “vibecoding” has gained traction lately. It describes a style of development in which developers use AI to build applications based on “vibes” rather than rigorous architecture. This week’s security incidents show why this is a liability.

When agents interact with your infrastructure, whether via the Model Context Protocol or custom integrations, they must do so within a governed environment.

At AXIOM Studio, we developed VibeFlow to bridge this gap. It preserves the rapid experimentation of vibecoding but wraps it in the security, logging, and auditability that the enterprise requires.

A Summary of Command

As we look toward the next week, the trends are undeniable:

- Agentic Capabilities are Native: OpenAI’s and Anthropic’s models are no longer just chatbots; they are operating systems. Your Agentic AI Development needs to start now, but it must be governed.

- Regulation is Bifurcating: The split between Anthropic and the DoD shows that “safety” means different things to different stakeholders. You need the flexibility to swap models instantly.

- Security is Non-Negotiable: AI-generated malware is real. AI Security must be built in at the gateway level, not added as an afterthought.

Take Action This Week

If your organization is currently using AI without a centralized control plane, you are operating in the dark. The events of March 8-15 prove that the landscape changes too quickly for manual oversight.

- Audit your LLM usage: Identify where GPT-5.4 is being used within Excel and who has access.

- Diversify your providers: Ensure you have access to Gemini 3.1 and Claude 4.6 as fallbacks for one another.

- Implement a Gateway: Use an A2A Gateway to manage agent-to-agent communications securely.
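
The audit step above can start as something very small. The sketch below assumes you already have gateway log records with `user`, `model`, and `app` fields; that schema, the sample rows, and the model identifier strings are made up for illustration.

```python
from collections import defaultdict

# Made-up sample records; the field names are an assumed schema.
usage_log = [
    {"user": "alice", "model": "gpt-5.4", "app": "excel"},
    {"user": "bob", "model": "gpt-5.4", "app": "excel"},
    {"user": "alice", "model": "claude-4.6-sonnet", "app": "ide"},
    {"user": "alice", "model": "gpt-5.4", "app": "excel"},
]


def users_by_model_and_app(records):
    """Group distinct users under each (model, app) pair."""
    summary = defaultdict(set)
    for record in records:
        summary[(record["model"], record["app"])].add(record["user"])
    # Sort the users so the report is stable across runs.
    return {key: sorted(users) for key, users in summary.items()}


report = users_by_model_and_app(usage_log)
for (model, app), users in report.items():
    print(f"{model} via {app}: {', '.join(users)}")
```

A report like this answers the first audit question directly: which models are reachable from which applications, and by whom.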

The goal isn’t just to use AI. The goal is to command it.

Execution matters. Governance makes it possible. We will see you next Sunday for the next recap.

For a deeper dive into how to secure your infrastructure against these emerging threats, visit our guide on AI Governance Fundamentals. Ready to take control? Get started with AXIOM for free today.

Frequently Asked Questions

What are the enterprise risks of GPT-5.4’s Excel integration?

GPT-5.4’s native Excel integration creates new shadow AI vectors by enabling AI-driven financial modeling directly in spreadsheets. Without a centralized LLM Gateway, organizations lose visibility into what sensitive corporate data is being processed by the model.

Why does model sovereignty matter for enterprise AI strategy?

The Anthropic–Pentagon standoff demonstrates that relying on a single AI provider is a strategic vulnerability. If your provider falls out of regulatory or governmental favor, your operations can stall overnight. A multi-model strategy with instant failover is essential.

How does AI-generated malware affect enterprise security posture?

AI-generated code injection campaigns, like the one targeting GitHub repositories this week, can embed backdoors in open-source dependencies your teams rely on. Enterprise AI security must include gateway-level scanning and strict compliance frameworks.

What is shadow AI and why is it dangerous for enterprises?

Shadow AI occurs when teams adopt AI tools without centralized oversight. This creates blind spots in security, compliance, and cost management. An AI Gateway provides a single control plane to monitor and govern all LLM usage across the organization.

How can I protect my organization from these emerging AI threats?

Get started with AXIOM for free to implement enterprise-grade AI governance with centralized visibility, multi-model failover, and real-time compliance monitoring across your entire AI stack.

Written by

AXIOM Team