From Cursor to Copilot: The Enterprise Guide to Governing Agentic Coding Tools

Your developers are shipping code written by agents. Not suggested by AI: written, tested, and committed by autonomous systems that navigate your codebase, execute terminal commands, and fix their own bugs.

This isn’t next year’s problem. It’s happening now.

The tools evolved faster than the governance frameworks. GitHub Copilot started as autocomplete on steroids. Cursor and its peers became autonomous coding agents. The difference matters more than most CTOs realize.

The Line Between Assistant and Agent

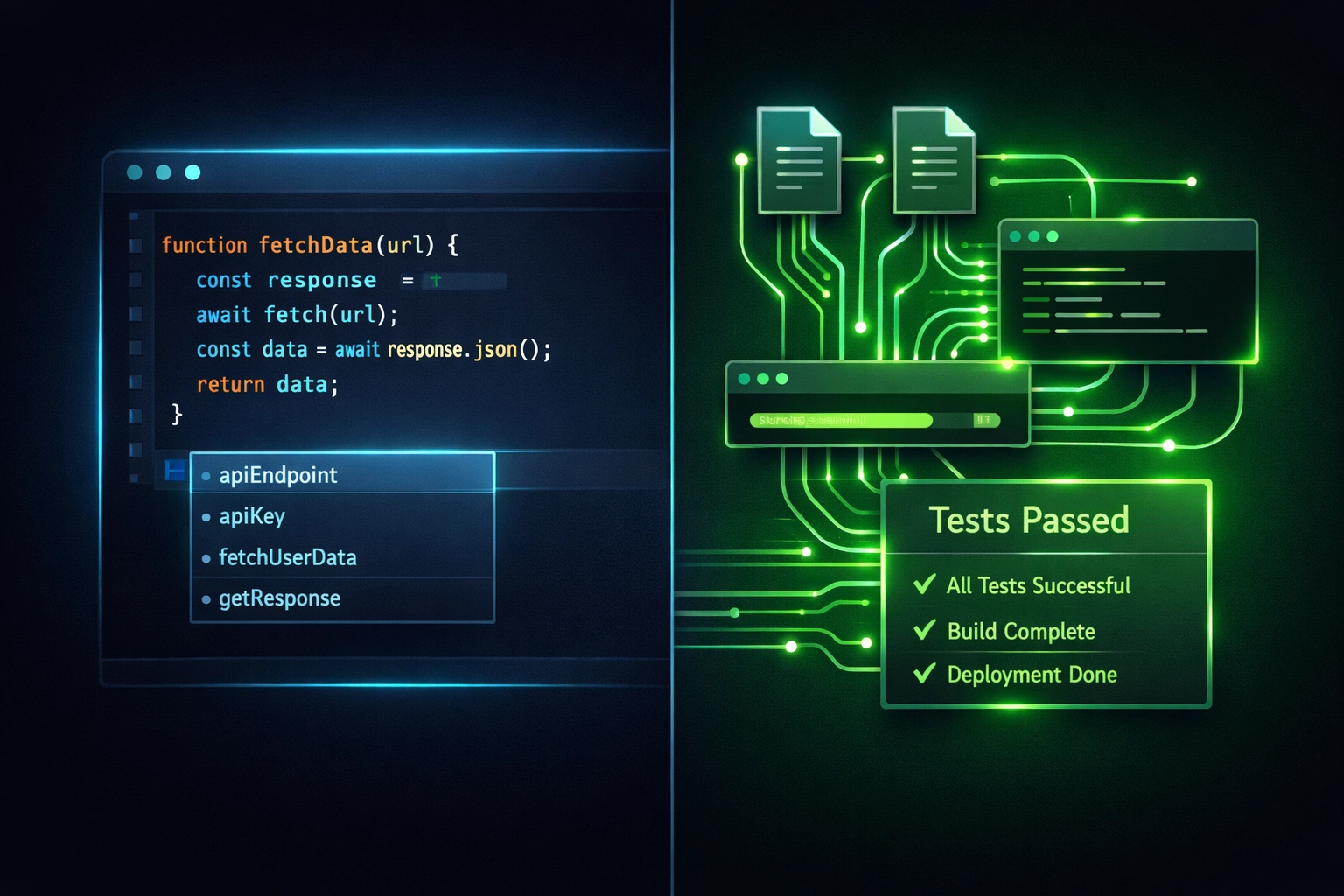

Copilot suggests. Agents execute.

That distinction changes everything about how enterprises need to think about AI in the development lifecycle. Traditional coding assistants present options. You review, accept, or reject. The human stays in the driver’s seat for every decision.

Agentic tools autonomously complete entire tasks. They plan multi-file changes, navigate directory structures, run tests, interpret error messages, and iterate until the job is done. The human defines the objective. The agent figures out the path.

This shift mirrors what we’ve seen before: virtualization, cloud migration, containerization. Each time, the industry moves from manual control to autonomous orchestration. Each time, enterprises scramble to retrofit governance onto tools built for speed, not oversight.

What Actually Changes (And Why It Matters)

When an agent has file system access and command execution privileges, it operates with the same technical capabilities as a developer. Not similar capabilities. The same ones.

That means governance requirements escalate immediately. You wouldn’t give a new hire unrestricted access to production codebases on day one. You shouldn’t give it to an AI agent either, even if that agent lives in your senior engineer’s IDE.

The risks compound fast:

- Agents commit code without human review loops

- Autonomous systems access proprietary data and business logic

- Generated code might violate licensing, compliance, or security policies

- Organizations lose visibility into what code came from where

- Audit trails vanish when agents work in local environments

Traditional copilot deployments operated per-user, per-IDE. Experimental. Contained. Agentic systems require treating AI as shared infrastructure: production-grade platforms with enterprise controls, not developer tools that happen to use AI.

The Four Pillars of Agentic Governance

Enterprises governing agentic coding tools successfully build on four foundational capabilities.

Infrastructure control comes first. Agents must execute inside enterprise networks with direct access to actual codebases, production data schemas, and organizational policies. Cloud IDEs disconnected from your real environment can produce code that compiles in theory but fails in practice. This means deploying agents that interact with your Git repositories, CI/CD pipelines, and testing frameworks, not isolated playgrounds.

Comprehensive observability comes next. Every agent action needs logging, auditability, and reversibility. Who triggered which agent? What files did it modify? Which APIs did it call? What reasoning led to each decision? Without audit trails, you’re flying blind when something breaks or compliance questions arise. Leading governance platforms provide context-aware risk scoring, role-based access controls, and real-time monitoring out of the box.
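To make the audit questions above concrete, here is a minimal Python sketch of what one logged agent action could look like. The record shape, the field names, and the helper functions are illustrative assumptions, not the schema of any particular governance platform.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AgentAuditEvent:
    """One logged agent action. All field names are illustrative assumptions."""
    triggered_by: str  # who triggered the agent
    agent: str         # which agent ran
    action: str        # e.g. "modify_file", "call_api", "run_command"
    target: str        # file path, API endpoint, or command line
    rationale: str     # reasoning summary the agent reported for this step
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(event):
    """Serialize an event as one JSON line for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


def files_modified_by(events, user):
    """Answer: which files did agents triggered by this user modify?"""
    return sorted(
        {e.target for e in events
         if e.triggered_by == user and e.action == "modify_file"}
    )


events = [
    AgentAuditEvent("alice", "refactor-agent", "modify_file", "src/b.py", "rename helper"),
    AgentAuditEvent("alice", "refactor-agent", "modify_file", "src/a.py", "update import"),
    AgentAuditEvent("alice", "refactor-agent", "run_command", "pytest -q", "verify change"),
    AgentAuditEvent("bob", "docs-agent", "modify_file", "README.md", "fix typo"),
]
print(files_modified_by(events, "alice"))
```

Writing one structured line per action keeps the log easy to ship to whatever monitoring system you already run, and questions like "what did this agent touch?" become simple filters.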

Structured objectives prevent chaos. Agentic systems need precise task definitions, success criteria, and validation frameworks. Vague instructions produce unpredictable outputs. Clear boundaries and organizational policy enforcement, through guardrails that restrict unsafe behaviors, keep agents productive without introducing unmanaged risk.
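As a rough illustration, a structured objective can be written down as data, with guardrails checked before the agent touches a file or runs a command. The sketch below is one possible shape in Python. The `AgentTask` fields, the allowlists, and the rejection rules are assumptions made for the example, and a real deployment would need stricter checks than these.

```python
import shlex
from dataclasses import dataclass
from pathlib import PurePosixPath

# Characters that would let one command line chain or redirect into another.
SHELL_METACHARACTERS = set(";|&`$<>")


@dataclass(frozen=True)
class AgentTask:
    """Hypothetical task definition: an objective plus explicit boundaries."""
    objective: str
    success_criteria: tuple[str, ...]
    allowed_paths: tuple[str, ...]     # repo-relative directories the agent may edit
    allowed_commands: tuple[str, ...]  # executables the agent may invoke


def command_allowed(task, command_line):
    """Allow only allowlisted executables, with no shell chaining or redirection."""
    if SHELL_METACHARACTERS & set(command_line):
        return False
    try:
        argv = shlex.split(command_line)
    except ValueError:  # e.g. unbalanced quotes
        return False
    return bool(argv) and argv[0] in task.allowed_commands


def path_allowed(task, path):
    """Allow only repo-relative paths inside an approved directory."""
    p = PurePosixPath(path)
    if p.is_absolute() or ".." in p.parts:
        return False
    return any(p.is_relative_to(root) for root in task.allowed_paths)


task = AgentTask(
    objective="Replace print statements with structured logging in billing",
    success_criteria=("unit tests pass", "no new lint warnings"),
    allowed_paths=("src/billing", "tests/billing"),
    allowed_commands=("pytest", "git"),
)
print(command_allowed(task, "pytest -q tests/billing"))
print(path_allowed(task, "src/auth/keys.py"))
```

The point of the design is that the boundaries travel with the task, so the same check can run in the agent harness and again in review.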

Integration validates everything. Output should be verified, ready-to-commit code that has already passed compilation, testing, and validation, not drafts requiring human debugging. This demands tight integration with your actual development workflows: Git, CI/CD, automated test suites. These aren’t optional enhancements. They’re prerequisites for safe agentic operation.
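One way to picture that gate is a small runner that executes each check in order and only marks agent output as ready to commit when every check passes. The Python sketch below assumes hypothetical check names and uses trivial stand-in commands. In practice the commands would be your own compile, test, and validation steps.

```python
import subprocess
import sys


def run_gate(checks, cwd="."):
    """Run (name, argv) checks in order and stop at the first failure."""
    results = []
    for name, argv in checks:
        proc = subprocess.run(argv, cwd=cwd, capture_output=True, text=True)
        passed = proc.returncode == 0
        results.append((name, passed))
        if not passed:
            break
    return results


def ready_to_commit(results, expected_checks):
    """Ready only if every expected check ran and every one passed."""
    return len(results) == expected_checks and all(ok for _, ok in results)


# Stand-in commands so the sketch runs anywhere; replace them with real steps.
checks = [
    ("compile", [sys.executable, "-c", "pass"]),
    ("tests", [sys.executable, "-c", "import sys; sys.exit(1)"]),
    ("validation", [sys.executable, "-c", "pass"]),
]
results = run_gate(checks)
print(results, ready_to_commit(results, len(checks)))
```

Stopping at the first failure keeps feedback to the agent short and specific, which suits the iterate-until-done loop described earlier.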

Governance Architecture That Actually Works

The difference between managed chaos and controlled deployment shows up in architectural decisions.

Organizations winning at agentic governance deploy managed configuration files to system directories, controlling which models, servers, and integrations developers can access. This prevents shadow AI sprawl: developers spinning up unapproved tools that bypass enterprise controls.
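For illustration only, here is how a managed policy might be checked against what a developer's tool requests. The JSON schema, the model and server names, and the `violations` helper are all hypothetical. Each real tool defines its own managed-settings format and file location.

```python
import json

# Hypothetical policy schema; a real tool's managed settings will differ.
MANAGED_POLICY_JSON = """
{
  "allowed_models": ["approved-model-a", "approved-model-b"],
  "allowed_servers": ["internal-docs", "internal-ci"]
}
"""


def load_policy(text):
    """Parse the managed policy into sets for fast membership checks."""
    raw = json.loads(text)
    return {
        "allowed_models": frozenset(raw["allowed_models"]),
        "allowed_servers": frozenset(raw["allowed_servers"]),
    }


def violations(policy, requested):
    """List every way a requested configuration departs from the policy."""
    problems = []
    model = requested.get("model")
    if model not in policy["allowed_models"]:
        problems.append(f"model not approved: {model}")
    for server in requested.get("servers", []):
        if server not in policy["allowed_servers"]:
            problems.append(f"server not approved: {server}")
    return problems


policy = load_policy(MANAGED_POLICY_JSON)
request = {"model": "personal-model", "servers": ["internal-ci", "random-plugin"]}
print(violations(policy, request))
```

Returning the full list of violations, rather than failing on the first, gives platform teams a clearer picture of how far a local setup has drifted from the approved baseline.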

Governance add-ons separate visibility from velocity. Platform and security teams get auditable controls over AI usage without disrupting developer workflows. Developers keep their speed. Security keeps their sanity.

AI governance dashboards provide full visibility into agent decisions, actions, and performance. This enables enterprises to trace end-to-end workflows, monitor reasoning processes, and ensure compliance with GDPR, HIPAA, ISO, and industry-specific regulations. Not just checkbox compliance: actual operational readiness when auditors come knocking.

Multi-agent orchestration takes this further. Advanced platforms manage parallel agent exploration, comparing outputs algorithmically to identify optimal results while maintaining transparency about decision-making processes. You get better code and better visibility.
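A toy version of that comparison can be sketched in a few lines of Python. The scoring rule here (higher test pass rate first, then the smaller diff) is an assumption chosen for the example, not a description of how any specific platform ranks agent output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """Result of one agent's attempt at the same task (hypothetical fields)."""
    agent: str
    tests_passed: int
    tests_total: int
    lines_changed: int


def pass_rate(c):
    """Fraction of tests passed; an attempt with no tests scores zero."""
    return c.tests_passed / c.tests_total if c.tests_total else 0.0


def rank(candidates):
    """Order candidates best-first: pass rate, then smaller diff, then name."""
    return sorted(candidates, key=lambda c: (-pass_rate(c), c.lines_changed, c.agent))


def explain(c):
    """Produce a human-readable reason so the choice stays transparent."""
    return f"{c.agent}: {c.tests_passed}/{c.tests_total} tests, {c.lines_changed} lines changed"


candidates = [
    Candidate("agent-a", tests_passed=48, tests_total=50, lines_changed=120),
    Candidate("agent-b", tests_passed=50, tests_total=50, lines_changed=310),
    Candidate("agent-c", tests_passed=50, tests_total=50, lines_changed=95),
]
for c in rank(candidates):
    print(explain(c))
```

Keeping the ranking key explicit and printing the reason for each placement is what preserves the transparency: a reviewer can see why one result won.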

How Development Work Actually Changes

The center of gravity moves from the IDE to the orchestration layer.

This isn’t a minor UX shift. It fundamentally reshapes how development work gets organized. The valuable work becomes defining what needs building, orchestrating agents to build it, validating their output, and selecting the best results.

The IDE transitions from primary workspace to supporting tool, used when inspecting agent output or making targeted manual refinements. Developers spend less time writing boilerplate and more time on architectural decisions, business logic validation, and quality assurance.

This architectural shift enables enterprises to tackle projects that were previously impractical:

- Converting legacy RPG and COBOL systems at scale

- Refactoring monolithic database architectures

- Migrating frameworks across hundreds of microservices

- Standardizing security patterns across disparate codebases

But only if governance keeps pace. Speed without control creates technical debt at AI scale, faster than human developers ever could.

What Sovereignty Actually Means

Data sovereignty isn’t buzzword compliance theater. It’s operational necessity.

When agents process your code, they process your intellectual property, business logic, customer data schemas, and competitive advantages. Where that processing happens, who has access, and what audit trails exist determine whether you maintain sovereignty over your most valuable assets.

Cloud-based coding assistants send your code to external servers. On-premise or VPC-deployed agents keep everything inside your infrastructure. The difference matters for regulated industries, competitive positioning, and actual, not theoretical, data control.

AXIOM Studio’s approach to governance starts here: visibility into every AI interaction, sovereignty over where processing happens, and monitoring that works across all your AI tools, not just coding agents. Because governance that only covers one tool creates gaps. Gaps create risk.

The Path Forward

Agentic coding tools are production infrastructure now, not experimental developer perks.

Treat them that way. Deploy governance before problems compound. Build observability into your architecture from day one. Maintain sovereignty over your code and data.

The enterprises that figure this out early gain sustainable competitive advantages: faster development cycles without sacrificing control. The ones that don’t will spend 2027 retrofitting governance onto systems already embedded throughout their engineering organizations.

We’ve seen this pattern before. The winners moved decisively while the technology was still emerging, not after the problems became undeniable.

The shift from suggestion to autonomous execution is here. Your governance framework should be too.

Frequently Asked Questions

What is the difference between a coding assistant and an agentic coding tool?

A coding assistant like traditional GitHub Copilot suggests code completions for a developer to accept or reject. An agentic coding tool like Cursor or Copilot Workspace autonomously plans multi-file changes, executes terminal commands, runs tests, and iterates on errors without human intervention for each step. This distinction changes governance requirements because agents operate with the same technical capabilities as a developer.

What risks do agentic coding tools introduce that traditional assistants do not?

Agentic tools can commit code without human review, access proprietary business logic and data schemas, execute arbitrary shell commands, and produce outputs that violate licensing or compliance policies. Because agents work autonomously across files and systems, audit trails can vanish and organizations lose visibility into what code originated from AI versus human developers.

How should enterprises handle data sovereignty with AI coding agents?

Enterprises should deploy agents on-premise or within their own VPC so that source code, intellectual property, and customer data schemas never leave the organization’s infrastructure. Cloud-based coding assistants send code to external servers, which creates unacceptable risk for regulated industries. Governance platforms like AXIOM provide visibility into where processing happens and enforce sovereignty policies across all AI tools.

What are the four pillars of agentic coding governance?

The four pillars are infrastructure control (agents run inside enterprise networks with access to real codebases), comprehensive observability (logging every agent action with auditability and reversibility), structured objectives (precise task definitions with success criteria and guardrails), and integration validation (output verified through Git, CI/CD, and automated test suites before it reaches production).

How do I prevent shadow AI from agentic coding tools spreading across my organization?

Deploy managed configuration files to system directories that control which models, servers, and integrations developers can access. Use a governance platform that provides role-based access controls, context-aware risk scoring, and real-time monitoring. This lets platform and security teams maintain auditable controls without disrupting developer velocity. Get started with AXIOM for free to see this in practice.

Ready to govern agentic coding tools at enterprise scale? Get started with AXIOM for free and get full visibility, control, and compliance for your AI-driven development workflows.

Written by

AXIOM Team