What is OpenClaw? An Executive Overview & Governance Guide

OpenClaw is the fastest-growing open-source AI agent runtime, surpassing 250K GitHub stars. This executive guide covers its architecture, shadow AI risks, and how enterprises can govern local autonomous agents.

![[HERO] What is OpenClaw? An Executive Overview & Governance Guide](https://cdn.marblism.com/c1tKhAIC-bf.webp)

The landscape of enterprise productivity is shifting from centralized cloud assistants to local, autonomous agents. At the center of this movement is OpenClaw. The project, formerly known as Clawdbot and Moltbot, began as a solo effort by Austrian developer Peter Steinberger and has exploded into a global phenomenon, surpassing 250,000 GitHub stars in roughly 60 days to become the most-starred software project on GitHub, overtaking React.

OpenClaw represents a fundamental change in how software interacts with human workflows. It is not just another chatbot interface; it is a self-hosted agent runtime. It lives on the user’s hardware, maintains its own memory, and executes tasks across dozens of communication channels. For the individual contributor, it is the ultimate force multiplier. For the enterprise executive, it is the emergence of a new frontier: the localized Shadow AI stack.

The Anatomy of an Autonomous Local Agent

OpenClaw functions as a local gateway. It interprets intent through Large Language Models (LLMs) like Claude, DeepSeek, or OpenAI’s GPT models, but the execution happens entirely within the user’s local infrastructure. This “local-first” architecture is designed for speed and data sovereignty.

Three core pillars define its capability:

- Persistent Memory: Unlike standard LLM sessions that reset with every new window, OpenClaw maintains a long-running context. It stores conversation history and user preferences as local Markdown documents through its Memory.md framework. This allows the agent to learn a user’s specific style, recurring deadlines, and project nuances over months of interaction.

- Multi-Channel Integration: The agent is not confined to a browser tab. It operates across 50+ integrations, including Slack, Discord, WhatsApp, Telegram, and Signal. It monitors these channels, identifies tasks, and provides proactive assistance without requiring the user to switch contexts.

- Skill-Based Execution: Through its “AgentSkills” framework — which now includes over 100 preconfigured skills and thousands of community-contributed extensions — OpenClaw can execute shell commands, manage local file systems, and control web browsers. It can pull data from a spreadsheet, summarize a Slack thread, and draft a response in an email client autonomously.
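To make the persistent-memory pillar concrete, here is a minimal sketch of Markdown-backed memory. It is an illustration only: the file layout (one `## Topic` heading followed by dated bullet notes) and the `remember` and `recall` helpers are assumptions made for this example, not OpenClaw's actual memory format or API.

```python
from datetime import date
from pathlib import Path


def remember(memory_file: Path, topic: str, note: str, day: date) -> None:
    """Append a dated note under a '## topic' heading (hypothetical layout)."""
    lines = memory_file.read_text(encoding="utf-8").splitlines() if memory_file.exists() else []
    heading = f"## {topic}"
    entry = f"- {day.isoformat()}: {note}"
    if heading in lines:
        # Walk past the notes already stored under this heading, then insert.
        idx = lines.index(heading) + 1
        while idx < len(lines) and lines[idx].startswith("- "):
            idx += 1
        lines.insert(idx, entry)
    else:
        # New topic: separate it from earlier content with a blank line.
        if lines:
            lines.append("")
        lines += [heading, entry]
    memory_file.write_text("\n".join(lines) + "\n", encoding="utf-8")


def recall(memory_file: Path, topic: str) -> list[str]:
    """Return the notes stored under a topic, oldest first."""
    notes: list[str] = []
    in_topic = False
    for line in memory_file.read_text(encoding="utf-8").splitlines():
        if line.startswith("## "):
            in_topic = line == f"## {topic}"
        elif in_topic and line.startswith("- "):
            notes.append(line[2:])
    return notes
```

Because the store is a plain Markdown file on the user's disk, it is easy to inspect and edit by hand. It also sits outside any central audit trail, which is part of the governance question discussed later in this guide.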

The Hardware Catalyst: AMD’s RyzenClaw and RadeonClaw

The barrier to running sophisticated agents locally was historically the lack of accessible compute. That barrier is rapidly falling with AMD’s RyzenClaw and RadeonClaw hardware reference configurations. RyzenClaw is built around the Ryzen AI Max+ processor with 128GB of unified memory, optimized for large context windows and multi-agent workflows. RadeonClaw leverages the Radeon AI PRO R9700 discrete GPU with 32GB of VRAM, delivering up to 120 tokens per second for high-throughput inference. Both configurations run models locally via LM Studio and the llama.cpp backend.

While these hardware paths represent a significant step toward local AI agent deployment, they remain targeted at developers and early adopters — a RyzenClaw system starts at approximately $2,700, and the RadeonClaw GPU retails for about $1,299. As these costs come down and consumer hardware catches up, the economic incentive will shift further; enterprises will no longer need to pay high per-token costs for simple routing tasks that can be handled locally. However, this growing accessibility accelerates the proliferation of unmanaged agents within the corporate firewall.
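The economic argument above can be framed as a simple break-even calculation. The sketch below is back-of-the-envelope arithmetic under stated assumptions: the hosted-API price is a hypothetical input rather than a quoted rate, and power, maintenance, and differences in model quality are ignored.

```python
def break_even_tokens(hardware_cost_usd: float, api_price_per_million_usd: float) -> float:
    """Tokens that must be processed locally before the hardware pays for itself.

    Deliberately simplified: ignores electricity, upkeep, and model quality.
    """
    if api_price_per_million_usd <= 0:
        raise ValueError("API price must be positive")
    return hardware_cost_usd / api_price_per_million_usd * 1_000_000


# Hardware price taken from the text; the $3 per million tokens is a
# hypothetical hosted-API price chosen only for illustration.
tokens = break_even_tokens(hardware_cost_usd=2_700, api_price_per_million_usd=3.0)
```

The lower the hosted price for a given workload, the more tokens a local system must process before it pays off, which is why the case is strongest for high-volume, simple routing tasks.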

The Shadow AI Conflict

We have seen this cycle before. When employees find tools that make them 10x more productive, they bypass IT bottlenecks to use them. OpenClaw is the new “Bring Your Own Device” (BYOD) challenge, but with the added complexity of autonomous agency.

When an employee runs OpenClaw locally, they are essentially running an autonomous bot that has access to corporate Slack channels, internal files, and potentially sensitive credentials. This creates a massive governance gap. Security researchers have already flagged serious concerns: Cisco found that third-party OpenClaw skills could perform data exfiltration and prompt injection without user awareness, and Gartner analysts described the agent’s design as “insecure by default.”

Without oversight, these agents can:

- Exfiltrate PII (Personally Identifiable Information) into local logs or external LLM providers.

- Execute unintended system commands in an attempt to solve a complex prompt.

- Violate regulations such as the EU AI Act, which imposes transparency and risk-management obligations that scale with a system’s risk classification.

Enterprise leadership cannot simply ban these tools — the productivity loss would be too great. Instead, the strategy must shift toward Controlled Execution.

From Experimentation to Controlled Execution

While OpenClaw is excellent for individual experimentation, it lacks the scaffolding required for an enterprise-grade environment. This is where AXIOM Studio bridges the gap. We transform the “wild west” of local agents into a governed, observable ecosystem.

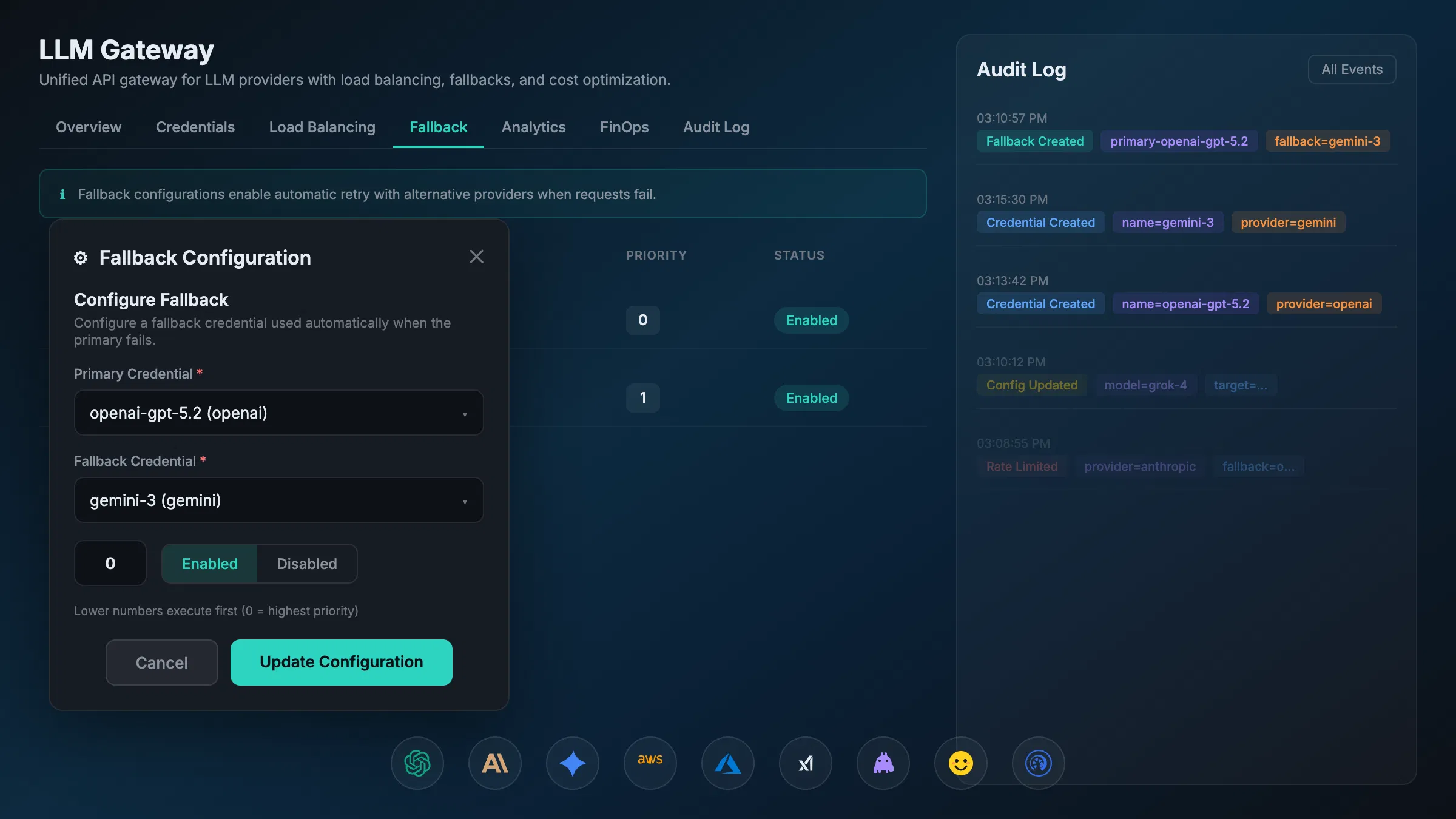

Our approach centers on the AI Gateway. By routing agent interactions through a centralized control plane, we provide the visibility that local-only installations lack.

1. Visibility and Observability

You cannot govern what you cannot see. AXIOM Studio provides a unified dashboard that tracks which agents are active, which models they are calling, and which “skills” they are using. This turns “Shadow AI” into an audited corporate asset. Our AI Observability tools allow IT teams to monitor the health and behavior of distributed agents in real time.
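As a rough illustration of what such a dashboard aggregates, the sketch below rolls gateway events up into the three views named above: active agents, model usage, and skill usage. The `AgentEvent` record and `summarize` function are hypothetical names for this example and do not describe AXIOM Studio's actual data model.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    """One observed agent call (hypothetical schema for illustration)."""
    agent_id: str
    model: str
    skill: str


def summarize(events: list[AgentEvent]) -> dict:
    """Aggregate raw events into dashboard-style counts."""
    return {
        "active_agents": sorted({e.agent_id for e in events}),
        "calls_per_model": dict(Counter(e.model for e in events)),
        "calls_per_skill": dict(Counter(e.skill for e in events)),
    }
```

The important point is less the aggregation than the vantage point: these counts only exist if agent traffic passes through a place where events can be recorded.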

2. Security and PII Redaction

OpenClaw agents often handle sensitive data. AXIOM’s gateway layer automatically scans outbound prompts for PII and sensitive corporate intellectual property. We redact or mask this data before it reaches external LLM providers, so the agent can keep running locally while sensitive data stays out of outbound requests.
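The redaction step can be pictured with a small sketch. This is a simplified, pattern-based illustration, not a description of how AXIOM's gateway is implemented: the two patterns (email addresses and US-style Social Security numbers) and the `redact` function are assumptions chosen for the example, and production-grade detection typically needs far more than regular expressions.

```python
import re

# Illustrative patterns only; real PII detection covers many more categories.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Mask matches in an outbound prompt and report how many were found."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        prompt, found = pattern.subn(f"[{label}]", prompt)
        if found:
            counts[label] = found
    return prompt, counts
```

Returning the counts alongside the masked text lets the gateway log that a redaction happened without storing the sensitive values themselves.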

3. Policy Enforcement and the EU AI Act

Compliance is non-negotiable. The EU AI Act places strict requirements on systems that interact with humans or make autonomous decisions. AXIOM Studio enforces policy at the runtime level. If an agent attempts to perform a high-risk action — such as modifying a production database — our Controlled Execution framework can require human-in-the-loop approval.
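A human-in-the-loop gate of this kind can be sketched in a few lines. The skill names, the "prod" target prefix, and the `evaluate` and `run_with_policy` functions below are hypothetical and exist only to show the control flow; they are not AXIOM Studio's policy language.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class Action:
    skill: str   # e.g. "db.write" (hypothetical skill name)
    target: str  # e.g. "prod-orders" (hypothetical resource name)


# Assumed policy: these skills are high risk when aimed at production targets.
HIGH_RISK_SKILLS = {"db.write", "shell.exec"}


def evaluate(action: Action) -> Decision:
    """Classify an action before it is allowed to run."""
    if action.skill in HIGH_RISK_SKILLS and action.target.startswith("prod"):
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


def run_with_policy(
    action: Action,
    run: Callable[[Action], str],
    approve: Callable[[Action], bool],
) -> str:
    """Execute the action only if policy allows it or a human approves it."""
    if evaluate(action) is Decision.NEEDS_APPROVAL and not approve(action):
        return "blocked: approval denied"
    return run(action)
```

In practice the `approve` callback would be an asynchronous request to a reviewer rather than an inline function, but the shape is the same: the check sits between the agent's intent and the side effect.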

Integrating with the Modern Agent Stack

The future of enterprise AI is not a single model, but a mesh of agents talking to each other. OpenClaw is increasingly adopting the Model Context Protocol (MCP) and the Agent-to-Agent (A2A) Protocol.

These protocols allow a local OpenClaw agent to “hand off” a task to a specialized corporate agent hosted within AXIOM Studio. For example:

- An employee’s local agent identifies a need for a travel booking.

- It hands the request to the AXIOM Travel Agent over MCP or A2A.

- The AXIOM agent executes the booking within corporate compliance and budget rules.

- The result is passed back to the local agent to notify the user.
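The four steps above can be sketched as a simple hand-off. To keep the example self-contained, an ordinary Python method call stands in for the protocol transport; no real MCP or A2A library is used, and the class names and the budget rule are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TravelRequest:
    traveler: str
    destination: str
    cost_usd: float


@dataclass(frozen=True)
class HandoffResult:
    status: str
    detail: str


class CorporateTravelAgent:
    """Stand-in for a centrally hosted agent that applies corporate rules."""

    def __init__(self, budget_limit_usd: float) -> None:
        self.budget_limit_usd = budget_limit_usd

    def handle(self, request: TravelRequest) -> HandoffResult:
        if request.cost_usd > self.budget_limit_usd:
            return HandoffResult("rejected", f"exceeds budget limit of ${self.budget_limit_usd:.2f}")
        return HandoffResult("booked", f"{request.destination} for {request.traveler}")


class LocalAgent:
    """Stand-in for the employee's local agent."""

    def __init__(self, travel_agent: CorporateTravelAgent) -> None:
        self.travel_agent = travel_agent

    def request_trip(self, request: TravelRequest) -> str:
        # In a real deployment this call would cross a protocol boundary.
        result = self.travel_agent.handle(request)
        return f"Trip {result.status}: {result.detail}"
```

The local agent never sees or enforces the budget rule itself. It only relays the outcome, which is the division of labor the hybrid model relies on.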

This hybrid model — Local Agency + Centralized Governance — is the only sustainable path forward for the enterprise.

The Role of AXIOM Studio

We recognize the power of the open-source community. OpenClaw is a remarkable feat of engineering by Peter Steinberger — founder of PSPDFKit and now at OpenAI — and the growing open-source foundation that maintains the project. Our goal at AXIOM Studio is to provide the “Command Center” for these distributed agents.

By utilizing our LLM Gateway, enterprises can provide employees with the API keys and compute they need for OpenClaw while maintaining absolute control over costs (FinOps) and security. We offer the audit trails, the kill switches, and the policy layers that turn a personal productivity tool into a secure enterprise standard.

Summary and Executive Takeaways

OpenClaw is no longer a fringe project; it is the blueprint for the next generation of work. As local hardware becomes more capable through AMD platforms and Apple Silicon, the shift toward local autonomous agents will only accelerate.

- OpenClaw is an orchestrator: It uses local compute and persistent memory to execute tasks across enterprise channels.

- The Governance Gap is real: Local agents create “Shadow AI” risks that standard firewalls cannot address.

- Local hardware is the driver: Initiatives like AMD’s RyzenClaw and RadeonClaw configurations make local agents performant, though cost-effective consumer options are still emerging.

- AXIOM Studio provides the guardrails: We offer the visibility, security, and AI Compliance required to scale these agents safely.

The question for leadership is no longer whether to allow autonomous agents, but how to govern them. The chaos of unmanaged local agents can be transformed into the precision of controlled execution. Get started with AXIOM for free to see how our platform can secure your AI future, or explore our pricing for details.

For those ready to move beyond experimentation, the AXIOM Studio Command Center is the next logical step. Let’s build a sovereign, secure, and highly productive agentic workforce together.

Frequently Asked Questions

What is OpenClaw and how did it become the most-starred GitHub project?

OpenClaw is an open-source, self-hosted AI agent runtime created by Peter Steinberger. It surpassed 250,000 GitHub stars in roughly 60 days, overtaking React. Unlike cloud-based AI assistants, OpenClaw runs locally on user hardware, maintains persistent memory via its Memory.md framework, and executes tasks across 50+ communication channels including Slack, Discord, and email.

What are the enterprise security risks of OpenClaw?

OpenClaw agents running locally can access corporate Slack channels, internal files, and credentials. Cisco found that third-party skills could perform data exfiltration and prompt injection without user awareness. Gartner analysts described the agent as “insecure by default.” Without centralized governance, these agents create blind spots in security, compliance, and cost management.

What hardware do I need to run OpenClaw locally?

AMD offers two reference configurations: RyzenClaw (Ryzen AI Max+ with 128GB unified memory, starting at ~$2,700) for large context windows and multi-agent workflows, and RadeonClaw (Radeon AI PRO R9700 with 32GB VRAM, ~$1,299) delivering up to 120 tokens per second. Both run models locally via LM Studio and the llama.cpp backend. Apple Silicon Macs also support local inference.

How does OpenClaw use Model Context Protocol (MCP) and Agent-to-Agent (A2A)?

OpenClaw is adopting MCP for agent-to-tool communication and A2A for agent delegation and coordination. This allows a local OpenClaw agent to hand off tasks to specialized corporate agents, for example by routing a travel booking through a compliant corporate agent. An MCP Gateway provides centralized governance for these cross-agent interactions.

How can enterprises govern OpenClaw without banning it?

Rather than banning productive tools, enterprises should route agent interactions through a centralized AI Gateway that provides visibility into active agents, model usage, and skill execution. AXIOM Studio offers PII redaction on outbound prompts, policy enforcement for high-risk actions, and real-time audit logs. Get started with AXIOM for free to deploy controlled execution for your agent ecosystem.

Written by

AXIOM Team