Weekly AI Command: The Tech Launchpad (March 15-20, 2026)

This was the week the AI industry stopped debating model intelligence and started fighting over who controls the desktop. Between a transformative open-source architecture release, Meta’s aggressive move into local AI agents, and OpenAI’s internal reckoning with product sprawl, March 15-20 made one thing clear: the next frontier isn’t the cloud. It’s your machine.

For enterprise leaders, the message is urgent. AI agents are no longer theoretical. They are shipping, installing on employee laptops, and executing terminal commands. The organizations that survive this transition will be the ones that built AI governance infrastructure before the agents arrived, not after.

Mamba-3: The Transformer Tax Is Under Siege

The most technically significant release of the week came on March 17 from researchers at Carnegie Mellon, Princeton, Together AI, and Cartesia AI.[1][2] Mamba-3 is a new state space model (SSM) that takes direct aim at the Transformer architecture’s biggest weakness: inference cost at scale.

For years, the Transformer’s quadratic attention cost, where compute grows with the square of the input length, has been the industry’s most expensive open secret.[3] Mamba-3 attacks this with three core innovations: an improved exponential-trapezoidal discretization formula for more expressive recurrence, complex-valued state tracking that enables capabilities previous linear models couldn’t achieve, and a multi-input multi-output (MIMO) variant that boosts accuracy without slowing down generation.[4][5]
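To make the scaling difference concrete, here is a minimal sketch. It is not Mamba-3 itself: the function names and the toy scalar recurrence are illustrative assumptions, chosen only to contrast all-pairs attention with a fixed-size recurrent state.

```python
def attention_pairwise_ops(seq_len: int) -> int:
    """Token-pair interactions in full self-attention: every token attends to every token."""
    return seq_len * seq_len


def recurrent_state_updates(seq_len: int) -> int:
    """State updates in a linear recurrence: one fixed-size update per token."""
    return seq_len


def linear_recurrence(xs, decay=0.9, gain=0.1):
    """Toy scalar recurrence h_t = decay * h_{t-1} + gain * x_t (illustrative only).

    The state is a single number no matter how many tokens have been seen.
    """
    h = 0.0
    for x in xs:
        h = decay * h + gain * x
    return h


# Doubling the sequence length quadruples the attention count
# but only doubles the recurrence count.
for n in (1_000, 2_000, 4_000):
    print(n, attention_pairwise_ops(n), recurrent_state_updates(n))
```

The operation counts are deliberately crude. They ignore hidden size, heads, and hardware effects, so they show the growth rate rather than real latency.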

The results are concrete. At the 1.5B parameter scale, Mamba-3 SISO beats Mamba-2, Gated DeltaNet, and even Meta’s Llama-3.2-1B Transformer on prefill-plus-decode latency across all sequence lengths.[1] At 16K-token sequences on an H100 GPU, it completes the job in roughly 141 seconds versus 977 seconds for the Transformer baseline, a speedup approaching 7x.[6] It also achieves comparable perplexity to Mamba-2 while using only half the state size, effectively doubling inference throughput for the same hardware.[2][6]
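The speedup figure follows directly from the two reported timings. A quick check, where the helper name is just an illustrative choice:

```python
def speedup(baseline_seconds: float, candidate_seconds: float) -> float:
    """How many times faster the candidate is than the baseline."""
    return baseline_seconds / candidate_seconds


# Timings reported for 16K-token sequences on an H100 GPU.
transformer_seconds = 977
mamba3_seconds = 141

print(f"{speedup(transformer_seconds, mamba3_seconds):.2f}x")  # prints "6.93x"
```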

The paper was accepted at ICLR 2026 and the code is open-sourced under Apache 2.0.[6][7] NVIDIA and IBM have already shipped hybrid Mamba-Transformer models for enterprise deployments.[6] For companies struggling with AI FinOps, this architecture represents the first serious production-ready alternative to paying the full Transformer tax on every inference call. The question is no longer whether SSMs can compete. It’s how quickly your infrastructure team can evaluate the hybrid approach.

Meta’s Manus Comes to the Desktop: The Agent Wars Go Local

On March 16, Manus, the AI agent startup Meta acquired for roughly $2 billion in December 2025,[8] launched its desktop application for macOS and Windows.[9] The feature at the center of the release is called My Computer, and it does exactly what the name suggests: it gives an AI agent direct access to your local files, applications, and terminal.[10]

Until this week, Manus operated exclusively in the cloud through a web interface.[11] The desktop app changes everything. Through command-line execution, Manus can read, analyze, and edit local files, launch and control applications, build software projects, and even run inference on a local GPU.[10] In one demonstration, a colleague challenged the agent to build a real-time meeting translation app in Swift entirely through terminal commands. Twenty minutes later, it had a working Mac app. No Xcode opened. No code written manually.[10]

The launch is a direct response to the OpenClaw phenomenon. OpenClaw, an open-source AI agent built by Austrian developer Peter Steinberger, has taken the industry by storm over the past month.[8] NVIDIA CEO Jensen Huang called it the “next ChatGPT” on CNBC’s “Mad Money.”[8] The key difference: OpenClaw is free and open-source under an MIT license, while Manus is a paid subscription starting at $20/month running on Meta’s proprietary model stack.[12]

For enterprises, the real story isn’t which agent wins. It’s that AI agents with terminal access are now shipping to consumer devices at scale. If an agent can execute shell commands on an employee’s laptop, it can access credentials, internal APIs, and sensitive data. This makes AI security and detecting shadow AI the most urgent infrastructure priority of 2026. Manus requires explicit user approval for each command, with “Allow Once” and “Always Allow” options,[10] but the attack surface is fundamentally different from a chatbot in a browser tab.
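A per-command approval flow of this kind might be sketched as follows. This is a hypothetical illustration, not Manus’s actual implementation: the `ApprovalGate` class, the `Decision` enum, and the choice to key standing approvals on the executable name are all assumptions made for the example.

```python
import shlex
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    DENY = "deny"
    ALLOW_ONCE = "allow_once"
    ALWAYS_ALLOW = "always_allow"


@dataclass
class ApprovalGate:
    """Hypothetical approval gate for agent-issued shell commands."""

    always_allowed: set = field(default_factory=set)

    def is_approved(self, command: str, ask_user) -> bool:
        # Standing approvals are keyed on the executable name (first token).
        tokens = shlex.split(command)
        if not tokens:
            return False
        program = tokens[0]
        if program in self.always_allowed:
            return True  # previously granted "Always Allow"; no prompt shown
        decision = ask_user(command)
        if decision is Decision.ALWAYS_ALLOW:
            self.always_allowed.add(program)
            return True
        return decision is Decision.ALLOW_ONCE


gate = ApprovalGate()
print(gate.is_approved("git status", lambda cmd: Decision.ALWAYS_ALLOW))  # True
print(gate.is_approved("git push --force", lambda cmd: Decision.DENY))    # True
```

The second call is approved without ever reaching the user, because the standing approval covers every `git` invocation. That is the governance concern in miniature: a single “Always Allow” click can authorize far more than the command the user actually saw.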

OpenAI Declares “Code Red”: The Superapp Pivot

The biggest strategic story of the week broke on March 19-20 when The Wall Street Journal reported, and OpenAI confirmed, that the company is merging ChatGPT, its Codex coding platform, and its Atlas web browser into a single desktop “superapp.”[13][14]

The move is driven by a blunt internal assessment. Fidji Simo, OpenAI’s Chief of Applications, told employees in a memo that the company had been “spreading our efforts across too many apps and stacks” and that the fragmentation was “slowing us down.”[14][15] In an all-hands meeting, she warned the team they “cannot miss this moment because we are distracted by side quests.”[16]

The catalyst is Anthropic’s rise. According to reporting from Axios, Anthropic now captures 73% of all spending among companies purchasing AI tools for the first time.[16] Claude overtook ChatGPT as the most downloaded app in the United States in March 2026.[16] Simo described the competitive dynamic as a “wake-up call,” and the company is operating as if it’s a “code red.”[15]

The superapp strategy will unfold in phases. First, OpenAI will expand Codex beyond coding into broader productivity automation. Then ChatGPT and Atlas will fold into the unified environment.[17] The bet is on agentic AI: systems that don’t just answer questions but autonomously handle tasks like writing code, analyzing data, and navigating the web across your entire desktop.[13] OpenAI President Greg Brockman is temporarily co-leading the product overhaul alongside Simo.[14]

Sora, OpenAI’s standalone video generation app, serves as the cautionary tale. It briefly hit number one in the Apple App Store after its September 2025 launch, then usage flatlined. OpenAI now plans to fold it into the main ChatGPT app.[15]

For enterprises already managing OpenAI deployments, this signals a fundamental shift in the product surface area. A consolidated agentic app touching local files, browsers, and code environments requires AI model monitoring that can track not just API calls but autonomous actions across an entire workstation.

The Broader March Landscape: What Set the Stage

While this week’s headlines centered on agents and architecture, the events of early March established the context. Understanding the full picture matters for any enterprise planning its AI stack.

OpenAI’s GPT-5.4 launched on March 5 with native computer-use capabilities, 1M-token context windows, and a 33% reduction in factual errors over GPT-5.2.[18] It’s the first OpenAI model with autonomous desktop interaction in the API, and it scored 83% on OpenAI’s GDPval benchmark for professional knowledge work.[19] It tops Mercor’s APEX-Agents benchmark for law and finance, and delivers record scores on OSWorld-Verified (75.0%, surpassing the 72.4% human baseline) and WebArena-Verified (67.3%).[20] The superapp announcement is the product strategy catch-up to GPT-5.4’s technical capabilities.

Google’s Gemini 3.1 Flash-Lite arrived on March 3 at $0.25 per million input tokens, delivering 2.5x faster time-to-first-token and 45% faster output speed compared to Gemini 2.5 Flash.[21] It’s not an edge model (it runs via Vertex AI and Google AI Studio[22]), but its pricing makes it the most aggressive cost-efficiency play in the market for high-volume inference workloads. It scored 86.9% on GPQA Diamond and achieved an Elo of 1432 on the Arena.ai leaderboard.[21]
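For a sense of what that input price means at volume, here is a back-of-the-envelope sketch. The monthly token count is a made-up workload, and output-token pricing is left out because the figure above covers input tokens only.

```python
INPUT_PRICE_PER_MILLION_USD = 0.25  # input-token price quoted above


def input_cost_usd(input_tokens: int,
                   price_per_million: float = INPUT_PRICE_PER_MILLION_USD) -> float:
    """Estimated cost of the input side of a workload, in US dollars."""
    return input_tokens / 1_000_000 * price_per_million


# Hypothetical workload: 2 billion input tokens per month.
print(input_cost_usd(2_000_000_000))  # prints 500.0
```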

Apple’s MacBook Neo, announced March 4 and released March 11, brought the A18 Pro chip (with a 16-core Neural Engine) to a $599 laptop.[23] It’s the first Mac built on an iPhone A-series chip, targeting the entry-level market with on-device Apple Intelligence features.[24] It’s not the AI workstation of the future, but it signals that on-device inference is now a mass-market expectation, not a premium feature. Apple also launched the MacBook Pro with M5 Pro and M5 Max, featuring a new Fusion Architecture with Neural Accelerators in every GPU core, delivering up to 4x AI performance over the previous generation.[25]

The Meta-AMD partnership, finalized on February 24-25, committed 6 gigawatts of AMD Instinct MI450 GPU capacity across a multi-year deployment valued at over $100 billion.[26][27] AMD issued Meta performance-based warrants for up to 160 million shares, creating a novel “chips-for-equity” structure that turns customer and supplier into co-investors.[28] The first 1GW deployment ships in H2 2026.[29] This level of hardware commitment requires LLM governance that can track model versions and policies across heterogeneous hardware at unprecedented scale.

The Shift to Agentic Infrastructure

The theme connecting every major development — from Manus Desktop to OpenAI’s superapp to GPT-5.4’s computer-use capabilities — is the same: the industry is moving from models that talk to agents that act.

Two infrastructure developments underscore this shift. ProRL Agent, released on March 19 by researchers associated with NVIDIA and integrated into NeMo Gym, provides a “rollout-as-a-service” infrastructure for training multi-turn AI agents through reinforcement learning.[30] It decouples rollout orchestration from the training loop and supports standardized sandbox environments for RL training on software engineering, math, STEM, and coding tasks.[30] LangGraph continues to mature as the standard for state management in cyclical, multi-step agent workflows with human-in-the-loop checkpoints.
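The idea of decoupling rollouts from the training loop can be illustrated with a generic producer-consumer sketch. This is not the ProRL Agent or NeMo Gym API: every name below is hypothetical, and the "episode" is a placeholder standing in for an agent running inside a sandbox.

```python
import queue
import threading


def rollout_worker(tasks: queue.Queue, results: queue.Queue) -> None:
    """Stand-in rollout service: pull a task, run an episode, return a trajectory."""
    while True:
        task = tasks.get()
        if task is None:  # sentinel value: shut this worker down
            break
        # Placeholder episode; a real service would drive an agent in a sandbox.
        results.put({
            "task": task,
            "steps": [f"{task}:step{i}" for i in range(3)],
            "reward": float(len(task)),
        })


def collect_rollouts(task_names, num_workers=2):
    """Trainer-side call: submit tasks, wait, and receive finished trajectories."""
    tasks, results = queue.Queue(), queue.Queue()
    workers = [
        threading.Thread(target=rollout_worker, args=(tasks, results))
        for _ in range(num_workers)
    ]
    for worker in workers:
        worker.start()
    for name in task_names:
        tasks.put(name)
    for _ in workers:
        tasks.put(None)  # one sentinel per worker
    for worker in workers:
        worker.join()
    return [results.get() for _ in task_names]


batch = collect_rollouts(["fix-bug", "add-test", "refactor"])
print(sorted(t["task"] for t in batch))
```

The training side sees only finished trajectories, so the number of workers, or the sandbox behind them, could change without touching the training loop.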

Also notable: Anthropic launched the Claude Partner Network on March 15, committing $100 million in 2026 to help consulting firms and system integrators deploy Claude in enterprise settings.[31] And xAI raised $20 billion in its Series E, supporting continued expansion of the Colossus supercomputer infrastructure, now exceeding one million H100 GPU equivalents.[32]

These aren’t tools for building chatbots. They’re tools for building systems that plan, execute, and verify autonomous work. The distinction matters: you don’t manage agents with API keys and rate limits. You manage them with roles, permissions, audit trails, and oversight — the same way you manage employees. This is exactly the gap that agentic AI development frameworks are designed to fill.

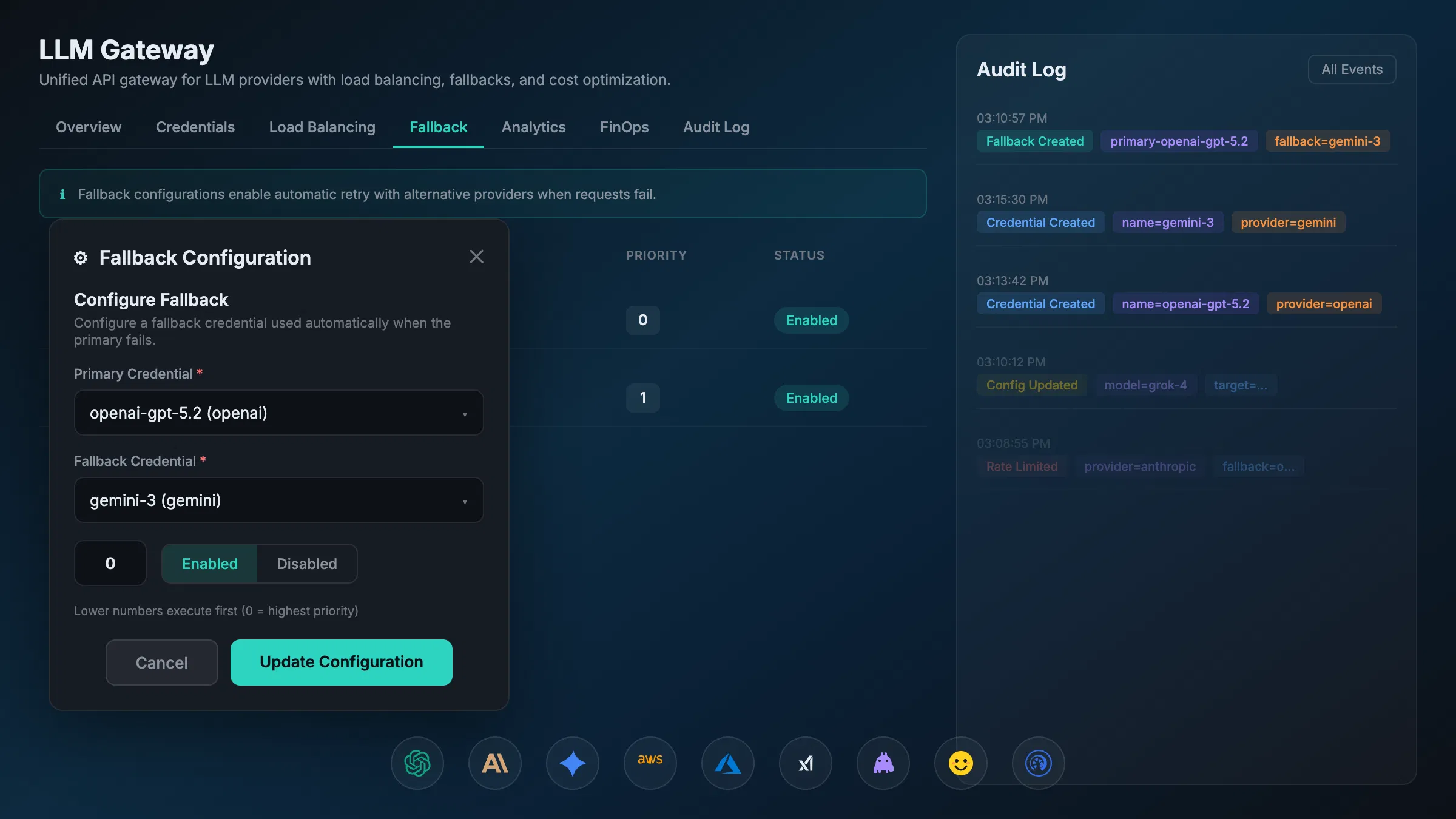

And if agents are the employees, then MCP (Model Context Protocol) and A2A (Agent-to-Agent) are the office protocols. MCP is becoming the standard integration layer for agent-to-tool communication, while A2A defines how agents delegate and coordinate across complex workflows. At AXIOM Studio, our MCP Gateway and A2A Gateway exist specifically to give enterprises a single control plane for these interactions. Without protocol standardization and centralized governance, your agent ecosystem will quickly become Shadow AI at scale: unmonitored, unaudited, and ungovernable.
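As a rough illustration of what a control plane checks, the sketch below authorizes an MCP-style tool call against a role allowlist and records the decision in an audit trail. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. Everything else here is an assumption: the roles, the tool names, the allowlist, and the in-memory log are hypothetical and do not describe any particular gateway product.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy: which tools each agent role may invoke.
ROLE_TOOL_ALLOWLIST = {
    "analyst": {"read_file", "search_docs"},
    "developer": {"read_file", "search_docs", "run_tests"},
}

audit_log = []  # in-memory stand-in for a durable audit store


def authorize_tool_call(role: str, raw_request: str) -> bool:
    """Check a JSON-RPC 'tools/call' request against the role's allowlist and log it.

    This sketch only handles 'tools/call'; any other method is denied.
    """
    request = json.loads(raw_request)
    tool = request.get("params", {}).get("name")
    allowed = (
        request.get("method") == "tools/call"
        and tool in ROLE_TOOL_ALLOWLIST.get(role, set())
    )
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "tool": tool,
        "allowed": allowed,
    })
    return allowed


request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "run_tests", "arguments": {"path": "tests/"}},
})
print(authorize_tool_call("analyst", request))    # False
print(authorize_tool_call("developer", request))  # True
```

Denied requests are logged as well as approved ones, since an audit trail that records only successes cannot show what an agent attempted.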

Summary and Key Takeaways

The week of March 15-20, 2026, confirmed that the industry has crossed a line. AI agents are no longer demos. They are products, shipping to desktops, executing shell commands, and forcing the largest AI company on earth into a strategic pivot.

- The Transformer Monopoly Is Breaking: Mamba-3’s open-source release under Apache 2.0, with inference speedups approaching 7x[6] and acceptance at ICLR 2026,[3] makes state space models a legitimate production architecture. Hybrid SSM-Transformer deployments are already in the field via NVIDIA and IBM.[6]

- Agents Have Left the Browser: Manus Desktop[8] and OpenClaw prove that local AI agents with file system and terminal access are now a shipping product category. The security implications are enormous.

- OpenAI Is Restructuring Around Agents: The superapp consolidation,[14] driven by Anthropic capturing 73% of first-time enterprise AI spend,[16] signals that the next competitive battleground is autonomous desktop productivity, not chat.

- Governance Is the Moat: When agents can execute code, browse the web, manage files, and talk to each other, a centralized AI governance platform is the only way to maintain visibility and control. This is no longer a nice-to-have. It’s infrastructure.

The tech launchpad has been built. The question for your organization is no longer if you will deploy AI agents, but whether you have the governance architecture to manage them before they become unmanageable.

If you’re ready to move from experimentation to governed production, get started with AXIOM for free or start with the architecture that supports it. Let’s get to work.

Frequently Asked Questions

What is Mamba-3 and why does it matter for enterprise AI costs? Mamba-3 is a state space model that achieves up to 7x faster inference than Transformer architectures at comparable quality. Accepted at ICLR 2026 and open-sourced under Apache 2.0, it offers enterprises a production-ready alternative to paying full Transformer compute costs on every inference call, with hybrid SSM-Transformer deployments already shipping from NVIDIA and IBM.

What are the security risks of Meta’s Manus desktop AI agent? Manus Desktop gives AI agents direct access to local files, applications, and terminal commands on employee machines. This means an agent can potentially access credentials, internal APIs, and sensitive data. While it requires explicit user approval per command, the attack surface is fundamentally different from browser-based chatbots and creates significant shadow AI governance challenges.

Why is OpenAI merging ChatGPT, Codex, and Atlas into a superapp? OpenAI’s Chief of Applications Fidji Simo described internal product fragmentation as “slowing us down,” with Anthropic now capturing 73% of first-time enterprise AI spend. The superapp consolidation aims to create a unified agentic desktop environment — but for enterprises, it means a single app touching local files, browsers, and code environments, requiring comprehensive AI observability beyond API-level monitoring.

How does GPT-5.4 computer use change enterprise security requirements? GPT-5.4 introduces native desktop interaction capabilities — viewing screens, understanding spatial elements, and executing clicks and keystrokes autonomously. This shifts AI from API-based interactions to UI-level automation, requiring enterprises to govern not just what data models access via API, but what they can see and do on employee workstations.

How can enterprises govern the proliferation of local AI agents? As agents like Manus, OpenClaw, and OpenAI’s superapp ship to consumer devices, enterprises need a centralized AI governance layer that provides visibility into agent activity, enforces security policies, and maintains audit trails across all providers. Get started with AXIOM for free to deploy unified governance across your entire agent ecosystem.

Footnotes

1. Together AI Blog, “Mamba-3”, published March 17, 2026.

2. Princeton Today, “Open source Mamba 3 arrives to surpass Transformer architecture”, published March 17, 2026.

3. OpenReview, “Mamba-3: Improved Sequence Modeling using State Space Principles”, ICLR 2026.

4. MarkTechPost, “Meet Mamba-3: A New State Space Model Frontier”, published March 18, 2026.

5. arXiv, Mamba-3 Paper (2603.15569), published March 2026.

6. WinBuzzer, “New Mamba-3 AI Model Beats Transformers by 4%, Runs 7x Faster”, published March 18, 2026.

7. GitHub, state-spaces/mamba, Apache 2.0 License.

8. CNBC, “Meta’s Manus launches desktop app to bring its AI agent onto personal devices”, published March 18, 2026.

9. The Next Web, “Meta’s Manus AI agent arrives on your desktop”, published March 18, 2026. Launch date reported as March 16.

10. Manus Blog, “Introducing My Computer: When Manus Meets Your Desktop”, published March 2026.

11. Storyboard18, “Meta-owned Manus launches desktop app with local AI agent access”, published March 18, 2026.

12. Digital Trends, “Meta brings Manus AI agent to your Windows PC and Mac”, published March 18, 2026.

13. MacRumors, “OpenAI ‘Superapp’ to Merge ChatGPT, Codex, and Atlas Browser”, published March 20, 2026.

14. CNBC, “OpenAI to create desktop super app, combining ChatGPT app, browser and Codex app”, published March 19, 2026.

15. The Decoder, “OpenAI plans to merge ChatGPT, Codex, and Atlas browser into a single desktop superapp”, published March 20, 2026.

16. WinBuzzer, “OpenAI to Merge ChatGPT, Codex, Atlas Browser Into Superapp”, published March 20, 2026.

17. TechSpot, “OpenAI is building a desktop superapp that combines ChatGPT, Atlas, and Codex”, published March 20, 2026.

18. OpenAI, “Introducing GPT-5.4”, published March 5, 2026.

19. TechCrunch, “OpenAI launches GPT-5.4 with Pro and Thinking versions”, published March 5, 2026.

20. Cyber Security News, “OpenAI Launches GPT-5.4 With Advanced Reasoning, Coding, and Computer-Use Capabilities”, published March 5, 2026.

21. Google Blog, “Gemini 3.1 Flash-Lite: Our most cost-effective AI model yet”, published March 3, 2026.

22. Google Cloud Documentation, “Gemini 3.1 Flash-Lite”, updated March 16, 2026.

23. Apple Newsroom, “Say hello to MacBook Neo”, published March 4, 2026.

24. Wikipedia, “MacBook Neo”, accessed March 22, 2026.

25. Apple Newsroom, “Apple introduces MacBook Pro with all-new M5 Pro and M5 Max”, published March 4, 2026.

26. ServeTheHome, “AMD and Meta Announce a Massive 6GW Deal”, published February 25, 2026.

27. GIGAZINE, “Meta agrees to purchase up to 6GW of AMD Instinct GPUs for over $100 billion”, published February 25, 2026.

28. Futuriom, “AMD Strikes Meta Deal for Mutual Innovation”, published February 2026.

29. AMD Newsroom, “AMD and Meta Announce Expanded Strategic Partnership to Deploy 6 Gigawatts of AMD GPUs”, published February 24, 2026.

30. arXiv, “ProRL Agent: Rollout-as-a-Service for RL Training of Multi-Turn LLM Agents” (2603.18815), published March 19, 2026.

31. Daily AI Digest, “AI Briefing for March 15, 2026”, published March 15, 2026.

32. NeuralBuddies, “AI News Recap: March 20, 2026”, published March 20, 2026.

Written by

AXIOM Team