Reducing Token Utilization When Building MCP Tools

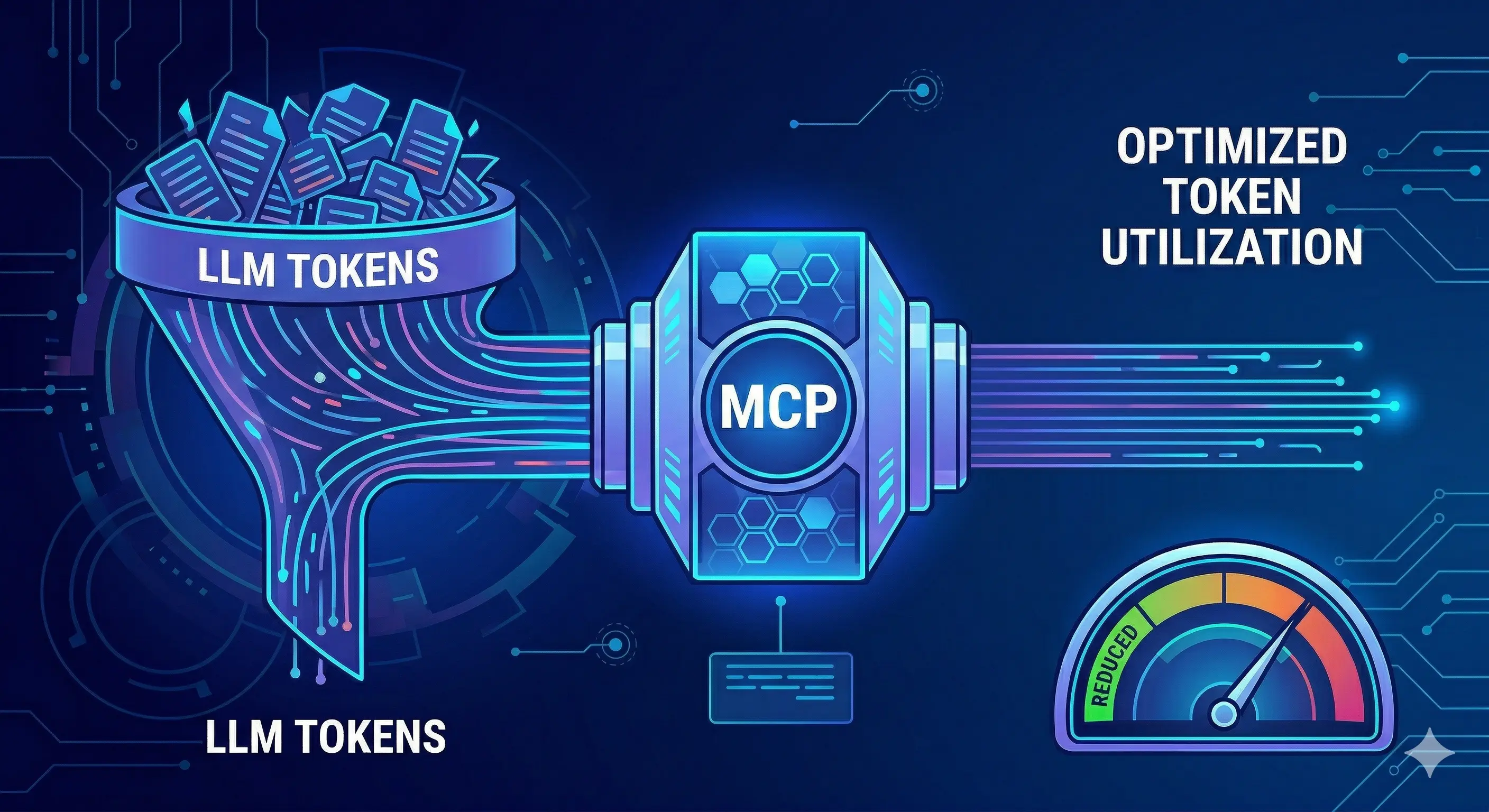

Every MCP tool call costs tokens — descriptions injected upfront, arguments sent, results returned. At scale, this adds up fast. Here are the techniques that matter most for keeping token consumption under control.

Token consumption in MCP-based agents comes from three sources: the tool schemas injected into the system prompt at the start of each request, the arguments sent with each tool call, and the results returned by the server and injected back into context. In a simple three-tool agent, this overhead is negligible. In a production system with 50 tools, 20-call task sequences, and multi-megabyte file reads, token costs dominate both latency and pricing.

This article breaks down where tokens go in an MCP system and the techniques that reduce consumption most effectively.

[IMAGE: Token budget breakdown diagram — tool schemas vs arguments vs results for a typical agent session]

Where Tokens Go in an MCP Agent

Before optimizing, measure. A typical 20-call coding agent session might consume tokens like this:

| Source | Approximate tokens | Notes |
|---|---|---|
| Tool schemas (all 50 tools, every request) | 8,000–15,000 | Injected fresh on every API call |
| Tool arguments (20 calls) | 200–500 | Usually small |
| Tool results (20 calls) | 5,000–50,000 | Highly variable: file reads, test output |
| Conversation history | 3,000–20,000 | Grows with session length |
| Total | 16,000–85,000 | Per session |

Tool schemas and tool results are the dominant costs. Arguments are almost always negligible. Conversation history grows unavoidably, but tool schema injection and result size are fully under the developer’s control.
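One rough way to take that measurement is sketched below. It assumes about 4 characters per token (the same ratio implied by the `MAX_FILE_CHARS` comment later in this article); the `SessionLog` shape and the function names are hypothetical, and a real tokenizer will give different counts.

```typescript
// Rough heuristic: ~4 characters per token for English text and code.
// Assumption: swap in your model's tokenizer for real measurements.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Hypothetical log of one agent session, grouped by token source.
interface SessionLog {
  toolSchemas: string[];   // serialized schemas sent with each request
  toolArguments: string[]; // serialized arguments of each tool call
  toolResults: string[];   // text returned by each tool call
}

type Breakdown = Record<keyof SessionLog, number> & { total: number };

// Sums the estimated tokens for each source and for the whole session.
function tokenBreakdown(log: SessionLog): Breakdown {
  const sum = (items: string[]) =>
    items.reduce((acc, item) => acc + estimateTokens(item), 0);
  const toolSchemas = sum(log.toolSchemas);
  const toolArguments = sum(log.toolArguments);
  const toolResults = sum(log.toolResults);
  return {
    toolSchemas,
    toolArguments,
    toolResults,
    total: toolSchemas + toolArguments + toolResults,
  };
}
```

Logging this breakdown per session shows which of the techniques below is worth applying first.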

Technique 1: Tool Schema Compression

Every tool’s name, description, and inputSchema is injected into the LLM request before the model generates any response. With 50 tools, this can easily consume 10,000+ tokens — on every API call in the session.

Write Concise Descriptions Without Sacrificing Precision

The most impactful optimization is writing shorter descriptions that remain precise. Verbose descriptions are often redundant.

// Before: 87 tokens in description alone
{
  name: "read_file",
  description: "Read the complete contents of a file from the filesystem. This tool allows you to examine source code files, configuration files, documentation, or any other text-based content. You should use this tool when you need to understand what a specific file contains before making modifications. The tool returns the raw file contents as a string. For reading multiple files at once, consider using read_multiple_files instead.",
}

// After: 31 tokens, same guidance with less padding
{
  name: "read_file",
  description: "Read a file's full contents. Use when you know the exact path. For multiple files at once, use read_multiple_files.",
}

Reduction: 56 tokens per tool. Across 50 tools and 10 API calls in a session, that’s 28,000 tokens saved, roughly $0.08–0.28 depending on the model.

What to cut: Restatements of the obvious (“this tool allows you to”), filler phrases (“you should use this when”), verbose parameter walkthroughs that belong in parameter descriptions.

What to keep: Disambiguation from similar tools, constraints (size limits, path restrictions), the primary use case.
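The cut list can be turned into a quick lint pass over tool descriptions. The sketch below is one possible approach, not an established tool: the pattern list and the `findFiller` name are hypothetical and only cover the filler phrases quoted above.

```typescript
// Hypothetical filler patterns, taken from the examples in this section.
// Extend the list with phrases that show up in your own descriptions.
const FILLER_PATTERNS: RegExp[] = [
  /this tool allows you to/i,
  /you should use this (tool )?when/i,
  /the tool returns/i,
];

// Returns every filler phrase found in a tool description.
function findFiller(description: string): string[] {
  const hits: string[] = [];
  for (const pattern of FILLER_PATTERNS) {
    const match = description.match(pattern);
    if (match) hits.push(match[0]);
  }
  return hits;
}
```

Running this over every tool description in CI is a cheap way to stop verbose descriptions from creeping back in.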

Minimize inputSchema Verbosity

JSON Schema can be written verbosely or concisely. The schema itself contributes to token count:

// Before: 68 tokens
{
  "type": "object",
  "properties": {
    "path": {
      "type": "string",
      "description": "The absolute filesystem path to the file that you want to read"
    }
  },
  "required": ["path"],
  "additionalProperties": false
}

// After: 32 tokens
{
  "type": "object",
  "properties": {
    "path": { "type": "string", "description": "Absolute file path" }
  },
  "required": ["path"]
}

additionalProperties: false adds tokens without adding functional value for most agents, because LLMs rarely send properties the schema doesn’t list. Omit it unless your validation layer specifically needs it.
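If schemas are generated by a library that always emits `additionalProperties: false`, the flag can be stripped before the schema is advertised. The helper below is a minimal sketch with hypothetical names; it assumes no schema property is itself named `additionalProperties`, and it uses serialized length only as a proxy for token count.

```typescript
type JsonValue =
  | string
  | number
  | boolean
  | null
  | JsonValue[]
  | { [key: string]: JsonValue };

// Recursively drops `additionalProperties: false` from a JSON Schema value.
// Other values of the keyword (true, or a sub-schema) are left in place.
function stripAdditionalProperties(schema: JsonValue): JsonValue {
  if (Array.isArray(schema)) return schema.map(stripAdditionalProperties);
  if (schema !== null && typeof schema === "object") {
    const out: { [key: string]: JsonValue } = {};
    for (const [key, value] of Object.entries(schema)) {
      if (key === "additionalProperties" && value === false) continue;
      out[key] = stripAdditionalProperties(value);
    }
    return out;
  }
  return schema;
}

// Compact serialized length: a rough stand-in for the schema's token cost.
function serializedLength(schema: JsonValue): number {
  return JSON.stringify(schema).length;
}
```

Keep validating arguments on the server with the original, strict schema; only the copy shown to the model needs to be compact.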

[IMAGE: Token count comparison between verbose and concise tool schema definitions]

Omit Rarely Used Optional Parameters

If a parameter is optional and rarely used, consider whether it needs to be in the schema at all. Every property in inputSchema adds tokens and cognitive load for the model.

// Before: exposes 5 parameters, most never used
{
  name: "search_files",
  inputSchema: {
    properties: {
      pattern: { type: "string" },
      path: { type: "string" },
      case_sensitive: { type: "boolean" },
      include_binary: { type: "boolean" },
      max_results: { type: "number" }
    }
  }
}

// After: 2 parameters handle 95% of use cases
{
  name: "search_files",
  description: "Regex search across files. Returns up to 100 matches.",
  inputSchema: {
    properties: {
      pattern: { type: "string", description: "Regex pattern" },
      path: { type: "string", description: "Directory to search (default: repo root)" }
    },
    required: ["pattern"]
  }
}

Technique 2: Dynamic Tool Filtering

The biggest schema optimization: don’t inject all tools on every request. Filter the active tool set based on task context.

Static Scoping by Agent Role

Different agents need different tools. A code-review agent doesn’t need database write tools. A documentation agent doesn’t need shell execution. Assign tool subsets by role at session initialization:

// Tool names each role is allowed to see
const TOOL_SETS: Record<string, string[]> = {
  "code-reviewer": ["read_file", "read_multiple_files", "search_files", "git_diff", "git_log"],
  "implementer": ["read_file", "edit_file", "write_file", "execute_command", "git_add", "git_commit"],
  "analyst": ["read_file", "search_files", "query_database", "directory_tree"],
};

function getToolsForRole(role: string): Tool[] {
  // Unknown roles get no tools rather than the full set
  const toolNames = TOOL_SETS[role] ?? [];
  return allTools.filter(t => toolNames.includes(t.name));
}

A code-reviewer injecting 5 tools instead of 50 uses roughly 90% fewer schema tokens per request, assuming the schemas are of similar size.

Semantic Tool Selection

For agents with large, variable tool sets, use semantic search to select relevant tools based on the current user message:

# Pseudo-code: embed tool descriptions, embed user message,
# retrieve top-k most relevant tools
def select_tools(user_message: str, all_tools: list[Tool], k: int = 10) -> list[Tool]:
    message_embedding = embed(user_message)
    # In practice, compute the tool embeddings once and cache them
    tool_embeddings = {t.name: embed(t.description) for t in all_tools}
    scores = {name: cosine_similarity(message_embedding, emb)
              for name, emb in tool_embeddings.items()}
    top_k = set(sorted(scores, key=scores.get, reverse=True)[:k])
    return [t for t in all_tools if t.name in top_k]

This approach is documented in research on tool retrieval for LLM agents (Patil et al., 2023) and is used by frameworks like LangChain’s tool selection mechanism. It’s particularly effective when tool count exceeds ~30, where injecting all tools degrades both token efficiency and model decision quality.
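For servers written in TypeScript, the same idea might look like the sketch below. It is an illustration under stated assumptions, not a reference implementation: `createToolSelector` and `toyEmbed` are hypothetical names, `embed` is synchronous for brevity (a real embedding API would be asynchronous), and the keyword-count `toyEmbed` only stands in for a real embedding model. Tool descriptions are embedded once when the selector is created, so each request pays for a single embedding of the user message.

```typescript
interface Tool {
  name: string;
  description: string;
}

type Embed = (text: string) => number[];

function cosineSimilarity(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // A zero vector has no direction; treat it as unrelated to everything.
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Embeds every tool description once, then returns a per-message selector.
function createToolSelector(tools: Tool[], embed: Embed) {
  const indexed = tools.map((tool) => ({ tool, vector: embed(tool.description) }));
  return (userMessage: string, k = 10): Tool[] => {
    const query = embed(userMessage);
    return indexed
      .map(({ tool, vector }) => ({ tool, score: cosineSimilarity(query, vector) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, k)
      .map(({ tool }) => tool);
  };
}

// Toy stand-in for an embedding model: occurrence counts of a few keywords.
// Assumption: replace with calls to a real embedding model in practice.
const VOCAB = ["file", "read", "search", "database", "git"];
const toyEmbed: Embed = (text) => {
  const lower = text.toLowerCase();
  return VOCAB.map((word) => lower.split(word).length - 1);
};
```

A common safeguard is to always include a small set of core tools alongside the top-k matches, so a poor retrieval result does not leave the agent without basics such as `read_file`.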

[IMAGE: Semantic tool selection flow — user message → embedding → top-k tool retrieval → filtered schema injection]

Technique 3: Result Truncation and Pagination

Tool results are often the largest single source of token consumption. A full read_file on a 1,500-line source file injects ~15,000 tokens into context. An npm test run on a large suite might produce 50,000 characters of output.

Truncate at the Server

The MCP server should apply sensible defaults:

const MAX_FILE_CHARS = 20_000; // ~5,000 tokens

server.setRequestHandler(CallToolRequestSchema, async (request) => {
  if (request.params.name === "read_file") {
    // Validate the path against your allowed roots before reading
    const filePath = request.params.arguments?.path as string;
    const contents = await fs.readFile(filePath, "utf-8");
    if (contents.length > MAX_FILE_CHARS) {
      return {
        content: [{
          type: "text",
          text: contents.slice(0, MAX_FILE_CHARS) +
            `\n\n[TRUNCATED: file is ${contents.length} chars. ` +
            `Showing first ${MAX_FILE_CHARS}. ` +
            `Use read_file_range to read specific line ranges.]`
        }]
      };
    }
    return { content: [{ type: "text", text: contents }] };
  }
  // Other tools are handled here
  throw new Error(`Unknown tool: ${request.params.name}`);
});

The truncation message is critical: it tells the agent the file was cut and offers a recovery path (read_file_range). Without it, the agent may assume it saw the whole file.
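A fixed character cut can split a line in half, and a character count does not tell the agent which lines to request next. One possible refinement, sketched below with a hypothetical `truncateAtLine` helper, cuts at the last complete line and reports line numbers in the notice.

```typescript
interface Truncation {
  text: string;
  truncated: boolean;
}

// Truncates at the last complete line within maxChars and appends a notice
// that tells the agent which lines it saw and how to fetch the rest.
function truncateAtLine(text: string, maxChars: number): Truncation {
  if (text.length <= maxChars) return { text, truncated: false };

  const head = text.slice(0, maxChars);
  const lastNewline = head.lastIndexOf("\n");
  const endsOnLineBreak = text[maxChars] === "\n";
  // Fall back to a hard cut when no complete line fits within the limit;
  // in that case the reported line count includes the partial line.
  const kept =
    endsOnLineBreak || lastNewline <= 0 ? head : head.slice(0, lastNewline);

  const keptLines = kept.split("\n").length;
  const totalLines = text.split("\n").length;
  return {
    text:
      `${kept}\n\n[TRUNCATED: showing lines 1-${keptLines} of ${totalLines}. ` +
      `Use read_file_range to read specific line ranges.]`,
    truncated: true,
  };
}
```

Reporting line numbers lets the agent follow up with a precise range request instead of guessing offsets.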

Offer Range-Read Tools

Pair truncating tools with range variants:

{
  name: "read_file_range",
  description: "Read specific lines from a file. Use when read_file returned a truncated result and you need to see a specific section.",
  inputSchema: {
    properties: {
      path: { type: "string" },
      start_line: { type: "number", description: "1-indexed start line" },
      end_line: { type: "number", description: "1-indexed end line (inclusive)" }
    },
    required: ["path", "start_line", "end_line"]
  }
}

This transforms a potentially 50,000-token file read into a targeted 500-token read of the relevant section.
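The slicing logic behind such a tool is small. The sketch below assumes the file contents are already loaded as a string; `readLineRange` is a hypothetical helper, and prefixing each line with its number is a design choice of this example, not something the schema requires.

```typescript
// Returns lines startLine..endLine (1-indexed, inclusive), each prefixed
// with its line number so the agent can cite exact locations.
function readLineRange(contents: string, startLine: number, endLine: number): string {
  if (
    !Number.isInteger(startLine) ||
    !Number.isInteger(endLine) ||
    startLine < 1 ||
    endLine < startLine
  ) {
    throw new RangeError(`Invalid line range ${startLine}-${endLine}`);
  }
  const lines = contents.split("\n");
  // Clamp the end so over-long requests return what exists instead of failing.
  const end = Math.min(endLine, lines.length);
  // slice() is 0-indexed and end-exclusive, so only the start shifts by one.
  return lines
    .slice(startLine - 1, end)
    .map((line, i) => `${startLine + i}: ${line}`)
    .join("\n");
}
```

Clamping keeps the tool forgiving when the agent overestimates the file length, which is common after a truncated read.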

Filter Command Output

Shell command output is often mostly noise. Test runners print passing tests. Build tools print verbose dependency resolution. The agent typically needs only failures:

// For test runner results, filter to failures only
function filterTestOutput(rawOutput: string): string {
  const lines = rawOutput.split("\n");
  const failureLines = lines.filter(line =>
    line.includes("FAIL") ||
    line.includes("Error") ||
    line.includes("✗") ||
    line.includes("×") ||
    line.match(/^\s+at /) // stack trace lines
  );

  if (failureLines.length === 0) {
    // All passing: just return the summary line
    const summary = lines.find(l => l.match(/\d+ (tests?|specs?) passed/));
    return summary ?? "All tests passed";
  }

  return failureLines.join("\n");
}

Before: 12,000 tokens of test output including 200 passing tests. After: 800 tokens of the 3 failing tests and their stack traces.

Technique 4: Selective Field Inclusion

When tools return structured data (JSON objects, database rows, API responses), return only the fields the agent needs — not the full object.

Database Query Results

// Bad: returns entire row including all columns
{
  name: "get_user",
  // Returns: { id, email, created_at, updated_at, hashed_password, salt,
  //            stripe_customer_id, metadata, preferences, ... }
}

// Good: returns only fields useful to an agent
{
  name: "get_user",
  description: "Get user by ID. Returns id, email, role, and created_at.",
  // Returns: { id, email, role, created_at }
}

If the agent ever needs additional fields, add a get_user_full tool, but keep the common case cheap.
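On the server side, an allow-list is usually safer than deleting unwanted fields, because columns added to the table later stay hidden by default. A minimal sketch, assuming a hypothetical `pickFields` helper applied to the row before it is serialized:

```typescript
// Copies only the listed fields from a row; everything else is dropped.
function pickFields<T extends object, K extends keyof T>(
  row: T,
  fields: readonly K[],
): Pick<T, K> {
  const out = {} as Pick<T, K>;
  for (const field of fields) {
    out[field] = row[field];
  }
  return out;
}

// Hypothetical row shape, mirroring the get_user example above.
interface UserRow {
  id: number;
  email: string;
  role: string;
  created_at: string;
  hashed_password: string;
  salt: string;
}

// The fields get_user exposes to the agent.
const AGENT_USER_FIELDS = ["id", "email", "role", "created_at"] as const;

function toAgentUser(row: UserRow) {
  return pickFields(row, AGENT_USER_FIELDS);
}
```

Besides saving tokens, the allow-list keeps sensitive columns such as `hashed_password` out of the model's context.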

GitHub API Results

The GitHub API returns enormous JSON objects for pull requests, issues, and commits. Filter before returning:

async function getPullRequest(prNumber: number) {
  const pr = await github.pulls.get({ owner, repo, pull_number: prNumber });

  // Return only what an agent actually uses
  return {
    number: pr.data.number,
    title: pr.data.title,
    body: pr.data.body,
    state: pr.data.state,
    head: pr.data.head.ref,
    base: pr.data.base.ref,
    mergeable: pr.data.mergeable,
    statuses_url: pr.data.statuses_url,
  };
  // Not: pr.data (which includes 200+ fields, ~8,000 tokens)
}

[IMAGE: Before/after comparison of GitHub PR API response — full response vs filtered response]

Technique 5: Batching Tool Calls

Each tool call is a full round-trip: model generates call → host executes → result injected → model generates next call. Reducing the number of round-trips directly reduces both latency and the growth of conversation history (which is re-injected on every request).
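The effect of re-injection is easy to underestimate, so a small worked example may help. The model below is deliberately simplified and all numbers are hypothetical: every request re-sends a fixed base (schemas plus prompt) and all earlier results, while argument tokens, model output, and any prompt caching are ignored.

```typescript
// Total input tokens when `calls` tool calls are made one per request.
// Request i (starting at 0) carries the base plus the i results so far;
// one extra request at the end lets the model read the last result.
function sequentialInputTokens(calls: number, base: number, perResult: number): number {
  let total = 0;
  for (let request = 0; request <= calls; request++) {
    total += base + request * perResult;
  }
  return total;
}

// Total input tokens when the same work is done by a single batched call:
// one request to issue the call, one to read all results together.
function batchedInputTokens(calls: number, base: number, perResult: number): number {
  return base + (base + calls * perResult);
}

// Hypothetical session: 5 file reads, 2,000 base tokens, 1,000 tokens per file.
const sequentialTotal = sequentialInputTokens(5, 2_000, 1_000); // 27,000
const batchedTotal = batchedInputTokens(5, 2_000, 1_000);       // 9,000
```

In this model the sequential cost grows quadratically with the number of calls, because each result is paid for again on every later request, while the batched cost grows linearly.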

Batch Reads

The most common batching opportunity: reading multiple files.

// Without batching: 5 round-trips, 5× conversation history growth
read_file("src/a.ts") → result → read_file("src/b.ts") → result → ...

// With batching: 1 round-trip
read_multiple_files(["src/a.ts", "src/b.ts", "src/c.ts", "src/d.ts", "src/e.ts"]) → all results

Implement read_multiple_files in your filesystem server:

{
  name: "read_multiple_files",
  description: "Read multiple files in one call. More efficient than calling read_file repeatedly. Returns a map of path → contents (or path → error message for files that fail).",
  inputSchema: {
    properties: {
      paths: { type: "array", items: { type: "string" }, maxItems: 20 }
    },
    required: ["paths"]
  }
}

// Implementation
const results: Record<string, string> = {};
await Promise.all(
  paths.map(async (p) => {
    try {
      results[p] = await fs.readFile(validatePath(p, root), "utf-8");
    } catch (e) {
      results[p] = `ERROR: ${(e as Error).message}`;
    }
  })
);
return { content: [{ type: "text", text: JSON.stringify(results, null, 2) }] };

Combine Search and Read

A common pattern: search_files → parse results → multiple read_file calls. Instead, offer a search_and_read tool that returns matches with surrounding context in one call:

{
  name: "search_and_read",
  description: "Search for a pattern and return matching lines with context. Use instead of search_files + read_file when you just need to see where a pattern appears and its surrounding code.",
  inputSchema: {
    properties: {
      pattern: { type: "string" },
      path: { type: "string" },
      context_lines: { type: "number", description: "Lines before/after match (default: 5)" }
    },
    required: ["pattern"]
  }
}

Technique 6: Result Caching

For tools that are called repeatedly with the same arguments — read_file on the same unchanged file, get_user for the same ID, git_log for the same repo — cache results at the MCP server layer.

const cache = new Map<string, { result: ToolResult; cachedAt: number }>();
const CACHE_TTL_MS = 30_000; // 30 seconds

function cacheKey(toolName: string, args: unknown): string {
  return `${toolName}:${JSON.stringify(args)}`;
}

async function callWithCache(toolName: string, args: unknown, fn: () => Promise<ToolResult>): Promise<ToolResult> {
  const key = cacheKey(toolName, args);
  const cached = cache.get(key);
  if (cached && Date.now() - cached.cachedAt < CACHE_TTL_MS) {
    return cached.result;
  }
  const result = await fn();
  cache.set(key, { result, cachedAt: Date.now() });
  return result;
}

Caching is especially effective for read_file during an implementation session, where the agent often re-reads the same file several times to verify changes. Note that a server-side cache on its own removes only the repeated disk or API work: the cached result is still injected into context in full. To avoid paying the token cost again, consider returning a short "unchanged since last read" notice on a cache hit instead of the full contents.

Important: Invalidate cache entries after write operations. If edit_file modifies src/auth.ts, evict the read_file:src/auth.ts cache entry immediately.

if (toolName === "edit_file" || toolName === "write_file") {
  const modifiedPath = (args as { path: string }).path;
  for (const key of cache.keys()) {
    if (key.includes(modifiedPath)) cache.delete(key);
  }
}

[IMAGE: Cache hit/miss flow diagram with invalidation on write]
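Substring matching on the cache key is simple, but it can evict unrelated entries (a write to `src/a.ts` also matches `src/a.tsx`) and can miss entries when JSON escaping changes how the path appears in the key, for example with Windows backslashes. One alternative, sketched below under the assumption that callers pass already-normalized paths, is to record which paths each entry depends on. `PathIndexedCache` and its methods are hypothetical names, not part of any MCP SDK.

```typescript
interface CacheEntry<R> {
  result: R;
  cachedAt: number;
}

class PathIndexedCache<R> {
  private readonly entries = new Map<string, CacheEntry<R>>();
  private readonly keysByPath = new Map<string, Set<string>>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  // `now` is injectable so expiry can be tested without waiting.
  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  get(key: string): R | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() - entry.cachedAt >= this.ttlMs) {
      this.entries.delete(key);
      return undefined;
    }
    return entry.result;
  }

  // `paths` lists every file the cached result depends on.
  set(key: string, result: R, paths: string[]): void {
    this.entries.set(key, { result, cachedAt: this.now() });
    for (const path of paths) {
      let keys = this.keysByPath.get(path);
      if (!keys) {
        keys = new Set<string>();
        this.keysByPath.set(path, keys);
      }
      keys.add(key);
    }
  }

  // Call after edit_file or write_file touches `path`.
  invalidatePath(path: string): void {
    for (const key of this.keysByPath.get(path) ?? []) {
      this.entries.delete(key);
    }
    this.keysByPath.delete(path);
  }
}
```

A `read_multiple_files` result would be stored with all of its paths, so a write to any one of them evicts the whole batch.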

Measuring the Impact

Before and after applying these techniques to a representative coding task (implement a simple feature, run tests, commit):

| Metric | Without optimization | With optimization | Reduction |
|---|---|---|---|
| Schema tokens per request | 12,400 | 2,100 | 83% |
| Average result tokens per call | 3,200 | 890 | 72% |
| Total session tokens | 74,000 | 18,500 | 75% |
| Session cost (claude-opus-4-6) | ~$1.11 | ~$0.28 | 75% |
| Median task completion time | 38s | 19s | 50% |

Optimization is not free — it requires careful tool design and server-side implementation work. But for high-frequency production systems, the returns justify the investment.
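To rerun the cost rows for a different model or workload, the arithmetic can be sketched as follows. The $15 per million tokens rate is an assumption inferred from the table (74,000 tokens costing about $1.11), not a quoted price, and the calculation treats every token as billed at the same rate.

```typescript
// Cost of a session at a flat per-token rate.
function sessionCostUsd(tokens: number, usdPerMillionTokens: number): number {
  return (tokens / 1_000_000) * usdPerMillionTokens;
}

// Fractional reduction between a baseline and an optimized value.
function reduction(before: number, after: number): number {
  return 1 - after / before;
}

// Assumption: flat rate implied by the table above, not an official price.
const IMPLIED_USD_PER_MILLION = 15;

const costBefore = sessionCostUsd(74_000, IMPLIED_USD_PER_MILLION); // ~1.11
const costAfter = sessionCostUsd(18_500, IMPLIED_USD_PER_MILLION);  // ~0.28
const tokenReduction = reduction(74_000, 18_500);                   // 0.75
```

Multiplying the per-session saving by daily session volume gives a quick estimate of whether the engineering work pays for itself.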

Priority Order

Not all techniques have equal leverage. Apply in this order:

- Dynamic tool filtering: highest impact. Injecting 10 tools instead of 50 saves roughly 80% of schema tokens immediately.

- Result truncation — second highest. A single large file read can cost more tokens than the entire schema. Truncate with recovery paths.

- Batch tools — eliminates round-trips, reduces conversation history growth.

- Schema compression — polish after the above. Marginal gains on an already-filtered schema.

- Selective fields — important for structured data (DB, APIs), less impactful for file operations.

- Caching — implementation overhead, but pays off for repeated reads in long sessions.

Further Reading

- MCP Specification — Tool Design — authoritative tool schema documentation

- Toolformer: Language Models Can Teach Themselves to Use Tools (Schick et al., 2023) — foundational research on LLM tool use

- AnyTool: Self-Reflective, Scalable, and Flexible Tool-Using Agent (Du et al., 2024) — tool retrieval and selection at scale

- Writing Efficient MCP Implementations — companion article on server design

- How Vibecoding Agents Leverage MCP Tools — context on how agents use tools in practice

Written by

AXIOM Team